Building Efficient Long-Context Retrieval-Augmented Generation Systems with Modern Techniques

Retrieval-augmented generation (RAG) with large language models (LLMs) is changing quickly. Until recently, RAG depended on a pragmatic but limiting approach: split long documents into small chunks, embed those chunks in a vector space, and retrieve the most relevant ones to add to the prompt. That strategy was dictated by small, expensive context windows, which typically ranged from 4,000 to 32,000 tokens. Models such as Google’s Gemini Pro and Anthropic’s Claude Opus, with context windows extending to a million tokens or more, have changed the picture. In principle, a window that size can hold several full-length novels at once, but it raises new problems of computational efficiency, cost, and, crucially, how well the model actually uses that much context. This article covers five techniques for building efficient long-context RAG systems from a developer’s standpoint, moving beyond simple chunking to address attention limitations and intelligent context reuse.

The Evolving Challenge of Long-Context RAG

Traditional RAG was a direct consequence of LLM architecture limits. Every token in a prompt passes through the model’s attention mechanism, so longer prompts mean more processing time and higher cost. Developers were therefore pushed toward aggressive chunking to fit the essential information within tight token budgets.

The introduction of models with million-token context windows, such as Gemini Pro and Claude Opus, represents a significant leap forward. These models possess the capacity to hold a substantially larger volume of information within their immediate processing scope. This capability promises to revolutionize how we interact with and leverage vast knowledge bases. Imagine an AI assistant capable of referencing an entire company’s documentation, a comprehensive legal library, or an extensive collection of scientific research papers simultaneously.

However, this newfound capacity is not without its hurdles. The sheer volume of information, even when technically accessible, presents two primary obstacles:

- Attention Distribution and the "Lost in the Middle" Phenomenon: LLMs, despite their advancements, exhibit limitations in how they distribute their "attention" across extremely long sequences of text. Research has highlighted a phenomenon where information placed in the middle of a very long context is less likely to be effectively processed or utilized by the model compared to information at the beginning or end. This means that simply dumping a massive amount of data into a prompt does not guarantee that the LLM will intelligently extract the most relevant insights from the core of the provided text.

- Cost and Latency Implications: While the raw token limit has expanded, the cost of processing these tokens remains a significant factor, especially for enterprise-level applications or high-volume usage. Furthermore, the computational resources required to attend to and process such extensive contexts can lead to increased latency, impacting the real-time responsiveness of RAG systems. Efficiently managing these resources is paramount to deploying practical and scalable solutions.

To navigate these complexities, developers must adopt more nuanced strategies. The following five techniques offer a roadmap for building RAG systems that are not only capable of handling extensive contexts but are also optimized for performance, accuracy, and cost-effectiveness.

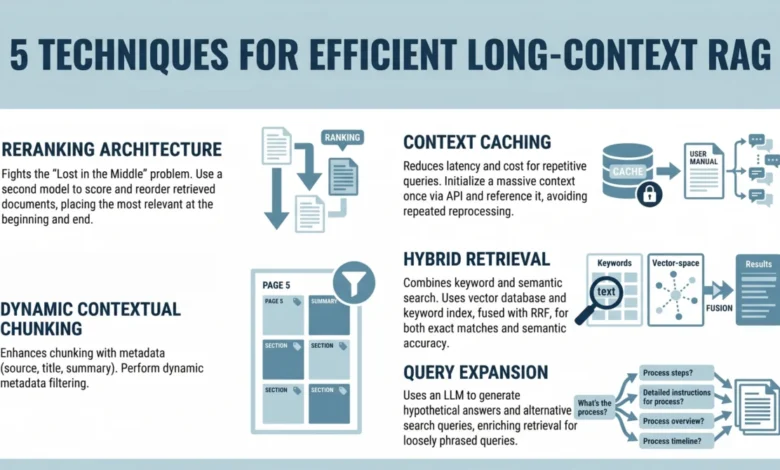

1. Implementing a Reranking Architecture to Combat "Lost in the Middle"

A widely cited 2023 study from Stanford University and UC Berkeley documented the "Lost in the Middle" problem. It showed empirically that, given long inputs, LLMs make the best use of information placed at the very beginning or the very end of the context window. Information in the middle of a long document or a long series of retrieved chunks is more likely to be overlooked or underweighted. This positional bias can undermine a RAG system, because crucial details may sit in exactly those neglected middle positions.

To counteract this limitation, a crucial architectural enhancement is to introduce a reranking step after the initial retrieval but before the final prompt construction. Instead of feeding the retrieved documents into the LLM prompt in the order they were initially discovered, a sophisticated reranking process ensures that the most vital pieces of information are strategically positioned to maximize the model’s attention.

The developer workflow for implementing this strategy typically involves the following stages:

- Initial Retrieval: The RAG system first performs a standard retrieval process, querying a knowledge base (e.g., a vector database) based on the user’s input. This yields an initial set of potentially relevant document chunks.

- Pre-Reranking Analysis: Before reranking, an analysis of the retrieved chunks can be performed. This might involve metadata checks, simple keyword frequency analysis, or even a quick pass through a smaller, faster LLM to assess the immediate relevance of each chunk to the query.

- Reranking Application: A dedicated reranking model or algorithm is then applied to the initially retrieved set. The reranker scores each chunk against the query and reorders the set by predicted relevance. For example, a cross-encoder, which processes the query and a chunk together as one input, can give a more nuanced relevance score than the bi-encoder typically used for initial retrieval, which embeds the query and the chunks separately.

- Strategic Prompt Construction: The reranked chunks are then assembled into the final prompt for the primary LLM. This construction can be carefully managed to ensure that the highest-ranked documents are placed at the extremities of the context window, thereby leveraging the LLM’s inherent attention biases for beneficial effect.

This placement gives the most important information the best chance of being used by the LLM, mitigating the "Lost in the Middle" effect and improving the quality and accuracy of generated responses. Reranking helps ensure that an expanded context window is valued for how well its contents are arranged, not just for its size.
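The rerank-then-place stages above can be sketched in a few lines. This is a minimal illustration, not a production reranker: `overlap_score` is a toy stand-in for a real relevance model such as a cross-encoder, and the function names `rerank` and `place_at_edges` are assumptions made for this example.

```python
from typing import Callable, List


def overlap_score(query: str, chunk: str) -> float:
    """Toy relevance score: fraction of query terms that appear in the chunk.

    Assumption for illustration only; a real system would call a reranking
    model here instead.
    """
    query_terms = set(query.lower().split())
    chunk_terms = set(chunk.lower().split())
    if not query_terms:
        return 0.0
    return len(query_terms & chunk_terms) / len(query_terms)


def rerank(query: str, chunks: List[str],
           score_fn: Callable[[str, str], float] = overlap_score) -> List[str]:
    """Return chunks sorted from most to least relevant to the query."""
    return sorted(chunks, key=lambda c: score_fn(query, c), reverse=True)


def place_at_edges(ranked: List[str]) -> List[str]:
    """Reorder ranked chunks so the strongest sit at the start and end.

    Rank 1 goes first, rank 2 goes last, rank 3 second, rank 4 second to
    last, and so on, which leaves the weakest chunks in the middle.
    """
    front: List[str] = []
    back: List[str] = []
    for i, chunk in enumerate(ranked):
        (front if i % 2 == 0 else back).append(chunk)
    return front + back[::-1]


retrieved = ["billing overview", "how to reset your password", "password policy"]
ranked = rerank("reset password", retrieved)
prompt_order = place_at_edges(ranked)
# prompt_order: best chunk first, second-best last, weakest in the middle
```

Keeping scoring and placement as separate functions means the scoring model can be swapped without touching the prompt layout logic.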

2. Leveraging Context Caching for Repetitive Queries

The sheer scale of long contexts, while offering immense potential, also introduces significant computational overhead. Processing hundreds of thousands, or even millions, of tokens repeatedly for every query can lead to substantial latency and escalating operational costs. This inefficiency becomes particularly pronounced in applications that handle a high volume of similar or repetitive queries, such as customer support chatbots, internal knowledge assistants, or interactive learning platforms that frequently revisit established topics.

Context caching emerges as a powerful solution to this problem by optimizing the use of computational resources. The core idea behind context caching is to initialize and maintain a persistent, relevant context for the LLM that can be reused across multiple interactions, thereby avoiding the need to re-process the entire vast context each time.

The developer workflow for implementing context caching involves:

- Persistent Context Initialization: For specific use cases or recurring themes, a substantial portion of the relevant long context is loaded and processed once. This could involve ingesting a primary knowledge base, a set of ongoing discussion logs, or critical reference documents.

- Caching Mechanism: The processed context, or a condensed representation of it, is stored in a readily accessible cache. This cache can be memory-based for speed, or a persistent storage solution for longer-term availability.

- Query Interception and Context Augmentation: When a new query arrives, the system first checks whether it pertains to the cached context. If it does, any new, query-specific information or recent conversational turns are appended after the cached context, so the cached portion stays unchanged and can be reused.

- Reduced Re-processing: Instead of re-processing the entire million-token context from scratch, the LLM primarily works with the pre-processed cached context, augmented by the new query-specific data. This dramatically reduces the computational load, latency, and cost associated with each interaction.

- Cache Invalidation and Updates: Mechanisms for invalidating and updating the cache are essential to ensure that the cached information remains relevant. This could involve time-based expiration, content change triggers, or explicit user commands to refresh the knowledge base.

This approach is particularly effective for chatbots built upon static or slowly evolving knowledge bases. For instance, a company’s internal HR policy documentation, a medical textbook for a diagnostic assistant, or a legal code for a legal research tool can all benefit immensely from context caching. By intelligently managing and reusing already processed information, context caching significantly enhances the efficiency and economic viability of long-context RAG systems, making them more practical for real-world deployment.
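The caching workflow above can be sketched at the application level as follows. This is a simplified model under stated assumptions: `ContextCache` and its `prepare` callback are hypothetical names, with `prepare` standing in for whatever expensive one-time processing a deployment performs. Hosted LLM providers expose their own caching features with different interfaces, and none of them is modeled here.

```python
import hashlib
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class CacheEntry:
    prepared: str       # result of the one-time processing step
    created_at: float   # clock reading used for time-based expiry


class ContextCache:
    """Reuses processed context until it expires or its content changes."""

    def __init__(self, prepare: Callable[[str], str],
                 ttl_seconds: float = 3600.0,
                 clock: Callable[[], float] = time.monotonic):
        self._prepare = prepare      # hypothetical expensive processing step
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, CacheEntry] = {}

    @staticmethod
    def _key(context: str) -> str:
        # Hashing the content means any edit to the knowledge base yields a
        # new key, which acts as a content-change invalidation trigger.
        return hashlib.sha256(context.encode("utf-8")).hexdigest()

    def get(self, context: str) -> str:
        key = self._key(context)
        entry = self._entries.get(key)
        now = self._clock()
        if entry is None or now - entry.created_at > self._ttl:
            # Miss or expired: process once and store the result.
            entry = CacheEntry(self._prepare(context), now)
            self._entries[key] = entry
        return entry.prepared

    def build_prompt(self, context: str, query: str) -> str:
        # Stable cached prefix first, query-specific text appended last.
        return f"{self.get(context)}\n\nQuestion: {query}"
```

A real deployment would also evict stale entries to bound memory, which this sketch omits. Placing the query after the cached prefix keeps that prefix identical across requests, which is what makes it reusable.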

3. Using Dynamic Contextual Chunking with Metadata Filters

While the expansion of LLM context windows alleviates the need for extremely granular chunking, the principle of relevance remains paramount. Simply increasing the volume of text fed into an LLM does not automatically guarantee improved performance; in fact, it can introduce more noise and dilute the impact of genuinely critical information. The challenge then shifts from fitting information into a small box to effectively navigating and prioritizing within a much larger one.

This is where dynamic contextual chunking, enhanced with metadata filters, becomes indispensable. This approach moves beyond static, fixed-size chunking and instead focuses on creating contextually relevant segments, enriched with descriptive metadata, that can be intelligently filtered and prioritized.

The developer workflow for implementing this technique includes:

- Intelligent Chunking Strategies: Instead of arbitrary fixed-size chunks, employ strategies that respect semantic boundaries. This might involve chunking by paragraphs, sections, or even by logical units of information (e.g., a specific product feature description, a single legal clause, or a distinct step in a process). The goal is to create chunks that represent coherent pieces of information.

- Enrichment with Metadata: Crucially, each chunk is associated with rich metadata. This metadata can include:

  - Source Document Information: Title, chapter, section number, page number.

  - Content Type: "Introduction," "Methodology," "Conclusion," "Code Snippet," "FAQ."

  - Date/Version: When the information was last updated.

  - Keywords/Tags: Manually assigned or automatically generated terms describing the chunk’s content.

  - Relevance Score (pre-computation): If a preliminary analysis is done during indexing, a rough relevance score can be attached.

- Metadata-Driven Filtering: During the retrieval and prompt construction phases, metadata filters are applied to narrow down the selection of relevant chunks. For example, if a user asks about a recent policy change, the system can prioritize chunks with recent timestamps or specific "policy update" tags.

- Dynamic Context Assembly: Based on the query and the applied metadata filters, the system dynamically assembles the final prompt. This allows for the inclusion of a more targeted and relevant set of information, even from a vast source. For instance, if a user is asking about troubleshooting a specific product model, the system can filter for chunks pertaining to that model and associated error codes, while ignoring general product descriptions.

By combining intelligent chunking with robust metadata, developers can create RAG systems that effectively prune irrelevant information and precisely deliver the most pertinent context. This dramatically reduces the "noise" within the long context, thereby improving the precision and accuracy of the LLM’s output and making the large context window a powerful tool rather than a data overload.
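As a rough sketch of the workflow above, the following splits a document on paragraph boundaries, attaches metadata to each chunk, and filters on that metadata before prompt assembly. The `Chunk` fields and the `chunk_by_paragraph` and `filter_chunks` helpers are illustrative assumptions; real systems usually push such filters down into the vector database query.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Set


@dataclass
class Chunk:
    text: str
    source: str           # source document title or identifier
    content_type: str     # e.g. "Policy", "FAQ", "Code Snippet"
    updated: date         # when the information was last updated
    tags: Set[str] = field(default_factory=set)


def chunk_by_paragraph(document: str, source: str, content_type: str,
                       updated: date, tags: Set[str]) -> List[Chunk]:
    """Split on blank lines so each chunk is one coherent paragraph."""
    paragraphs = [p.strip() for p in document.split("\n\n") if p.strip()]
    return [Chunk(p, source, content_type, updated, set(tags)) for p in paragraphs]


def filter_chunks(chunks: List[Chunk],
                  required_tags: Optional[Set[str]] = None,
                  updated_since: Optional[date] = None,
                  content_types: Optional[Set[str]] = None) -> List[Chunk]:
    """Keep only chunks that satisfy every filter that was supplied."""
    selected = []
    for chunk in chunks:
        if required_tags and not required_tags <= chunk.tags:
            continue  # missing at least one required tag
        if updated_since and chunk.updated < updated_since:
            continue  # too old
        if content_types and chunk.content_type not in content_types:
            continue  # wrong kind of content
        selected.append(chunk)
    return selected


current = chunk_by_paragraph(
    "Remote work is allowed two days per week.\n\nRequests go through your manager.",
    source="hr-handbook", content_type="Policy",
    updated=date(2024, 5, 1), tags={"policy update"})
outdated = chunk_by_paragraph(
    "Remote work is not allowed.",
    source="hr-handbook", content_type="Policy",
    updated=date(2021, 1, 1), tags={"policy update"})

# A question about a recent policy change keeps only recently updated chunks.
recent = filter_chunks(current + outdated,
                       required_tags={"policy update"},
                       updated_since=date(2024, 1, 1))
```

Filtering before semantic ranking shrinks the candidate set, so the chunks that reach the prompt are both on-topic and current.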

4. Combining Keyword and Semantic Search with Hybrid Retrieval

Vector search, the backbone of modern semantic retrieval, excels at capturing the meaning and intent behind a query, finding documents that are conceptually similar. However, it can sometimes falter when precise keyword matches are critical. For technical queries, specific jargon, product names, or unique identifiers, exact keyword matches are often essential for accuracy. Relying solely on semantic search might miss these crucial lexical details, leading to incomplete or inaccurate results.

Hybrid retrieval addresses this limitation by synergistically combining the strengths of both semantic (vector) search and traditional keyword-based (lexical) search. This integrated approach ensures that the RAG system can find information based on both conceptual understanding and exact term matching, providing a more comprehensive and robust retrieval mechanism.

The developer workflow for implementing hybrid retrieval involves:

- Dual Indexing: The knowledge base is indexed in two ways:

  - Vector Index: Document chunks are embedded into vector representations and stored in a vector database for semantic similarity searches.

  - Keyword Index: A traditional inverted index (e.g., using BM25 or similar algorithms) is created for efficient keyword searches.

- Query Decomposition and Execution: When a user query is received, it is processed by both search mechanisms:

  - Semantic Search: The query is embedded, and a vector similarity search is performed against the vector index.

  - Keyword Search: The query is parsed for keywords, and a lexical search is executed against the keyword index.

- Result Merging and Re-ranking: The results from both search types are then combined into a single ranked list. One option is a weighted combination of semantic similarity and keyword relevance scores, which requires normalizing the two scales first. Another common choice is reciprocal rank fusion (RRF), which uses only each document’s rank in each list and so avoids comparing raw scores across retrieval methods.

- Prompt Augmentation: The top-ranked documents from the hybrid retrieval process are then selected and used to augment the LLM prompt.

This combined approach ensures that the RAG system benefits from both the nuanced understanding of semantic search and the precise accuracy of keyword search. For example, a query like "What is the latest firmware version for the ‘QuantumLeap X100’ device?" would benefit from finding documents that contain the exact string "QuantumLeap X100" (keyword search) while also identifying documents discussing "firmware updates" and "device performance optimization" conceptually (semantic search).

Hybrid retrieval is particularly valuable in domains like technical documentation, scientific literature, and legal texts, where specific terminology and precise phrasing are critical for accurate information retrieval. By ensuring both semantic relevance and lexical accuracy, this technique significantly enhances the reliability and utility of long-context RAG systems.
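The merging step can be illustrated with a minimal reciprocal rank fusion sketch. Each document’s fused score is the sum of 1 / (k + rank) over every result list that contains it. The constant `k = 60` is a commonly used default and should be treated as a tunable assumption, and the document IDs below are made up for the example.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]],
                           k: int = 60) -> List[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Only ranks are used, so the semantic and keyword scores never need to
    be put on a common scale.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for deterministic output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


semantic_hits = ["d2", "d1", "d3"]   # hypothetical vector search results
keyword_hits = ["d1", "d4", "d2"]    # hypothetical BM25 results
fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
print(fused)  # ['d1', 'd2', 'd4', 'd3']
```

Documents that appear in both lists (`d1`, `d2`) rise to the top, while documents found by only one retriever still make the fused list.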

5. Applying Query Expansion with Summarize-Then-Retrieve

User queries are often a simplified, sometimes ambiguous, representation of the information they are truly seeking. The language used in a natural language query might differ significantly from the precise terminology or phrasing found within a knowledge base. This mismatch can lead to suboptimal retrieval results, even with advanced search techniques. Query expansion is a powerful technique designed to bridge this gap by broadening the scope of the initial search.

A particularly effective method for query expansion in the context of RAG is the "Summarize-Then-Retrieve" approach, often leveraging a lightweight LLM. This strategy aims to rephrase and enrich the original user query by generating hypothetical questions or statements that are more likely to match the content within the knowledge base, thereby improving the chances of retrieving the most relevant information.

The developer workflow for implementing query expansion using this method includes:

- Lightweight LLM Integration: A smaller, more efficient LLM (compared to the primary generation LLM) is used for the query expansion task. This keeps the process fast and cost-effective.

- Hypothetical Question Generation: The original user query is fed into the lightweight LLM, with instructions to generate a set of alternative phrasings, related questions, or hypothetical scenarios that capture the essence of the original query. The model is prompted to think about different ways the information might be expressed in the source documents.

- Example of Query Expansion:

  - User Query: "What do I do if the fire alarm goes off?"

  - Generated Hypotheticals:

    - "Emergency procedures for fire alarms."

    - "Steps to take during a fire alarm activation."

    - "Safety protocols when a fire alarm sounds."

    - "Immediate actions for a fire alarm event."

    - "Guide to fire alarm response."

- Expanded Search Execution: The original query, along with one or more of the generated hypothetical queries, is then used to perform the retrieval. This can involve executing multiple searches and aggregating the results, or using a combined query that incorporates elements of the original and expanded queries.

- Summarization and Refinement (Optional but Recommended): After retrieval, the most relevant retrieved documents can be briefly summarized by the lightweight LLM. This summary can then be used to further refine the original query for a subsequent retrieval pass or to provide context for the main LLM.

This technique is particularly useful for inferential queries, vaguely phrased questions, or queries where the user does not know the exact terminology used in the source material. By anticipating different ways the information might be expressed, query expansion with summarize-then-retrieve mainly improves recall. Merging and deduplicating the results, optionally followed by reranking, helps keep precision from suffering, so that even complex or indirectly phrased questions can yield accurate and comprehensive answers.
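The expanded search step can be sketched as below. Everything here is a stand-in: `fake_expand` returns canned rephrasings where a real system would call a lightweight LLM, `keyword_retrieve` is a toy lexical matcher over a three-document corpus invented for the example, and `expand_and_retrieve` is a hypothetical helper name.

```python
from typing import Callable, Dict, List

# Tiny made-up corpus, for illustration only.
CORPUS: Dict[str, str] = {
    "doc-evac": "Emergency procedures: evacuate via the nearest stairwell.",
    "doc-alarm": "Fire alarm testing happens on the first Monday.",
    "doc-parking": "Parking permits are issued by facilities.",
}


def keyword_retrieve(query: str) -> List[str]:
    """Toy retriever: match on shared words longer than four characters."""
    terms = {w.strip(".,?!:").lower() for w in query.split() if len(w) > 4}
    hits = []
    for doc_id, text in CORPUS.items():
        words = {w.strip(".,?!:").lower() for w in text.split()}
        if terms & words:
            hits.append(doc_id)
    return hits


def fake_expand(query: str) -> List[str]:
    """Stand-in for a lightweight LLM that rephrases the user query."""
    return [
        "Emergency procedures for fire alarms.",
        "Steps to take during a fire alarm activation.",
    ]


def expand_and_retrieve(query: str,
                        expand: Callable[[str], List[str]],
                        retrieve: Callable[[str], List[str]],
                        max_variants: int = 3) -> List[str]:
    """Search with the original query plus its variants, then deduplicate."""
    variants = [query] + expand(query)[:max_variants]
    seen = set()
    merged: List[str] = []
    for variant in variants:
        for doc_id in retrieve(variant):
            if doc_id not in seen:   # keep first occurrence only
                seen.add(doc_id)
                merged.append(doc_id)
    return merged


user_query = "What do I do if the fire alarm goes off?"
print(keyword_retrieve(user_query))  # ['doc-alarm']
print(expand_and_retrieve(user_query, fake_expand, keyword_retrieve))
# ['doc-alarm', 'doc-evac']
```

The original query misses the evacuation document because it shares no wording with it, and the rephrased variant recovers it. Capping `max_variants` bounds the extra retrieval cost per query.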

Conclusion: Towards More Intelligent and Efficient AI Interaction

The advent of LLMs with million-token context windows has ushered in a new era for retrieval-augmented generation. This expansion has shifted the focus from the mechanical challenge of fitting data into small containers to the sophisticated task of effectively navigating, prioritizing, and leveraging vast amounts of information. The inherent limitations of LLM attention mechanisms, particularly the "Lost in the Middle" phenomenon, coupled with the ongoing concerns regarding computational cost and latency, necessitate a move beyond naive data ingestion.

Five techniques address these problems. Strategic reranking counters attention biases. Context caching cuts the cost of repetitive queries. Dynamic contextual chunking with metadata filtering delivers precisely targeted information. Hybrid retrieval combines the accuracy of semantic and keyword search. Query expansion via summarize-then-retrieve bridges the gap between user intent and document language. Together, they let developers build RAG systems that are scalable, precise, and cost-effective.

The ultimate goal is not merely to provide LLMs with more data, but to ensure that these models can consistently and reliably access, interpret, and utilize the most pertinent information within expansive contexts. These modern techniques represent a crucial step towards building more intelligent, efficient, and ultimately more powerful AI applications that can truly harness the potential of vast knowledge bases. The evolution of RAG is a testament to the ongoing innovation in the field of AI, demonstrating a continuous effort to push the boundaries of what is possible with large language models and their integration with external information sources.