Building Trust in the AI Era with Privacy-Led UX

The practice of privacy-led user experience (UX) is emerging as a critical design philosophy that treats transparency around data collection and usage not as a mere regulatory hurdle but as a foundation for strong customer relationships. This approach reframes user consent from a perfunctory compliance exercise into the first step of an ongoing, trust-based dialogue between companies and their customers. For organizations that successfully integrate privacy-led UX, the rewards extend beyond higher consent rates to a less tangible but more enduring and valuable asset: deep consumer trust. This strategic shift is particularly pertinent in today's rapidly evolving digital landscape, where increasingly sophisticated AI technologies present both unprecedented opportunities and significant challenges for data privacy.

The growing recognition of privacy-led UX’s potential is a relatively recent development. Adelina Peltea, Chief Marketing Officer at Usercentrics, has observed a significant evolution in enterprise sentiment regarding this domain. "Even just a few years ago," Peltea stated, "this space was viewed more as a trade-off between growth and compliance. However, as the market has matured, there’s been a greater focus on how to tie well-designed privacy experiences to business growth." This sentiment reflects a broader industry realization that prioritizing user privacy can, in fact, become a powerful driver of both customer loyalty and commercial success.

The Evolution of Privacy as a Business Imperative

Historically, data privacy regulations such as the European Union's General Data Protection Regulation (GDPR), which took effect in May 2018, and the California Consumer Privacy Act (CCPA), which took effect in January 2020, have been perceived primarily as compliance mandates. These regulations impose strict rules on how companies collect, process, and store personal data, with significant penalties for non-compliance. Initial industry responses often involved implementing the bare minimum required to avoid fines, which led to cumbersome and often opaque consent mechanisms. This approach, however, proved to be a missed opportunity for genuine engagement.

The past five years have witnessed a significant paradigm shift. As consumers have become more aware of their data rights and the pervasive nature of data collection, they have increasingly demanded greater control and transparency. This heightened consumer awareness, coupled with ongoing regulatory developments and high-profile data breaches, has pushed privacy from a back-office compliance concern to a front-and-center strategic priority for businesses. Companies are now beginning to understand that proactively embracing privacy can differentiate them in a crowded marketplace and build a competitive advantage.

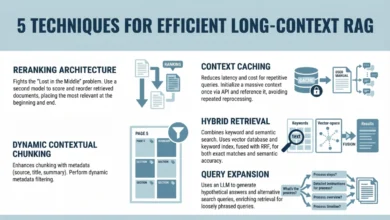

The Usercentrics report, titled "Building Trust in the AI Era with Privacy-Led UX," delves into this crucial intersection of data transparency, consumer trust, and business performance. It highlights how well-designed, value-forward consent experiences consistently exceed initial performance projections. These experiences are not confined to a single touchpoint but are woven into various aspects of the user journey, including:

- Consent Management Platforms (CMPs): These tools provide users with granular control over which data they consent to share and for what purposes.

- Terms and Conditions & Privacy Policies: Traditionally dense and complex legal documents, these are increasingly being reimagined for clarity and accessibility.

- Data Subject Access Request (DSAR) Tools: These empower individuals to request access to the personal data a company holds about them, fostering transparency and accountability.

- AI Data Use Disclosures: As AI becomes more integrated into products and services, clear explanations of how AI systems utilize personal data are becoming paramount.

The report underscores that effective privacy practices are not just about adhering to legal obligations; they are about building a foundational relationship with users based on mutual respect and understanding.

The Tangible Benefits of Transparency and Trust

The assertion that well-designed privacy experiences routinely outperform initial estimates is supported by a growing body of evidence. Companies that prioritize transparency often see higher engagement rates and more valuable interactions with their users. When users understand what data is being collected, why it is being collected, and how it will be used, they are more likely to grant consent and to engage more deeply with the product or service, because they feel empowered and respected rather than manipulated or exploited.

For instance, a study by Usercentrics found that personalized consent banners, which offer clear choices and explanations, can lead to significantly higher consent rates compared to generic, opt-out-heavy banners. While specific figures vary across industries and user demographics, improvements of 10-20% in consent rates have been observed in some implementations. More importantly, the quality of consent obtained through transparent means is higher. Users who understand and actively choose to share their data are more likely to be engaged customers, providing more accurate and valuable data, and exhibiting greater loyalty.

The implications for business performance are multifaceted:

- Enhanced Customer Loyalty: Trust is the bedrock of long-term customer relationships. When customers feel their privacy is respected, they are less likely to churn and more likely to advocate for the brand.

- Improved Data Quality: Consensual data collection leads to more accurate and reliable data, which in turn fuels more effective marketing campaigns, better product development, and more personalized user experiences.

- Competitive Differentiation: In an era of increasing data skepticism, companies that champion privacy can stand out from competitors who are perceived as less transparent or trustworthy.

- Reduced Risk: Proactive privacy measures can mitigate the risk of regulatory fines, data breaches, and the associated reputational damage.

The report specifically examines how this data transparency builds trust, how this trust can support business performance, and crucially, how organizations can sustain this trust even as AI systems introduce new layers of complexity into consent processes.

Navigating the AI Frontier: New Challenges and Opportunities

The advent of sophisticated AI systems has introduced a new dimension to the privacy landscape. AI's ability to process vast amounts of data, identify patterns, and make predictions raises novel questions about data usage and user consent. For example, using AI for personalized advertising, content recommendations, or automated decisions within applications requires a clear understanding of how personal data is being used.

Timeline of Key Developments:

- May 2018: GDPR implementation in the European Union, setting a global precedent for data privacy.

- January 2020: CCPA comes into effect in California, granting consumers new rights over their personal information.

- Ongoing: Continuous development of AI technologies, leading to new data processing capabilities and privacy considerations.

- Present: Growing industry focus on privacy-led UX as a strategic differentiator and a means to build consumer trust, particularly in the context of AI.

The report highlights that organizations must be prepared to explain AI-driven data usage in a clear and understandable manner. This could involve:

- AI Data Use Disclosures: Providing users with specific information about how their data is used by AI algorithms. This goes beyond general statements in privacy policies and offers concrete examples.

- Explainable AI (XAI): Efforts to make AI decision-making processes more transparent and understandable to both regulators and end-users.

- Granular Consent for AI: Allowing users to consent to specific AI-driven functionalities or data uses, rather than broad, all-encompassing agreements.

For instance, if an AI system is used to personalize product recommendations, a privacy-led UX would clearly inform the user about this, explain the type of data used for this personalization (e.g., browsing history, purchase history), and provide an easy way to opt out of or adjust the level of personalization. This level of detail is crucial for maintaining trust, especially as AI's influence on user experience becomes more profound.
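To make the recommendation example concrete, here is a minimal sketch of how granular, per-purpose consent for AI personalization might be modeled. It assumes a hypothetical three-level setting (off, basic, full) where each level maps to the data categories the recommendation model may read. All names (`AIConsent`, `PersonalizationLevel`, `ALLOWED_CATEGORIES`, the category strings) are illustrative assumptions and do not come from the report or from any Usercentrics product.

```python
from dataclasses import dataclass, field
from enum import Enum


class PersonalizationLevel(Enum):
    """Hypothetical levels a user can choose for AI-driven recommendations."""
    OFF = 0    # no personal data used; generic recommendations only
    BASIC = 1  # purchase history only
    FULL = 2   # purchase history and browsing history


# Hypothetical mapping from each level to the data categories the AI may read.
ALLOWED_CATEGORIES = {
    PersonalizationLevel.OFF: frozenset(),
    PersonalizationLevel.BASIC: frozenset({"purchase_history"}),
    PersonalizationLevel.FULL: frozenset({"purchase_history", "browsing_history"}),
}


@dataclass
class AIConsent:
    """Per-user consent for one AI purpose; defaults to OFF until the user opts in."""
    purpose: str = "ai_product_recommendations"
    level: PersonalizationLevel = PersonalizationLevel.OFF
    history: list = field(default_factory=list)  # (old_level, new_level) pairs

    def set_level(self, new_level: PersonalizationLevel) -> None:
        # Keep an audit trail of changes so they can be disclosed to the user later.
        self.history.append((self.level, new_level))
        self.level = new_level

    def filter_signals(self, signals: dict) -> dict:
        # Pass to the model only the data categories the current level permits.
        allowed = ALLOWED_CATEGORIES[self.level]
        return {name: value for name, value in signals.items() if name in allowed}


if __name__ == "__main__":
    consent = AIConsent()
    signals = {
        "purchase_history": ["shoes"],
        "browsing_history": ["hats"],
        "location": "Berlin",
    }
    print(consent.filter_signals(signals))  # {}
    consent.set_level(PersonalizationLevel.BASIC)
    print(consent.filter_signals(signals))  # {'purchase_history': ['shoes']}
```

Defaulting to `OFF` means no personal data reaches the model until the user actively chooses a level, and data categories outside the chosen level (such as `location` here) are never passed on. Tying each level to an explicit list of categories is what allows the interface to tell the user exactly which data each setting uses.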

Broader Impact and Implications

The shift towards privacy-led UX has far-reaching implications beyond individual companies. It signals a broader societal movement towards greater digital accountability and user empowerment. As more companies adopt these principles, it can create a virtuous cycle, encouraging competitors to follow suit and raising the overall standard for data privacy across the digital ecosystem.

The report serves as a call to action for businesses to move beyond a compliance-centric mindset and embrace privacy as a strategic enabler of growth and trust. The insights contained within suggest that investing in well-designed privacy experiences is not just a responsible choice, but a financially sound one. The intangible asset of consumer trust, when cultivated through genuine transparency and respect for user data, can yield dividends in loyalty, engagement, and long-term business sustainability.

The research, design, and writing of this report were conducted by human writers, editors, analysts, and illustrators. AI tools may have been utilized for secondary production processes but were subject to thorough human review, ensuring the integrity and quality of the content. This commitment to human oversight underscores the importance of transparency and accountability in all aspects of digital engagement, mirroring the very principles that privacy-led UX seeks to champion.

The full report, "Building Trust in the AI Era with Privacy-Led UX," is available for download, offering a comprehensive examination of these critical issues and actionable strategies for organizations seeking to navigate the complex landscape of data privacy and AI.