A Hands-On Guide to Testing Agents with RAGAs and G-Eval

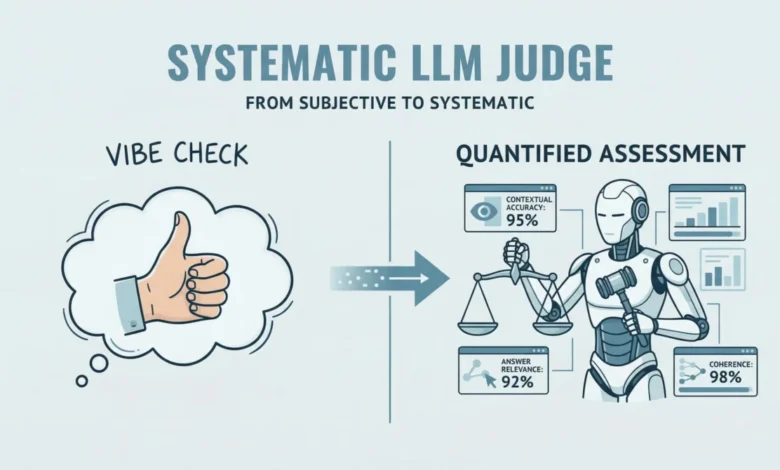

Rigorously evaluating the performance of Large Language Model (LLM) applications is essential. This article provides a practical, hands-on workflow for assessing LLM and agent-based applications with RAGAs (Retrieval-Augmented Generation Assessment) and G-Eval-based frameworks. The approach moves beyond subjective assessments to quantifiable metrics that help ensure the reliability and quality of these systems.

The Imperative for Robust LLM Evaluation

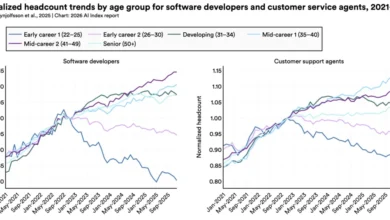

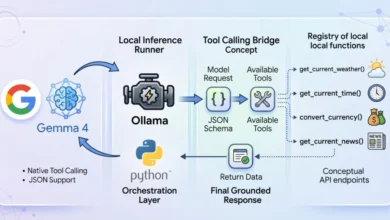

The proliferation of LLMs has opened up a vast array of possibilities across industries, from customer service chatbots and content generation tools to complex decision-support systems. However, the inherent probabilistic nature of these models, coupled with the intricate architectures of modern AI agents, necessitates sophisticated evaluation methodologies. Traditional testing methods often fall short when faced with the nuanced outputs and emergent behaviors of LLMs. This gap has spurred the development of specialized frameworks designed to quantify and qualify the performance of these systems.

RAGAs, an open-source evaluation framework, stands at the forefront of this movement. It aims to replace subjective "vibe checks" with an LLM-driven "judge" that systematically quantifies the quality of Retrieval-Augmented Generation (RAG) pipelines. RAGAs focuses on assessing key desirable properties of RAG systems, such as contextual accuracy and answer relevance. Beyond RAG architectures, RAGAs has evolved to encompass agent-based applications, where methodologies like G-Eval are instrumental in defining custom, interpretable evaluation criteria.

To facilitate a comprehensive testing environment, this article will utilize DeepEval. This framework integrates multiple evaluation metrics into a unified testing sandbox, simplifying the process of implementing and running evaluations. For those new to LLM evaluation frameworks, a foundational understanding of RAG concepts is recommended, with a related article available for further exploration.

A Step-by-Step Practical Workflow

The following guide is designed for seamless execution in both standalone Python Integrated Development Environments (IDEs) and Google Colab notebooks. Users may encounter ModuleNotFoundError if certain libraries are not installed, necessitating a simple pip install command to resolve these dependencies.
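One way to catch missing dependencies before a ModuleNotFoundError appears is to check up front which packages can be imported. The sketch below is a minimal example: the helper name `missing_packages` is hypothetical, and the package list assumes that the PyPI names `openai`, `ragas`, `datasets`, and `deepeval` match their import names.

```python
import importlib.util


def missing_packages(names):
    """Return the top-level module names that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Assumed package list for this guide; adjust it to your own setup.
    required = ["openai", "ragas", "datasets", "deepeval"]
    missing = missing_packages(required)
    if missing:
        print("Install the missing packages with: pip install " + " ".join(missing))
    else:
        print("All required packages are importable.")
```

In a Colab notebook the suggested command would be run in a cell prefixed with `!`.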

Simulating a Basic Agent

Our journey begins with defining a foundational function that simulates a simple agent. This function accepts a user query and interacts with an LLM API, such as OpenAI’s, to generate a response. This represents a fundamental input-response workflow, intentionally kept simple to focus on the evaluation aspect.

    import openai

    def simple_agent(query):
        # NOTE: this is a 'mock' agent loop
        # In a real scenario, you would use a system prompt to define tool usage
        prompt = f"You are a helpful assistant. Answer the user query: {query}"

        # Example using OpenAI (this can be swapped for Gemini or another provider)
        response = openai.chat.completions.create(
            model="gpt-3.5-turbo",
            messages=[{"role": "user", "content": prompt}]
        )
        return response.choices[0].message.content

While this "mock" agent is a simplified representation, a production-ready agent would incorporate advanced capabilities like reasoning, planning, and tool execution. The current design prioritizes clarity for the evaluation demonstration.
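Because the agent calls a paid API directly, it can be awkward to exercise in automated tests. A common pattern, sketched below, is to pass the completion function in as a parameter so that a stub can stand in for the real model. The names `make_agent`, `complete`, and `fake_llm` are hypothetical and are not part of the agent defined above.

```python
from typing import Callable


def make_agent(complete: Callable[[str], str]) -> Callable[[str], str]:
    """Build an agent around any function that maps a prompt to a completion."""

    def agent(query: str) -> str:
        prompt = f"You are a helpful assistant. Answer the user query: {query}"
        return complete(prompt)

    return agent


def fake_llm(prompt: str) -> str:
    """Stub that returns a canned answer instead of calling a provider."""
    if "capital of Japan" in prompt:
        return "Tokyo is the capital."
    return "I don't know."


offline_agent = make_agent(fake_llm)
print(offline_agent("What is the capital of Japan?"))
```

With this shape, the real provider call can be wrapped in a function with the same signature and swapped in without touching the evaluation code.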

Introducing RAGAs: Quantifying Faithfulness

We now introduce RAGAs to evaluate a question-answering scenario. The initial focus is on the faithfulness metric, which rigorously measures how accurately the generated answer aligns with the provided contextual information.

    from datasets import Dataset
    from ragas import evaluate
    from ragas.metrics import faithfulness

    # Defining a simple testing dataset for a question-answering scenario
    data = {
        "question": ["What is the capital of Japan?"],
        "answer": ["Tokyo is the capital."],
        "contexts": [["Japan is a country in Asia. Its capital is Tokyo."]]
    }

    # RAGAs expects a Dataset object rather than a plain dict
    dataset = Dataset.from_dict(data)

    # Running RAGAs evaluation
    result = evaluate(dataset, metrics=[faithfulness])

Note that running these examples requires sufficient API quota from a provider such as OpenAI or Gemini, which often means a paid account.
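As a rough intuition for the faithfulness score, RAGAs describes it as the fraction of claims in the answer that can be inferred from the retrieved context. The sketch below reproduces only that final ratio. In the real metric an LLM extracts the claims and judges each one, so the hand-written verdicts and the helper name `faithfulness_ratio` are assumptions made for illustration.

```python
def faithfulness_ratio(verdicts):
    """Fraction of answer claims judged as supported by the context.

    `verdicts` holds one boolean per claim: True if the context supports it.
    """
    if not verdicts:
        raise ValueError("at least one claim is required")
    return sum(verdicts) / len(verdicts)


# "Tokyo is the capital." contains a single claim, and the context supports it.
print(faithfulness_ratio([True]))

# Hypothetical answer with three claims, one of which the context does not support.
print(faithfulness_ratio([True, True, False]))
```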

Expanding Evaluation with Answer Relevancy

To further illustrate the capabilities of RAGAs, we can incorporate an additional metric, answer_relevancy, and utilize a more structured dataset. This allows for a more nuanced assessment of the LLM’s output.

We begin by defining a set of test cases, each comprising a question, the generated answer, supporting contexts, and the ground truth for comparison.

    test_cases = [
        {
            "question": "How do I reset my password?",
            "answer": "Go to settings and click 'forgot password'. An email will be sent.",
            "contexts": ["Users can reset passwords via the Settings > Security menu."],
            "ground_truth": "Navigate to Settings, then Security, and select Forgot Password."
        }
    ]

Before proceeding, ensure your API key is properly configured. We first demonstrate the evaluation process without wrapping the logic in an agent structure.

    import os

    from ragas import evaluate
    from ragas.metrics import faithfulness, answer_relevancy
    from datasets import Dataset

    # IMPORTANT: Replace "YOUR_API_KEY" with your actual API key
    os.environ["OPENAI_API_KEY"] = "YOUR_API_KEY"

    # Convert list to Hugging Face Dataset (required by RAGAs)
    dataset = Dataset.from_list(test_cases)

    # Run evaluation
    ragas_results = evaluate(dataset, metrics=[faithfulness, answer_relevancy])

    print(f"RAGAs Faithfulness Score: {ragas_results['faithfulness']}")
    print(f"RAGAs Answer Relevancy Score: {ragas_results['answer_relevancy']}")

Simulating an Agent-Based RAGAs Evaluation

To simulate an agent-based workflow, we can encapsulate the RAGAs evaluation logic into a reusable function. This approach promotes modularity and makes the evaluation process more akin to how an AI agent might orchestrate tasks.

    import os

    from ragas import evaluate
    from ragas.metrics import faithfulness, answer_relevancy
    from datasets import Dataset

    def evaluate_ragas_agent(test_cases, openai_api_key="YOUR_API_KEY"):
        """
        Simulates a simple AI agent that performs RAGAs evaluation.
        """
        os.environ["OPENAI_API_KEY"] = openai_api_key

        # Convert test cases into a Dataset object
        dataset = Dataset.from_list(test_cases)

        # Run evaluation
        ragas_results = evaluate(dataset, metrics=[faithfulness, answer_relevancy])
        return ragas_results

The Hugging Face Dataset object is a crucial component here, designed for efficient handling of structured data, which is fundamental for both LLM evaluation and inference tasks.
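The two data shapes used so far are related: the earlier `data` dictionary stores one list per column, while `test_cases` stores one dictionary per row. `Dataset.from_list` accepts the row form, and the library's `Dataset.from_dict` accepts the column form. The pure-Python sketch below shows that correspondence without requiring the `datasets` library. `rows_to_columns` is a hypothetical helper, not a library function, and it assumes every row has the same keys.

```python
def rows_to_columns(rows):
    """Convert a list of row dicts into a dict of column lists."""
    if not rows:
        return {}
    keys = list(rows[0].keys())
    for row in rows:
        if set(row.keys()) != set(keys):
            raise ValueError("all rows must share the same keys")
    return {key: [row[key] for row in rows] for key in keys}


rows = [
    {
        "question": "What is the capital of Japan?",
        "answer": "Tokyo is the capital.",
        "contexts": ["Japan is a country in Asia. Its capital is Tokyo."],
    }
]
columns = rows_to_columns(rows)
print(columns["question"])
print(columns["contexts"])
```

Note that `contexts` becomes a list of lists in the column form, because each row carries its own list of context strings.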

The following code snippet demonstrates how to invoke this evaluation function:

    my_openai_key = "YOUR_API_KEY"  # Replace with your actual API key

    if 'test_cases' in globals():
        evaluation_output = evaluate_ragas_agent(test_cases, openai_api_key=my_openai_key)
        print("RAGAs Evaluation Results:")
        print(evaluation_output)
    else:
        print("Please define the 'test_cases' variable first. Example:")
        print("test_cases = [{'question': '...', 'answer': '...', 'contexts': [...], 'ground_truth': '...'}]")

DeepEval: Qualitative Assessment with G-Eval

Moving beyond the quantitative metrics provided by RAGAs, we introduce DeepEval, which offers a qualitative evaluation layer. This framework employs a reasoning-and-scoring approach, making it particularly adept at assessing attributes such as coherence, clarity, and professionalism. This is where G-Eval, a method for defining custom, interpretable evaluation criteria, plays a significant role.

    from deepeval.metrics import GEval
    from deepeval.test_case import LLMTestCase, LLMTestCaseParams

    # STEP 1: Define a custom evaluation metric
    coherence_metric = GEval(
        name="Coherence",
        criteria="Determine if the answer is easy to follow and logically structured.",
        evaluation_params=[LLMTestCaseParams.INPUT, LLMTestCaseParams.ACTUAL_OUTPUT],
        threshold=0.7  # Pass/fail threshold
    )

    # STEP 2: Create a test case
    # Assuming test_cases is defined as above and we are evaluating the first case
    case = LLMTestCase(
        input=test_cases[0]["question"],
        actual_output=test_cases[0]["answer"]
    )

    # STEP 3: Run evaluation
    coherence_metric.measure(case)

    print(f"G-Eval Score: {coherence_metric.score}")
    print(f"Reasoning: {coherence_metric.reason}")

The G-Eval metric, as demonstrated, allows for the definition of specific criteria that an LLM will use to evaluate the provided input and actual output. The threshold parameter enables a binary pass/fail outcome, while the reason attribute provides valuable insight into the LLM’s assessment process.
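When several test cases are judged, the same threshold logic can be applied across the whole batch. The sketch below is plain Python and does not call DeepEval. The `Judged` record and `summarize` helper are hypothetical, and the scores and reasons are made-up placeholders rather than real model output. It assumes a case passes when its score is at least the threshold.

```python
from dataclasses import dataclass


@dataclass
class Judged:
    name: str
    score: float
    reason: str


def summarize(results, threshold=0.7):
    """Return the pass rate and the list of cases scoring below the threshold."""
    if not results:
        raise ValueError("no results to summarize")
    failures = [r for r in results if r.score < threshold]
    pass_rate = (len(results) - len(failures)) / len(results)
    return pass_rate, failures


# Placeholder scores and reasons, for illustration only.
results = [
    Judged("password reset", 0.9, "Steps are ordered and easy to follow."),
    Judged("billing question", 0.4, "Answer jumps between unrelated topics."),
]
pass_rate, failures = summarize(results)
print(f"Pass rate: {pass_rate:.0%}")
for failure in failures:
    print(f"FAILED {failure.name}: {failure.reason}")
```

Keeping the reason next to each failing score makes it easier to see why a case fell below the threshold, not just that it did.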

Key Steps in the Evaluation Workflow

The comprehensive evaluation of LLM applications involves several critical steps:

- Define Agent Functionality: Clearly articulate the purpose and expected behaviors of the LLM agent. This includes specifying its role, the tools it can access, and the types of queries it is designed to handle.

- Develop Test Cases: Construct a diverse and representative set of test cases. These should cover various scenarios, including common queries, edge cases, and potentially adversarial inputs, to thoroughly probe the agent’s capabilities. Each test case should include the input, expected output (or ground truth), and relevant context.

- Integrate Evaluation Frameworks: Select and integrate appropriate evaluation frameworks like RAGAs and DeepEval. RAGAs is ideal for quantitative assessment of RAG pipelines and agent outputs, focusing on metrics like faithfulness and relevancy. DeepEval, with its G-Eval capabilities, excels in qualitative assessments, evaluating aspects like coherence, clarity, and adherence to specific criteria.

- Configure API Access: Ensure secure and proper configuration of API keys for the LLM providers being used. This is crucial for enabling the evaluation frameworks to interact with the models.

- Execute Evaluations: Run the defined test cases through the integrated evaluation frameworks. This process generates quantitative scores and qualitative feedback.

- Analyze Results and Iterate: Carefully analyze the evaluation results. Identify areas where the agent performs well and areas that require improvement. Use this analysis to refine the agent’s prompts, underlying logic, or even the choice of LLM. This iterative process is key to building robust and high-performing AI systems.
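The steps above can be sketched as a small harness that runs an agent over test cases and applies any number of scoring functions. Everything here is a stand-in: `run_suite`, `echo_agent`, and `exact_match` are hypothetical names, the test data is invented for the example, and a real pipeline would plug in the RAGAs and DeepEval metrics in place of the toy scorer.

```python
def run_suite(agent, test_cases, scorers):
    """Run `agent` on each case and score the output with every scorer.

    `scorers` maps a metric name to a function (case, output) -> float.
    """
    report = []
    for case in test_cases:
        output = agent(case["question"])
        scores = {name: scorer(case, output) for name, scorer in scorers.items()}
        report.append({"question": case["question"], "output": output, "scores": scores})
    return report


def echo_agent(question):
    """Stub agent with canned answers, used instead of a real LLM call."""
    canned = {"What is the capital of Japan?": "Tokyo"}
    return canned.get(question, "unknown")


def exact_match(case, output):
    """Toy scorer: 1.0 if the output equals the ground truth, else 0.0."""
    return 1.0 if output == case["ground_truth"] else 0.0


cases = [
    {"question": "What is the capital of Japan?", "ground_truth": "Tokyo"},
    {"question": "What is the capital of France?", "ground_truth": "Paris"},
]
report = run_suite(echo_agent, cases, {"exact_match": exact_match})
for row in report:
    print(row["question"], row["scores"])
```

Because the agent and the scorers are passed in as plain functions, the same loop can be rerun after each prompt or model change to compare results across iterations.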

Summary and Broader Implications

This article has provided a practical guide to evaluating LLM and retrieval-augmented applications using RAGAs and G-Eval-based frameworks within the DeepEval ecosystem. By combining quantitative metrics, such as faithfulness and answer relevancy, with qualitative assessments like coherence, developers can construct a more comprehensive and reliable evaluation pipeline for modern AI systems.

The ability to systematically test and validate LLM applications is not merely an academic exercise; it is a critical necessity for their successful deployment in real-world scenarios. As LLM technology continues to advance, the demand for robust evaluation tools will only grow. Frameworks like RAGAs and DeepEval are empowering developers and organizations to build trust in their AI systems by providing transparent, data-driven insights into their performance. This rigorous approach to evaluation ultimately leads to more dependable, effective, and ethically sound AI solutions, paving the way for broader adoption and innovation across all sectors. The future of AI hinges on our ability to not only build powerful models but also to understand and control their behavior with precision.