HiBob HR Platform Review: A Modern People-First Solution for Global Hybrid Teams

HiBob, often referred to simply as "Bob," represents a significant shift in the Human Resources Information System (HRIS) landscape, moving away from the rigid, administration-heavy databases of the past toward a dynamic, employee-centric experience. As the global workforce continues to navigate remote, hybrid, and geographically dispersed employment models, demand has grown for software that fosters culture rather than just tracking hours. HiBob was engineered from its inception to address these challenges, positioning itself as a "people management platform" rather than a traditional HR tool. By prioritizing scalability, agility, and employee engagement, HiBob aims to help organizations of varying sizes, from mid-market growth companies to large enterprises, boost productivity and retention through a data-driven approach to human capital.

The Evolution of HR Tech: Context and Background

HiBob emerged in 2015 at a pivotal moment for the technology sector. Founded by Ronni Zehavi and Israel David, the company sought to disrupt a market split between legacy systems like SAP and Workday, which many growing companies found too cumbersome, and SMB-focused tools like BambooHR, which some felt lacked the depth required for complex global operations. Since its founding, HiBob has raised hundreds of millions of dollars in venture capital, including a notable Series D round that valued the company at over $2.4 billion. This financial backing reflects a broader market trend: the global HR software market is projected to reach approximately $40 billion by 2030, driven by the necessity of digital transformation in the workplace.

The platform’s design philosophy is rooted in the "Consumerization of IT," where business software is expected to be as intuitive and engaging as social media or personal apps. This is particularly relevant in the post-pandemic era, where "The Great Resignation" and the "Quiet Quitting" phenomenon forced companies to re-evaluate how they connect with staff. HiBob addresses this by moving the HR function from the "back office" to the center of the employee’s daily digital life.

Platform Architecture: Operations, Culture, and Strategy

HiBob categorizes its extensive feature set into three distinct pillars: Operations, Culture, and Strategy. This tripartite structure allows HR teams to manage the entire employee lifecycle while ensuring that administrative efficiency does not come at the expense of corporate community.

Operations: Streamlining the Administrative Core

At its most basic level, HiBob automates the repetitive tasks that traditionally consume HR resources. The platform is built around custom automation and workflow engines that speed up tasks such as contract signing, document storage, and permission management. These workflows are highly adjustable; a company can create different onboarding sequences for a software engineer in Berlin versus a sales executive in New York. Centralized document storage and built-in electronic signature support eliminate the need for separate third-party tools, creating a "single source of truth" for all employee data.
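To make the Berlin-versus-New-York example concrete, the following is a minimal sketch of how role- and location-specific onboarding sequences could be modeled. It is not HiBob's API or configuration format; the `Employee` class, the step names, and the `EXTRA_STEPS` table are all hypothetical and exist only to illustrate the idea of layering conditional steps on top of a shared base sequence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    name: str
    role: str
    location: str


# Hypothetical onboarding steps shared by every new hire.
BASE_STEPS = ["sign_contract", "upload_id_documents", "assign_permissions"]

# Hypothetical extra steps keyed by (role, location); None acts as a wildcard.
EXTRA_STEPS = {
    ("software_engineer", None): ["grant_repo_access"],
    ("sales_executive", None): ["grant_crm_access"],
    (None, "Berlin"): ["register_german_social_insurance"],
    (None, "New York"): ["complete_form_i9"],
}


def onboarding_steps(employee: Employee) -> list[str]:
    """Return the base steps plus any extras matching role or location."""
    steps = list(BASE_STEPS)
    for (role, location), extras in EXTRA_STEPS.items():
        role_matches = role is None or role == employee.role
        location_matches = location is None or location == employee.location
        if role_matches and location_matches:
            steps.extend(extras)
    return steps
```

Calling `onboarding_steps(Employee("Ada", "software_engineer", "Berlin"))` would return the three base steps followed by the engineer-specific and Berlin-specific ones, while a sales executive in New York would get a different tail.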

Culture: The Social-First Interface

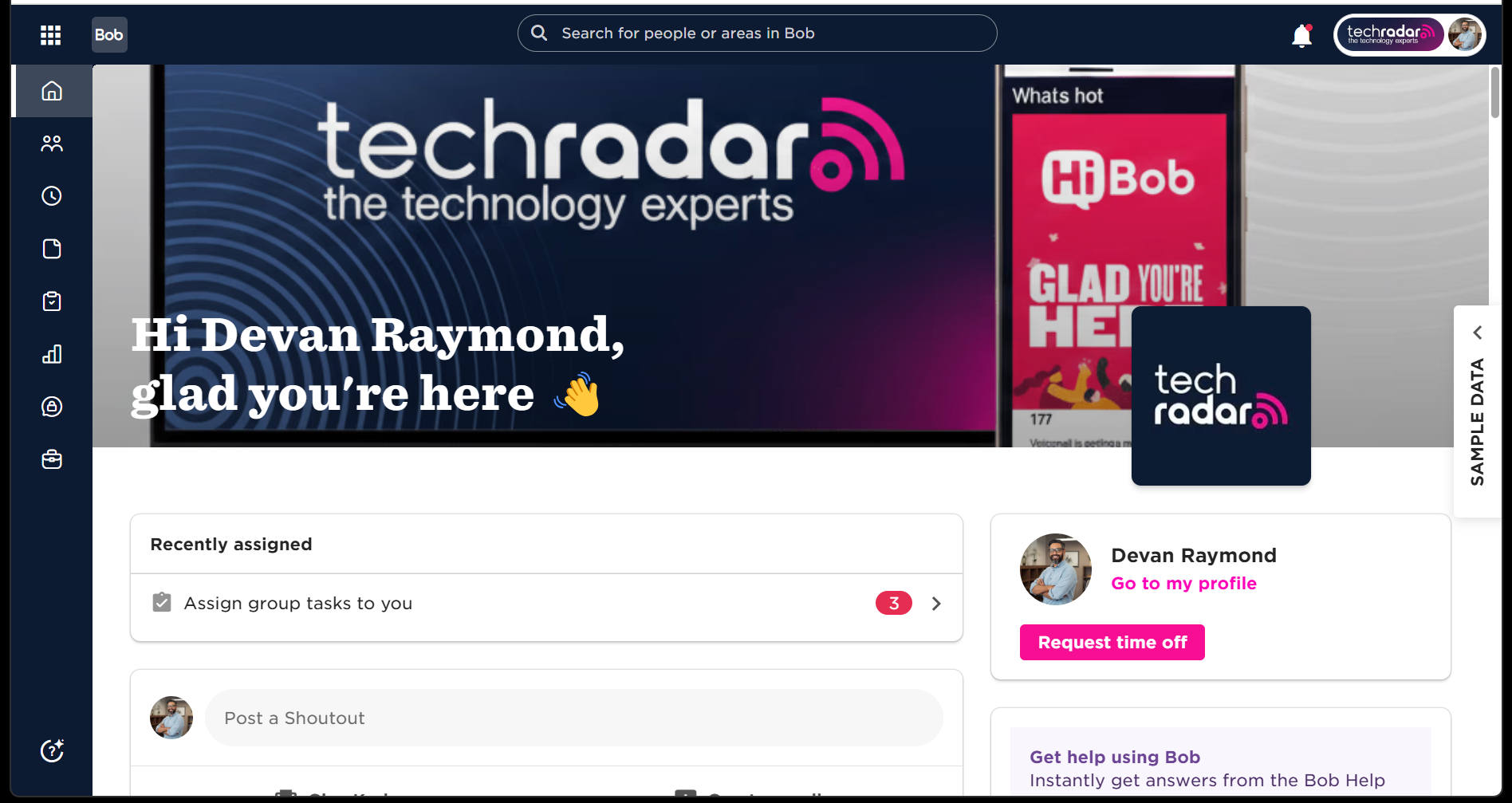

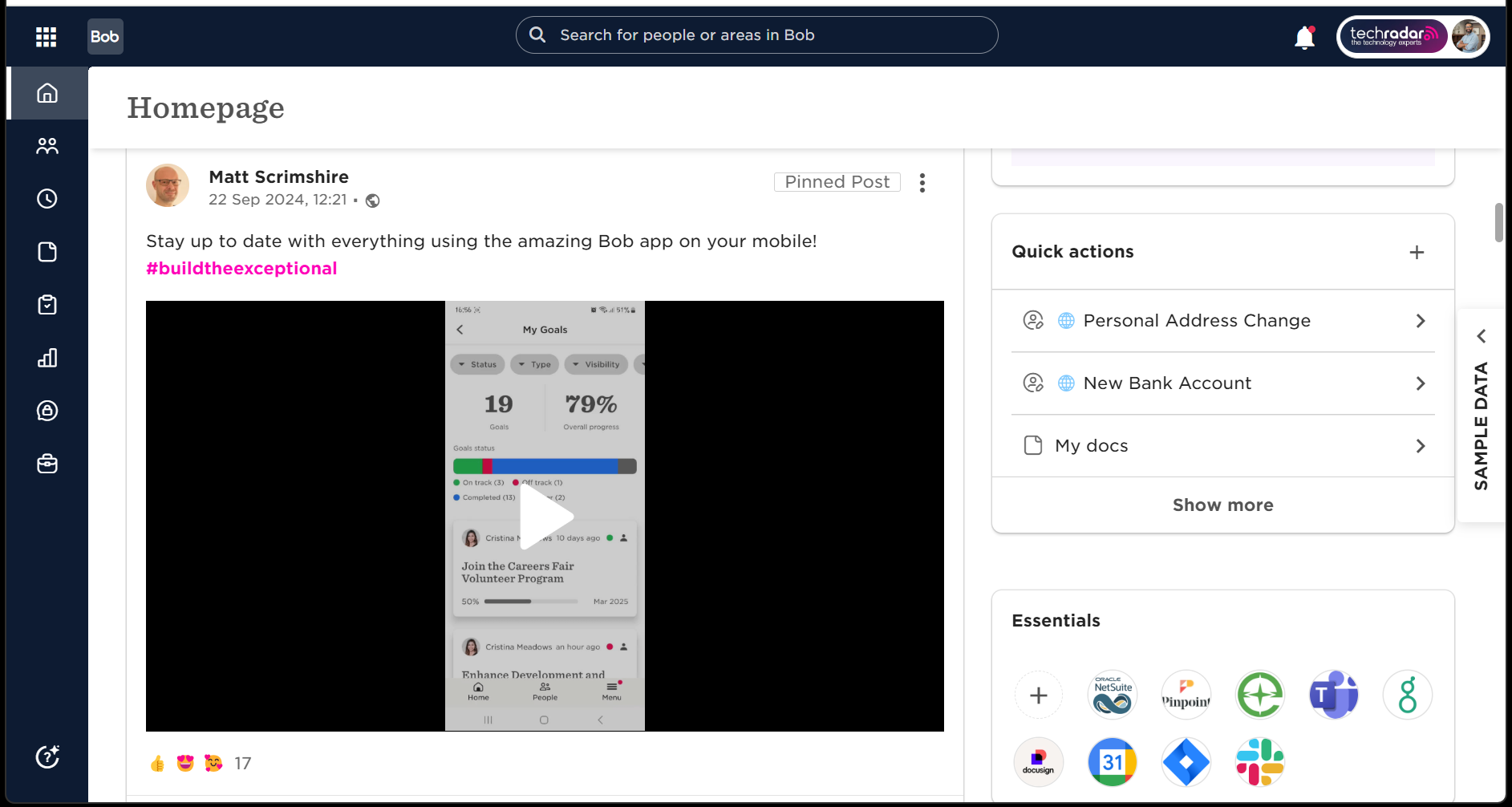

Perhaps the most striking feature of HiBob is its homepage, which functions similarly to a social media newsfeed. Instead of a sterile dashboard of forms and links, employees see updates on new hires, work anniversaries, birthdays, and "shout-outs" for good performance. This interface is designed to bridge the gap in hybrid work environments where spontaneous "water cooler" moments are rare. The visual organization chart further enhances this by allowing employees to see not just who reports to whom, but also personal details (as permitted) that help build rapport across departments.

Strategy: Data-Driven Decision Making

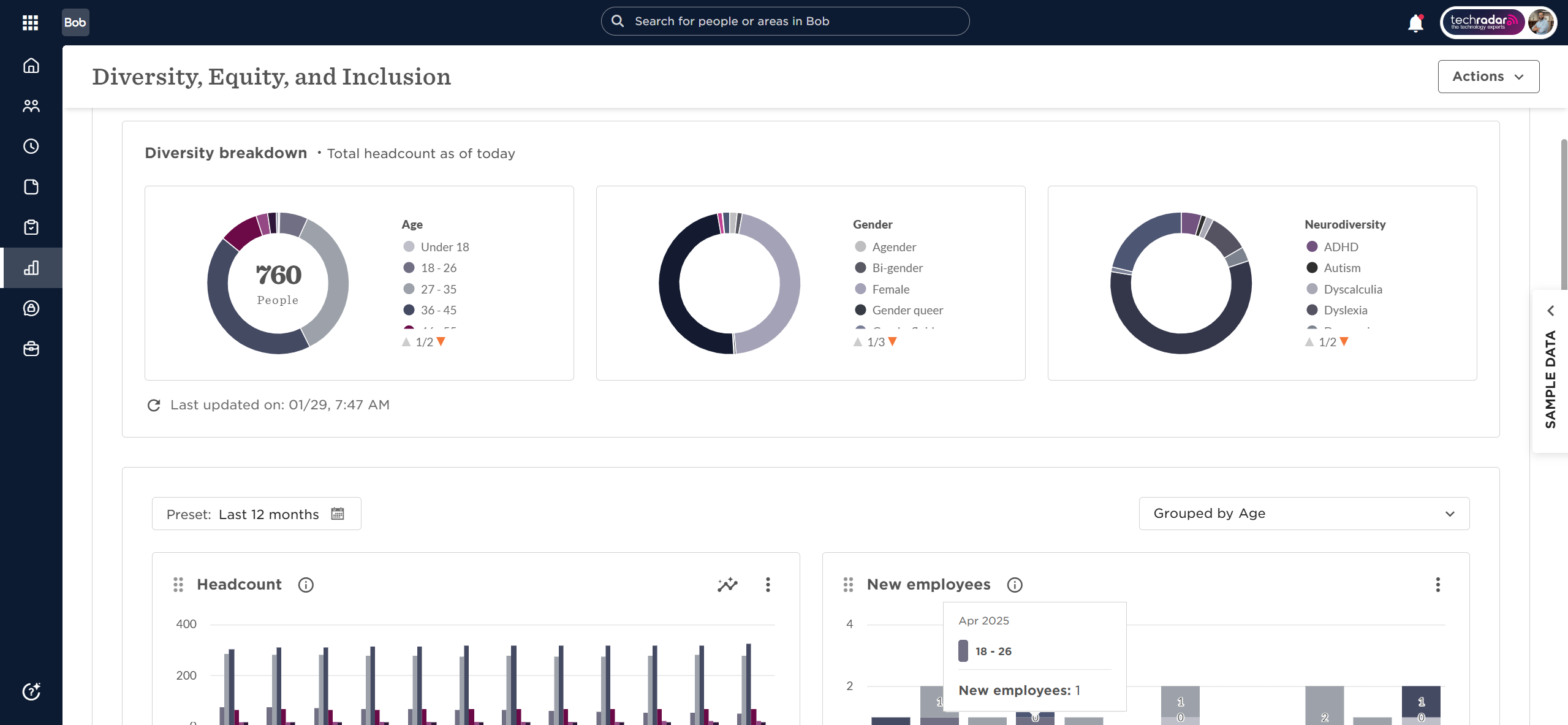

For leadership, HiBob offers sophisticated analytics that go beyond basic headcount. The platform includes 12 pre-configured dashboards, including a dedicated Diversity, Equity, and Inclusion (DEI) module. These tools allow executives to track attrition rates, gender pay gaps, and promotion cycles in real time. The data is presented through highly customizable visual charts that can be dragged, dropped, and exported for board-level presentations.
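For readers unfamiliar with the metrics behind such dashboards, here is a rough sketch of two of them using common textbook definitions. The review does not say how HiBob itself calculates these figures, so the formulas and the function names `attrition_rate` and `median_pay_gap` are assumptions for illustration only.

```python
from statistics import median


def attrition_rate(leavers: int, headcount_start: int, headcount_end: int) -> float:
    """Leavers divided by average headcount over the period, as a percentage."""
    average_headcount = (headcount_start + headcount_end) / 2
    return 100 * leavers / average_headcount


def median_pay_gap(pay_men: list[float], pay_women: list[float]) -> float:
    """Unadjusted median pay gap as a percentage of median pay for men."""
    median_men = median(pay_men)
    return 100 * (median_men - median(pay_women)) / median_men
```

Under these definitions, 12 leavers against a headcount that moved from 190 to 210 gives an attrition rate of 6%, and median salaries of 60,000 for men and 54,000 for women give a 10% gap. Other definitions (mean-based gaps, or gaps adjusted for role and seniority) will produce different numbers.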

Chronology of Feature Expansion and AI Integration

The development of HiBob has followed a steady trajectory of adding depth to its core offering. Over the last several years, the company has aggressively integrated Artificial Intelligence to keep pace with the broader tech industry.

- Early Years (2015-2018): Focus on the core HRIS, time tracking, and the "social feed" UI.

- Global Expansion (2019-2021): Introduction of multi-currency payroll hubs and compliance tools for various jurisdictions (US, UK, and Europe).

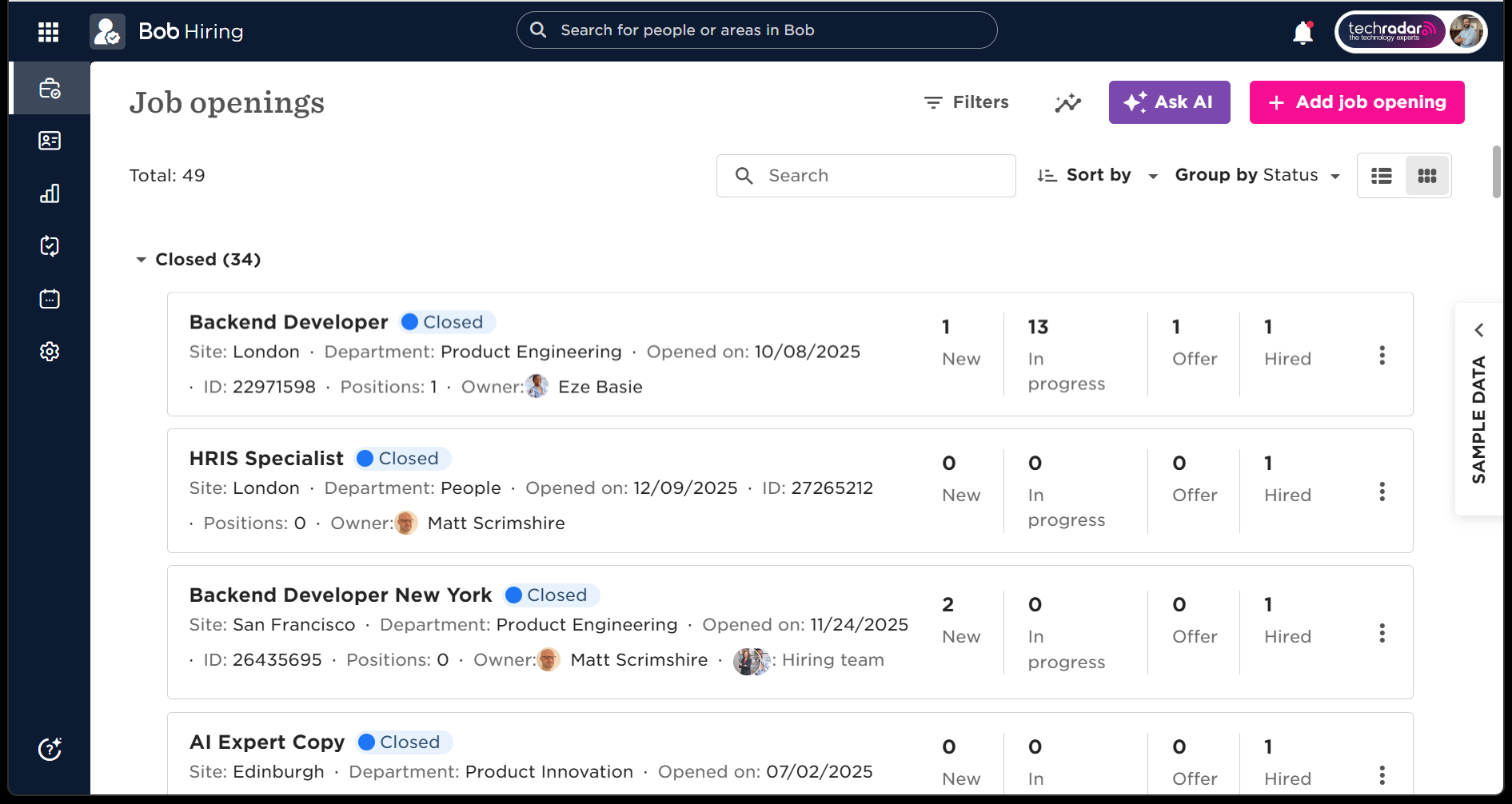

- The Talent Suite (2022): Expansion into recruitment and performance management, including 360-degree feedback and individual development plans.

- The AI Era (2023-Present): The launch of "Bob Companion" and AI-powered insights.

The current AI suite is more transparent than those of many competitors. HiBob allows administrators to toggle AI features on or off and provides clear guiding principles regarding data privacy. The AI assistant helps managers write job descriptions and performance reviews, while the "Bob Companion" tool, currently in beta, can analyze company policies to answer employee questions instantly, such as "What is the parental leave policy for the London office?"

Navigating the Modular Pricing and Implementation Model

One of the primary considerations for any business evaluating HiBob is its pricing structure. Unlike some entry-level HR software that offers flat-rate monthly subscriptions, HiBob utilizes a quote-based, modular pricing model. This means companies only pay for the features they need—such as the core HRIS, the payroll hub, or the performance module.

While this modularity offers flexibility for scaling businesses, it can present challenges for budget forecasting. As a company grows and requires more advanced features like compensation management or workforce planning, the total cost of ownership increases. Furthermore, the lack of public pricing can make the initial research phase more time-consuming for procurement departments.
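The forecasting challenge is easier to see with a back-of-the-envelope model. The sketch below assumes per-employee, per-month billing for each module; that billing basis is an assumption, and because HiBob does not publish rates, every price in `MODULE_PRICE_PER_EMPLOYEE` is an invented placeholder, not a quote.

```python
# Placeholder per-employee monthly prices. HiBob does not publish rates,
# so these numbers are invented purely for illustration.
MODULE_PRICE_PER_EMPLOYEE = {
    "core_hris": 10.0,
    "payroll_hub": 4.0,
    "performance": 3.0,
    "compensation": 3.0,
}


def annual_cost(headcount: int, modules: list[str]) -> float:
    """Annual cost for a given headcount and list of enabled modules."""
    monthly_per_employee = sum(MODULE_PRICE_PER_EMPLOYEE[m] for m in modules)
    return 12 * headcount * monthly_per_employee
```

With these placeholder figures, a 200-person company on the core module alone would pay 24,000 per year, while the same company at 400 people with payroll and performance added would pay 81,600. The point is the shape of the curve: cost scales with both headcount and module count, so growth compounds the bill.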

Implementation is another factor that requires careful planning. Because HiBob is highly configurable, setting up the system to mirror a company’s specific hierarchy and culture takes time. HiBob typically assigns a dedicated implementation manager to new clients to assist with data migration and system architecture. While this ensures a high-quality setup, it means that "going live" is not an overnight process; it is a strategic rollout that requires buy-in from multiple stakeholders.

Competitive Analysis: HiBob vs. The Market

In the mid-market HR space, HiBob faces stiff competition from several directions.

- Rippling: Often cited as HiBob’s closest rival, Rippling focuses heavily on the intersection of HR and IT. While Rippling excels at managing hardware and software provisioning, HiBob is generally considered to have a superior "culture" and "employee experience" interface.

- BambooHR: A favorite for smaller businesses, BambooHR is often viewed as more straightforward and easier to implement. However, as companies grow toward 500+ employees and go global, they often find BambooHR’s reporting and multi-national support lacking compared to HiBob.

- Workday/SAP: These enterprise giants offer unparalleled power and depth. However, they are often criticized for being difficult to use and requiring specialized consultants to maintain. HiBob positions itself as the "modern alternative" that provides enterprise-grade data without the enterprise-grade headache.

Supporting Data and Performance Metrics

The effectiveness of HiBob is often measured through its impact on employee engagement. Internal case studies and industry reports suggest that companies using "culture-first" HRIS platforms see a marked improvement in employee Net Promoter Scores (eNPS). For instance, organizations with high levels of social recognition—a core component of the HiBob feed—report 31% lower voluntary turnover rates than those without.
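For context, eNPS follows the standard Net Promoter calculation: respondents rate on a 0 to 10 scale how likely they are to recommend their employer, and the score is the percentage of promoters (9 or 10) minus the percentage of detractors (0 to 6). A minimal sketch, with the function name `enps` chosen here for illustration:

```python
def enps(scores: list[int]) -> float:
    """eNPS: percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    if not scores:
        raise ValueError("at least one survey response is required")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)
```

Ten responses of `[10, 9, 9, 8, 7, 6, 3, 10, 9, 5]` contain five promoters and three detractors, for a score of 20. Passives (7 and 8) count toward the total but toward neither group, which is why the score can range from -100 to 100.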

Additionally, the efficiency gains for HR departments are measurable. By automating the "onboarding-to-payroll" pipeline, companies can reduce the administrative time spent on new hires by up to 50%. The platform’s integration capabilities also play a role; with support for ADP, Xero, Slack, Microsoft Teams, and Google Workspace, HiBob acts as a central hub that reduces "app fatigue" for the workforce.

Broader Impact and Implications for the Future of Work

The rise of platforms like HiBob signals a permanent change in how corporations view their employees. The transition from "Human Resources" to "People Operations" reflects a shift in power where employees expect transparency, recognition, and a sense of belonging, even if they never set foot in a physical office.

As HiBob continues to refine its AI capabilities and global compliance tools, the implications for the broader industry are clear: the HRIS of the future must be more than a database. It must be a communication tool, a strategic advisor, and a cultural anchor. While the costs and implementation time may be higher than basic alternatives, the long-term value for a "people-first" business lies in its ability to retain talent in a hyper-competitive global market.

In conclusion, HiBob stands out as a premier choice for modern, mid-to-large-scale organizations. Its commitment to design, transparency in AI, and focus on the human element of "human resources" make it a formidable player in the tech landscape. For businesses that view their culture as a competitive advantage, HiBob is not just a utility; it is a strategic asset.