Python Decorators: Fortifying Machine Learning Systems for Production Reliability, Observability, and Efficiency

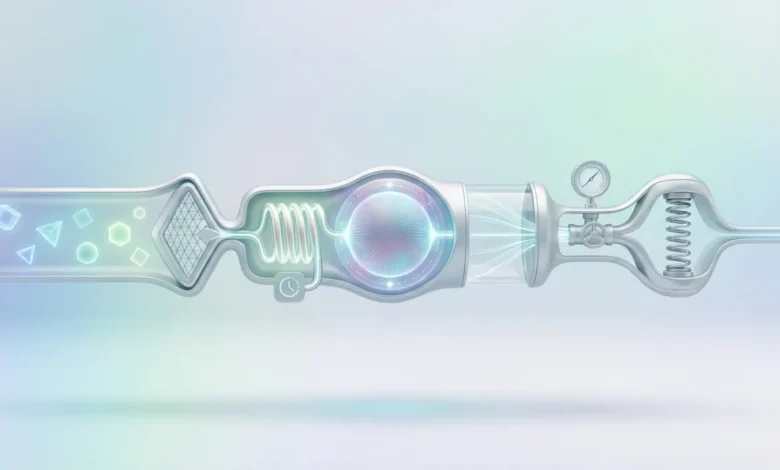

Deploying machine learning (ML) models to production raises challenges that rarely appear under the controlled conditions of development and experimentation. Even when the algorithms and their training are well understood, a live system must also run reliably, expose its behavior clearly, and use resources efficiently. Python decorators, often regarded as little more than syntactic sugar, are a practical way to address these concerns. This article explores five decorator patterns that improve the resilience and manageability of ML systems in production: automatic retry with exponential backoff, input validation and schema enforcement, result caching with time-to-live, memory-aware execution, and execution logging and monitoring.

The Production ML Landscape: Beyond the Notebook

In the realm of machine learning engineering, moving a model from a Jupyter notebook to a live service introduces a cascade of complexities. The seamless execution of code within a development environment often masks the inherent fragility of systems interacting with external dependencies, fluctuating data streams, and finite computational resources. Production ML systems are not static entities; they are dynamic, constantly interacting with a world that is far less predictable than a curated dataset.

Consider the typical lifecycle of a production ML service. A request arrives, triggering a chain of operations: data retrieval from an external API, feature engineering, model inference, and finally, the return of a prediction. Each step in this chain represents a potential point of failure. An API might be temporarily unavailable due to network issues or server maintenance. Data sources could experience downtime, leading to missing values or incorrect formats. The sheer volume of data being processed, especially with large tensor operations, can strain memory resources, leading to service crashes. Furthermore, the performance of the model itself can degrade over time as the underlying data distribution drifts, a phenomenon known as data drift.

Traditional error handling, such as verbose try-except blocks scattered throughout the codebase, can quickly render a system unmanageable. This spaghetti-like error management not only obfuscates the core logic but also makes it incredibly difficult to implement consistent and sophisticated resilience strategies. This is where the strategic application of Python decorators offers a paradigm shift. By abstracting away these operational concerns into reusable, declarative units, decorators allow ML engineers to maintain a clear focus on the model’s core functionality while embedding critical production-grade robustness.

1. Ensuring Continuity: Automatic Retry with Exponential Backoff

Production machine learning pipelines are intrinsically linked to external services. Whether it’s querying a model inference endpoint, fetching embeddings from a vector database, or retrieving real-time features from a data store, these interactions are susceptible to transient failures. Network disruptions, service throttling, and even the "cold start" latency of serverless functions can cause requests to fail. Implementing a manual retry mechanism for every external call can lead to a significant increase in code complexity and a decrease in readability.

The @retry decorator provides an elegant solution to this pervasive problem. This pattern allows developers to define retry policies directly on the function that performs the external call. It supports configurable parameters such as max_retries, the maximum number of retries after the initial attempt, and backoff_factor, the base delay that is doubled after each failed attempt. This exponential backoff is vital: it avoids overwhelming a struggling service with repeated, immediate requests. Instead, the delay between attempts grows with each failure, giving the service time to recover.

For instance, a function that fetches user embeddings from an external API might be decorated with @retry(max_retries=3, backoff_factor=0.5, exceptions=(requests.exceptions.Timeout, requests.exceptions.ConnectionError)). Both exception classes come from the requests library; Python's built-in ConnectionError is a different class and would not match connection failures raised by requests. If the initial call times out or fails with a connection error, the decorator waits for a calculated delay (0.5 seconds, then 1 second, then 2 seconds) before each new attempt, for up to three retries. If every retry fails, the last exception is re-raised so that higher-level error handling can take over.
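The retry policy described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `sleep` parameter is a hypothetical hook added so the delays can be observed in tests, and `fetch_user_embedding` is a placeholder. The example uses Python's built-in exceptions so that it runs without third-party packages; with requests, its own exception classes would be passed instead.

```python
import functools
import time


def retry(max_retries=3, backoff_factor=0.5, exceptions=(Exception,), sleep=time.sleep):
    """Retry the wrapped function on the listed exceptions with exponential backoff.

    `sleep` is an assumption of this sketch: it lets callers swap in a fake
    sleeper for testing instead of really waiting.
    """

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # One initial attempt plus up to `max_retries` retries.
            for attempt in range(max_retries + 1):
                try:
                    return func(*args, **kwargs)
                except exceptions:
                    if attempt == max_retries:
                        raise  # retries exhausted: surface the last error
                    # The delay doubles after each failure:
                    # 0.5s, 1s, 2s for backoff_factor=0.5.
                    sleep(backoff_factor * (2 ** attempt))

        return wrapper

    return decorator


@retry(max_retries=3, backoff_factor=0.5, exceptions=(TimeoutError, ConnectionError))
def fetch_user_embedding(user_id):
    # Placeholder for a real network call (hypothetical function).
    return [0.1, 0.2, 0.3]
```

Exceptions that are not listed in `exceptions` propagate immediately, which keeps programming errors from being retried. Production variants often add random jitter to the delay so that many clients do not retry in lockstep.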

This decorator centralizes resilience logic, keeping the core function clean and focused on its task. Because the policy is declared per function, retry behavior can be tuned for each external dependency. Where occasional API timeouts are expected, such as during peak traffic or scheduled maintenance of a dependent service, the decorator can turn a potentially disruptive failure into a recovery that the end user notices only as added latency. Fewer "noisy alerts" from transient issues also frees engineering time for harder problems.

2. Safeguarding Against Data Inconsistencies: Input Validation and Schema Enforcement

Data quality is a foundational pillar of reliable machine learning systems. Models are meticulously trained on data exhibiting specific characteristics: expected data types, defined ranges, and predictable shapes. In production, however, the integrity of this data can be compromised by upstream changes in data pipelines, sensor malfunctions, or errors in data ingestion processes. The introduction of null values, incorrect data types, or unexpected data structures can lead to silent failures, where the system continues to operate but produces erroneous or nonsensical predictions. The detection of such issues can often be delayed, meaning the system may have been serving flawed outputs for an extended period.

A @validate_input decorator acts as a crucial gatekeeper, intercepting function arguments before they reach the core ML logic. This decorator can be designed to perform a variety of checks. For example, it might verify that a NumPy array passed as an input feature has the expected dimensions (shape) and data type. It can ensure that critical keys are present in a dictionary representing input parameters, or that numerical values fall within predefined acceptable ranges. When validation fails, the decorator can be configured to raise a clear, informative exception or, in some cases, return a safe default response, preventing corrupted data from propagating through the system.

The integration with libraries like Pydantic significantly enhances the sophistication of this pattern. Pydantic allows for the definition of data models with rich type hinting and validation rules, which can then be leveraged by a decorator to automatically validate incoming data against these schemas. However, even a simpler implementation focused on essential checks, such as array shapes and data types, can prevent a substantial portion of common production data-related failures. This proactive approach shifts the focus from reactive debugging of incorrect predictions to preventative measures that ensure data integrity at the point of entry. This is particularly critical in time-series forecasting or anomaly detection systems where even minor deviations in input can have significant downstream consequences.
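As a rough illustration of the simpler end of this spectrum, the sketch below checks a dictionary payload for required keys and numeric ranges before the decorated function runs. The parameter names `required_keys` and `ranges`, the function `predict_churn`, and its field names are all hypothetical; a real service would more likely validate array shapes and dtypes or delegate to a schema library.

```python
import functools


def validate_input(required_keys, ranges=None):
    """Validate the first positional argument, assumed to be a dict payload.

    `ranges` maps a key to an inclusive (low, high) pair. Both parameter
    names are assumptions of this sketch.
    """
    ranges = ranges or {}

    def decorator(func):
        @functools.wraps(func)
        def wrapper(payload, *args, **kwargs):
            if not isinstance(payload, dict):
                raise TypeError(f"expected dict, got {type(payload).__name__}")
            missing = [key for key in required_keys if key not in payload]
            if missing:
                raise ValueError(f"missing required keys: {missing}")
            for key, (low, high) in ranges.items():
                if key not in payload:
                    continue  # optional field that was not supplied
                value = payload[key]
                # bool is a subclass of int, so reject it explicitly.
                if isinstance(value, bool) or not isinstance(value, (int, float)):
                    raise TypeError(f"{key!r} must be numeric, got {type(value).__name__}")
                # NaN fails this comparison and is rejected as out of range.
                if not low <= value <= high:
                    raise ValueError(f"{key!r}={value} is outside [{low}, {high}]")
            return func(payload, *args, **kwargs)

        return wrapper

    return decorator


@validate_input(required_keys=("age", "income"), ranges={"age": (0, 120)})
def predict_churn(payload):
    return 0.5  # placeholder for a real model call (hypothetical)
```

Raising distinct exception types for wrong types and out-of-range values lets callers decide whether to reject the request outright or fall back to a safe default response.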

3. Optimizing Resource Utilization: Result Caching with Time-to-Live (TTL)

In many real-time ML applications, particularly those involving recommendation systems, user profiling, or dynamic content generation, the same inputs are frequently encountered. A user might repeatedly request recommendations within a short session, or a batch processing job might re-evaluate overlapping feature sets. Performing the inference process redundantly for identical inputs is a waste of valuable computational resources and introduces unnecessary latency, negatively impacting user experience and system throughput.

A @cache_result decorator, equipped with a Time-to-Live (TTL) parameter, offers an efficient solution by storing function outputs and serving them from a cache when the same inputs are encountered again. Internally, this decorator typically maintains a dictionary where function arguments are hashed to create keys, and the corresponding values are tuples containing the function’s result and a timestamp of when it was computed.

Before executing the decorated function, the wrapper first checks if a valid cached result exists for the given inputs. If the cached entry’s timestamp falls within the defined TTL window, the cached result is returned immediately, bypassing the computation. If the entry is stale (i.e., outside the TTL window) or does not exist, the function is executed, its result is stored in the cache with the current timestamp, and then the result is returned.
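The lookup flow described above might look like the following sketch. It assumes all arguments are hashable and keeps the cache in process memory with no size limit; the `clock` parameter is a hypothetical hook that makes expiry testable, and `recommend` is a placeholder.

```python
import functools
import time


def cache_result(ttl_seconds, clock=time.monotonic):
    """Cache results per argument set and expire entries after `ttl_seconds`.

    `clock` is an assumption of this sketch so that tests can control time.
    """

    def decorator(func):
        cache = {}  # key -> (result, time the result was computed)

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Arguments must be hashable to serve as a dictionary key.
            key = (args, tuple(sorted(kwargs.items())))
            now = clock()
            entry = cache.get(key)
            if entry is not None and now - entry[1] < ttl_seconds:
                return entry[0]  # fresh hit: skip the computation
            result = func(*args, **kwargs)
            cache[key] = (result, now)  # store or refresh the entry
            return result

        return wrapper

    return decorator


@cache_result(ttl_seconds=30)
def recommend(user_id):
    # Placeholder for an expensive inference call (hypothetical).
    return [f"item-for-{user_id}"]
```

Stale entries are overwritten only when the same key is requested again, so a long-running service would also need eviction or a size cap. Sharing the dictionary across threads would call for a lock as well.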

The TTL component is what makes this caching strategy production-ready. Predictions in ML systems are not always static. They can become outdated as underlying data changes or as the context of the prediction evolves. A fixed cache without an expiration policy would serve stale information, potentially misleading users or downstream systems. The TTL ensures that cached results are refreshed periodically, striking a balance between performance gains and data freshness. For instance, a recommendation engine might use a TTL of 30 seconds for user-specific recommendations. This ensures that while repeat requests within that timeframe are served instantly, the system can still react to recent user interactions or changes in product availability. This significantly reduces redundant computations, lowers inference latency, and conserves processing power, especially under heavy load.

4. Preventing System Crashes: Memory-Aware Execution

The computational demands of modern machine learning models, particularly deep neural networks, can be substantial. Large models often require significant amounts of memory (RAM) to load parameters and perform computations. When multiple models are deployed on the same infrastructure, or when processing large batches of data, it is easy to exceed the available memory resources. Such memory exhaustion can lead to application crashes, service outages, and cascading failures across an infrastructure. These failures are often intermittent and difficult to reproduce, as they depend on the complex interplay of workload variability and the timing of the system’s garbage collection.

A @memory_guard decorator provides a proactive mechanism to monitor and manage memory usage. Such a decorator, often built on a library like psutil to query system resource utilization, checks memory usage before executing a computationally intensive function and compares it against a configurable threshold, such as 85% of total RAM.

If memory usage is approaching or has breached the threshold, the decorator can trigger a range of actions. It might run an explicit garbage collection cycle using gc.collect() to free unreferenced objects. Alternatively, it can log a warning to alert operators, introduce a brief delay to allow memory usage to stabilize, or raise a custom exception. The calling code or a job scheduler can catch this exception and defer, shrink, or reject the task. An orchestrator such as Kubernetes does not see Python exceptions directly; it reacts to failed health checks or to the process exiting, and can then restart or reschedule the workload.
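One possible shape for such a guard is sketched below. It checks usage, tries a garbage collection pass if the threshold is reached, and raises if usage is still too high. The exception name `MemoryLimitExceeded` and the `read_percent` parameter are assumptions made for illustration; the default reader relies on psutil's `virtual_memory().percent` and imports the library lazily so the sketch loads even where psutil is not installed.

```python
import functools
import gc


class MemoryLimitExceeded(RuntimeError):
    """Raised when memory usage stays at or above the threshold (hypothetical name)."""


def _system_memory_percent():
    import psutil  # third-party; imported lazily so the module loads without it

    return psutil.virtual_memory().percent


def memory_guard(threshold_percent=85.0, read_percent=_system_memory_percent):
    """Refuse to run the wrapped function while memory usage is too high.

    `read_percent` is an assumption of this sketch: any callable returning
    the current usage as a percentage can be supplied.
    """

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            if read_percent() >= threshold_percent:
                gc.collect()  # first try to reclaim unreachable objects
                used = read_percent()
                if used >= threshold_percent:
                    raise MemoryLimitExceeded(
                        f"memory usage {used:.1f}% is at or above "
                        f"the {threshold_percent:.1f}% threshold"
                    )
            return func(*args, **kwargs)

        return wrapper

    return decorator
```

Applied as `@memory_guard(threshold_percent=85.0)` on a batch inference function, the guard adds one cheap reading per call on the normal path and only pays for garbage collection when usage is already high.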

This decorator is particularly valuable in containerized environments like Kubernetes, which enforce strict memory limits on containers. A container that exceeds its limit is typically killed by the kernel's out-of-memory handling, which Kubernetes reports as OOMKilled. A memory guard gives the application a chance to manage its footprint first, potentially avoiding a hard kill and allowing a controlled degradation of service. Note that system-wide readings may reflect the host rather than the container's limit, so the threshold should be checked against the limit that actually applies. For batch processing jobs that load large datasets or models, the guard can prevent the entire job from failing due to transient memory spikes.

5. Illuminating System Behavior: Execution Logging and Monitoring

Observability is paramount for understanding and debugging complex production systems, and ML systems are no exception. Beyond standard application metrics like request latency and error rates, ML systems require deeper insights into aspects such as inference times for individual predictions, the characteristics of input data that lead to anomalies, shifts in prediction distributions, and performance bottlenecks within the ML pipeline. While ad hoc logging can be implemented, it often lacks structure, consistency, and the ability to be easily queried and analyzed as the system scales.

A @monitor decorator offers a standardized approach to instrumenting ML functions with comprehensive, structured logging and metrics collection. This decorator wraps functions with automatic capture of key operational data. This includes precise timestamps for execution start and end, summaries of input data (e.g., shape, data types, statistical summaries), characteristics of the output predictions, and detailed exception information if an error occurs.

This decorator can integrate seamlessly with various observability tools and frameworks. It can push structured logs to centralized logging systems, emit metrics to time-series databases like Prometheus for aggregation and visualization, or send detailed traces to distributed tracing platforms like Jaeger or Datadog. By automatically capturing execution times, logging exceptions before they propagate, and optionally pushing performance metrics, the @monitor decorator creates a unified, searchable record of the ML system’s behavior.
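A minimal version of this instrumentation can be built on the standard logging module alone, as sketched below. The logger name `ml.monitor` and the extra field names (`ml_function`, `status`, `duration_ms`) are assumptions chosen for illustration; exporting to a metrics or tracing backend is left out.

```python
import functools
import logging
import time

logger = logging.getLogger("ml.monitor")  # hypothetical logger name


def monitor(func):
    """Log duration and outcome of each call as structured record fields."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
        except Exception:
            duration_ms = (time.perf_counter() - start) * 1000
            # logger.exception attaches the traceback to the log record.
            logger.exception(
                "call failed",
                extra={
                    "ml_function": func.__qualname__,
                    "status": "error",
                    "duration_ms": duration_ms,
                },
            )
            raise  # log first, then let the error propagate unchanged
        duration_ms = (time.perf_counter() - start) * 1000
        logger.info(
            "call succeeded",
            extra={
                "ml_function": func.__qualname__,
                "status": "ok",
                "duration_ms": duration_ms,
            },
        )
        return result

    return wrapper
```

Fields passed through `extra` become attributes on the log record, so a JSON formatter or log shipper can index them without parsing message strings. Input and output summaries could be added the same way, provided they are cheap to compute and contain no sensitive data.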

The true power of this pattern is realized when it is applied consistently across all critical components of the inference pipeline. This provides ML engineers with a holistic view of system performance. When issues arise, such as a sudden increase in prediction latency or a shift in the distribution of predicted values, engineers have immediate access to actionable context. They can pinpoint the specific function responsible, examine the inputs that triggered the behavior, and understand the execution path, significantly accelerating the diagnostic process and reducing the mean time to resolution (MTTR). This level of granular visibility is essential for maintaining high-performing and reliable ML services in production.

Conclusion: Elevating Production ML with Declarative Resilience

The five decorator patterns explored—automatic retry with exponential backoff, input validation and schema enforcement, result caching with TTL, memory-aware execution, and execution logging and monitoring—collectively embody a powerful philosophy for production ML engineering. This philosophy advocates for keeping the core machine learning logic clean, focused, and readable, while externalizing operational concerns such as resilience, data integrity, performance optimization, and observability to the edges of the system through declarative constructs.

Python decorators provide a natural and elegant separation of concerns, significantly improving the readability, testability, and maintainability of production ML codebases. By abstracting away the boilerplate code associated with these critical operational aspects, engineers can dedicate more time and cognitive effort to the nuances of model development and performance tuning.

The adoption of these patterns can be a phased approach. Teams can begin by implementing the decorator that addresses their most pressing production challenge. For many organizations, this might be the need for robust retry mechanisms to handle unreliable external APIs or comprehensive monitoring to gain visibility into system behavior. Once the clarity and operational benefits of these patterns are experienced, they naturally become standard tools in the ML engineer’s toolkit for handling the complexities of production environments. By embracing these decorator-driven strategies, organizations can build machine learning systems that are not only intelligent but also robust, observable, and efficient, ensuring their successful and sustainable deployment in the demanding landscape of production.