Vercel Security Breach Traced to Infostealer Compromise of Third-Party AI Tool Context.ai

Web infrastructure and cloud platform provider Vercel has disclosed a significant security breach in which unauthorized actors gained access to several of its internal systems. The incident, which came to light on April 20, 2026, has been linked to the compromise of Context.ai, a third-party artificial intelligence tool used by a Vercel employee. The breach highlights the growing risks of rapidly integrating third-party AI services into enterprise workflows and the persistent threat that infostealer malware poses to corporate personnel.

According to a security bulletin released by Vercel, the threat actor used a compromised OAuth token to take over a Vercel employee’s Google Workspace account. That access served as a gateway, allowing the attacker to reach various Vercel environments and read environment variables. While the company emphasized that environment variables marked as "sensitive" remained protected through encryption, non-sensitive variables were exposed. The breach has prompted an extensive forensic investigation, involving Google-owned Mandiant and other cybersecurity firms, to determine the full extent of the data exfiltration.

The Genesis of the Attack: A Supply Chain Escalation

The root cause of the breach has been traced back to a series of security failures beginning in early 2026. Cybersecurity research firm Hudson Rock published a detailed report identifying that the initial point of infection occurred in February 2026. A staff member at Context.ai, a provider of AI-driven analytics and office suites, fell victim to the Lumma Stealer malware. Lumma Stealer is a sophisticated "infostealer" designed to harvest credentials, browser cookies, and session tokens from infected machines.

Evidence suggests the infection resulted from a common social engineering lure. The affected Context.ai employee was reportedly searching for and downloading gaming exploits, specifically "auto-farm" scripts and executors for the popular gaming platform Roblox, files that are frequently used as delivery vehicles for malware. Once Lumma Stealer ran on the employee’s device, it harvested a trove of corporate credentials.

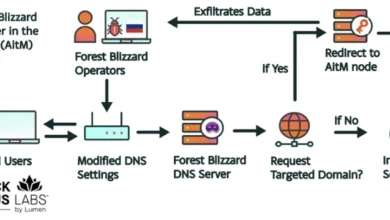

Among the stolen data were login credentials for a corporate email account, as well as access keys for critical infrastructure tools including Supabase, Datadog, and Authkit. This initial compromise at Context.ai created a "supply chain escalation" path: because Vercel employees used Context.ai’s "AI Office Suite," the attackers were able to pivot from the compromised third-party provider into Vercel’s internal Google Workspace environment.

Chronology of the Security Incident

The timeline of the breach reveals a multi-month operation carried out by an attacker with a deep understanding of cloud infrastructure.

February 2026: An employee at Context.ai is infected with Lumma Stealer after downloading malicious files disguised as Roblox game exploits. The malware exfiltrates corporate credentials and session tokens to a command-and-control server.

March 2026: Context.ai identifies and blocks unauthorized access to its Amazon Web Services (AWS) environment. At the time, the company issues a security update but does not yet realize the full extent of the OAuth token compromise affecting its users.

Late March to Early April 2026: The threat actor uses a stolen OAuth token from the Context.ai breach to target Vercel. Because a Vercel employee had previously signed up for Context.ai using their enterprise Google account and granted "Allow All" permissions, the attacker is able to bypass standard authentication hurdles.

April 15–19, 2026: The attacker moves laterally within Vercel’s Google Workspace and accesses internal environments. They target environment variables and search for credentials that could lead to further infrastructure access.

April 20, 2026: Vercel publicly discloses the breach, begins notifying affected customers, and engages Mandiant for a full-scale forensic audit. Simultaneously, the threat actor known as "ShinyHunters" claims responsibility for the attack on underground forums.

The Role of ShinyHunters and Data Exfiltration

As Vercel worked to contain the breach, a prominent threat actor using the "ShinyHunters" persona emerged to claim credit for the intrusion. ShinyHunters has a long history of high-profile data breaches, having previously targeted major corporations across the retail, tech, and finance sectors. The actor claimed to have exfiltrated a massive cache of data from Vercel’s internal systems and posted the database for sale on a dark web marketplace with an asking price of $2 million.

While Vercel has not officially confirmed the validity of the ShinyHunters claim or the specific volume of data stolen, the company admitted that a "limited subset" of customers had their credentials compromised. Vercel has been proactive in reaching out to these individuals, mandating immediate credential rotations and providing guidance on securing their respective deployments.

The distinction between "sensitive" and "non-sensitive" environment variables has become a focal point of the investigation. In modern cloud architecture, environment variables are used to store configuration settings, such as API keys, database connection strings, and service URLs. Vercel’s security model encrypts variables designated as sensitive, meaning that even with system-level access, an attacker cannot read the plaintext values. However, variables not marked as sensitive—which might still contain valuable architectural information or lower-level access keys—were accessible to the intruder.

Official Responses and Remediation Efforts

In the wake of the disclosure, Vercel CEO Guillermo Rauch took to social media to reassure the developer community and outline the company’s response. Rauch emphasized that Vercel’s core open-source projects, including Next.js and Turbopack, remain unaffected and safe for use.

"We’ve deployed extensive protection measures and monitoring. We’ve analyzed our supply chain, ensuring Next.js, Turbopack, and our many open source projects remain safe for our community," Rauch stated. He further noted that the company has already implemented UI improvements to help customers better manage their environment variables, making it easier to identify and protect sensitive data.

Context.ai also released a statement clarifying the mechanics of the OAuth compromise. The company noted that Vercel was not a direct corporate customer; rather, individual Vercel employees had used their enterprise accounts to access the AI suite. Context.ai acknowledged that its internal OAuth configuration, combined with the wide-ranging access the user had granted, gave the attacker "broad permissions" within Vercel’s Google Workspace.

To mitigate further risk, Vercel has advised all Google Workspace administrators to audit their environments for a specific OAuth application ID (110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com) and revoke its access immediately.
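As a minimal sketch of that audit, the Python below scans a list of OAuth grants for the client ID named in Vercel’s advisory. It assumes an administrator has already exported per-user grants into dictionaries whose field names (`userKey`, `clientId`, `displayText`, `scopes`) are modeled on the Google Admin SDK Directory API’s token resource; the export step and the helper `find_grants` are hypothetical, and the script only reports matches rather than revoking anything.

```python
from typing import Iterable

# Client ID published in Vercel's advisory.
FLAGGED_CLIENT_ID = (
    "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"
)


def find_grants(tokens: Iterable[dict], client_id: str = FLAGGED_CLIENT_ID) -> list[dict]:
    """Return every grant whose clientId matches the flagged OAuth app.

    `tokens` is assumed to be a pre-exported list of dicts with the
    hypothetical keys "userKey", "clientId", "displayText" and "scopes".
    """
    return [t for t in tokens if t.get("clientId") == client_id]


if __name__ == "__main__":
    # Hypothetical exported grants for two users.
    exported = [
        {"userKey": "alice@example.com", "clientId": FLAGGED_CLIENT_ID,
         "displayText": "Example AI tool",
         "scopes": ["https://www.googleapis.com/auth/drive"]},
        {"userKey": "bob@example.com", "clientId": "other.apps.googleusercontent.com",
         "displayText": "Calendar helper",
         "scopes": ["https://www.googleapis.com/auth/calendar.readonly"]},
    ]
    for grant in find_grants(exported):
        print(f"Revoke {grant['displayText']} for {grant['userKey']}")
```

Matching on the client ID rather than the app’s display name matters because a display name can be changed or imitated, while the ID is what Vercel published.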

Broader Implications for the Tech Industry

The Vercel-Context.ai incident serves as a stark reminder of the "shadow AI" problem—where employees sign up for third-party AI tools using corporate credentials without formal IT oversight. When these tools require broad OAuth permissions (such as "Allow All"), they create a direct tunnel from the third-party’s security environment into the heart of the enterprise.

Industry analysts suggest that this breach highlights three critical vulnerabilities in modern tech stacks:

- The Infostealer Menace: Traditional antivirus and endpoint detection often struggle to catch infostealers like Lumma, which are frequently updated to evade signatures. That one employee’s personal browsing habits (searching for game exploits) could cascade into a breach at another company, with the stolen data advertised for $2 million, underscores the need for stricter endpoint isolation.

- OAuth Permission Overreach: Many AI startups request expansive permissions to provide a "seamless" user experience. Security professionals are now calling for "least privilege" models for OAuth, where applications are only granted access to the specific data they need to function.

- Third-Party AI Risk: As companies race to integrate AI, the security posture of AI vendors often lags behind. A breach at a small AI firm can have a "force multiplier" effect, potentially affecting many larger downstream companies that use its services.
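The least-privilege idea can be illustrated by comparing the scopes an application requests against the scopes it actually needs. In this sketch the `BROAD_SCOPES` grouping and the `review_scopes` helper are assumptions for illustration, not an official Google classification; the scope URLs themselves are standard Google OAuth scopes. The example data is hypothetical and does not describe what Context.ai actually requested.

```python
# Illustrative, non-exhaustive set of Google OAuth scopes that grant wide
# access (full mailbox, full Drive, user directory administration).
# Treating exactly these as "broad" is an assumption for this example.
BROAD_SCOPES = {
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/admin.directory.user",
}


def review_scopes(requested: set[str], needed: set[str]) -> dict[str, set[str]]:
    """Split requested scopes into justified, excess, and high-risk excess.

    `needed` is the minimal set a reviewer believes the app requires
    (a hypothetical input). Anything requested beyond it is excess, and
    excess scopes that also appear in BROAD_SCOPES are flagged high risk.
    """
    excess = requested - needed
    return {
        "approved": requested & needed,
        "excess": excess,
        "high_risk": excess & BROAD_SCOPES,
    }


if __name__ == "__main__":
    # Hypothetical app asking for full Drive when per-file access would do.
    requested = {
        "https://www.googleapis.com/auth/drive",
        "https://www.googleapis.com/auth/calendar.readonly",
    }
    needed = {
        "https://www.googleapis.com/auth/drive.file",
        "https://www.googleapis.com/auth/calendar.readonly",
    }
    result = review_scopes(requested, needed)
    print(sorted(result["high_risk"]))
```

Flagging only the excess scopes that are also broad keeps the review focused: a request for full Drive access where `drive.file` would suffice is the kind of overreach the least-privilege model is meant to catch.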

Technical Recommendations for Organizations

In response to the incident, cybersecurity experts and Vercel have recommended a series of best practices to prevent similar supply-chain escalations:

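- Mandatory Hardware Security Keys: Organizations should move away from SMS or app-based 2FA in favor of hardware keys (like YubiKeys) for all employees, especially those with access to production environments. Phishing-resistant keys make stolen passwords and phished one-time codes far less useful, although they do not by themselves stop an attacker from replaying session cookies or OAuth tokens that an infostealer steals after the user has already logged in.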

- OAuth Scrutiny: Administrators should implement policies that require manual approval for any third-party application requesting broad access to Google Workspace or Microsoft 365 environments.

- Environment Variable Hygiene: Developers are urged to audit their cloud configurations and ensure that every API key, secret, and token is explicitly marked as "sensitive," so that its plaintext value cannot be read back even by someone with access to the project.

- Employee Education: The link between the Roblox exploits and the Vercel breach highlights the necessity of ongoing security awareness training that addresses the risks of downloading unverified software on work-related devices.
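The environment variable audit can be sketched as a simple name-based check. It assumes the variables have already been exported into records carrying a hypothetical `sensitive` flag (how that export works depends on the platform and is not shown), and the regular expression is a rough heuristic that will miss secrets with innocuous names. Anything it flags was readable while unmarked, so the sketch recommends rotating the value as well as marking it sensitive.

```python
import re
from dataclasses import dataclass

# Name fragments that commonly indicate a credential. This heuristic is an
# assumption for illustration; it will miss secrets with bland names and
# may flag harmless ones.
SECRET_HINT = re.compile(
    r"(KEY|SECRET|TOKEN|PASSWORD|PASSWD|PRIVATE|DATABASE_URL|DSN)", re.IGNORECASE
)


@dataclass
class EnvVar:
    key: str
    sensitive: bool  # hypothetical flag: True if stored as a "sensitive" variable


def unprotected_secrets(env_vars: list[EnvVar]) -> list[str]:
    """Return names that look like credentials but are not marked sensitive."""
    return [v.key for v in env_vars if not v.sensitive and SECRET_HINT.search(v.key)]


if __name__ == "__main__":
    # Hypothetical project configuration.
    project = [
        EnvVar("STRIPE_SECRET_KEY", sensitive=True),
        EnvVar("DATADOG_API_KEY", sensitive=False),
        EnvVar("DATABASE_URL", sensitive=False),
        EnvVar("FEATURE_FLAG_THEME", sensitive=False),
    ]
    for name in unprotected_secrets(project):
        print(f"Mark as sensitive and rotate: {name}")
```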

As the investigation continues, Vercel has pledged to remain transparent, promising to contact any additional customers if evidence of further data exfiltration is discovered. For now, the tech community remains on high alert, as the ShinyHunters group continues to use stolen data to pressure organizations into paying large ransoms.