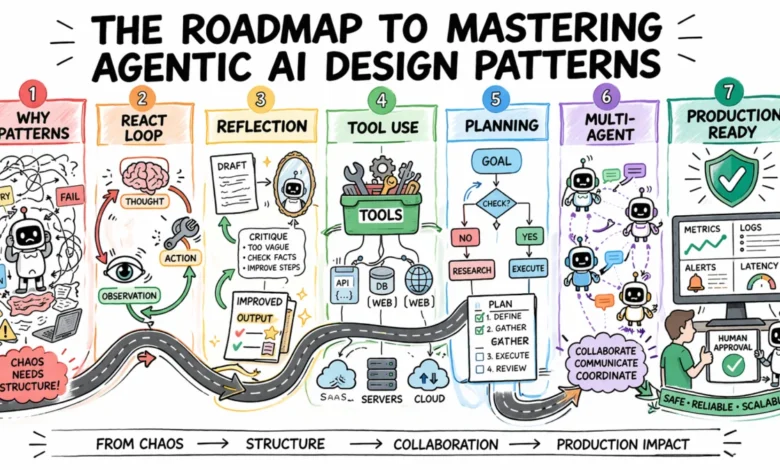

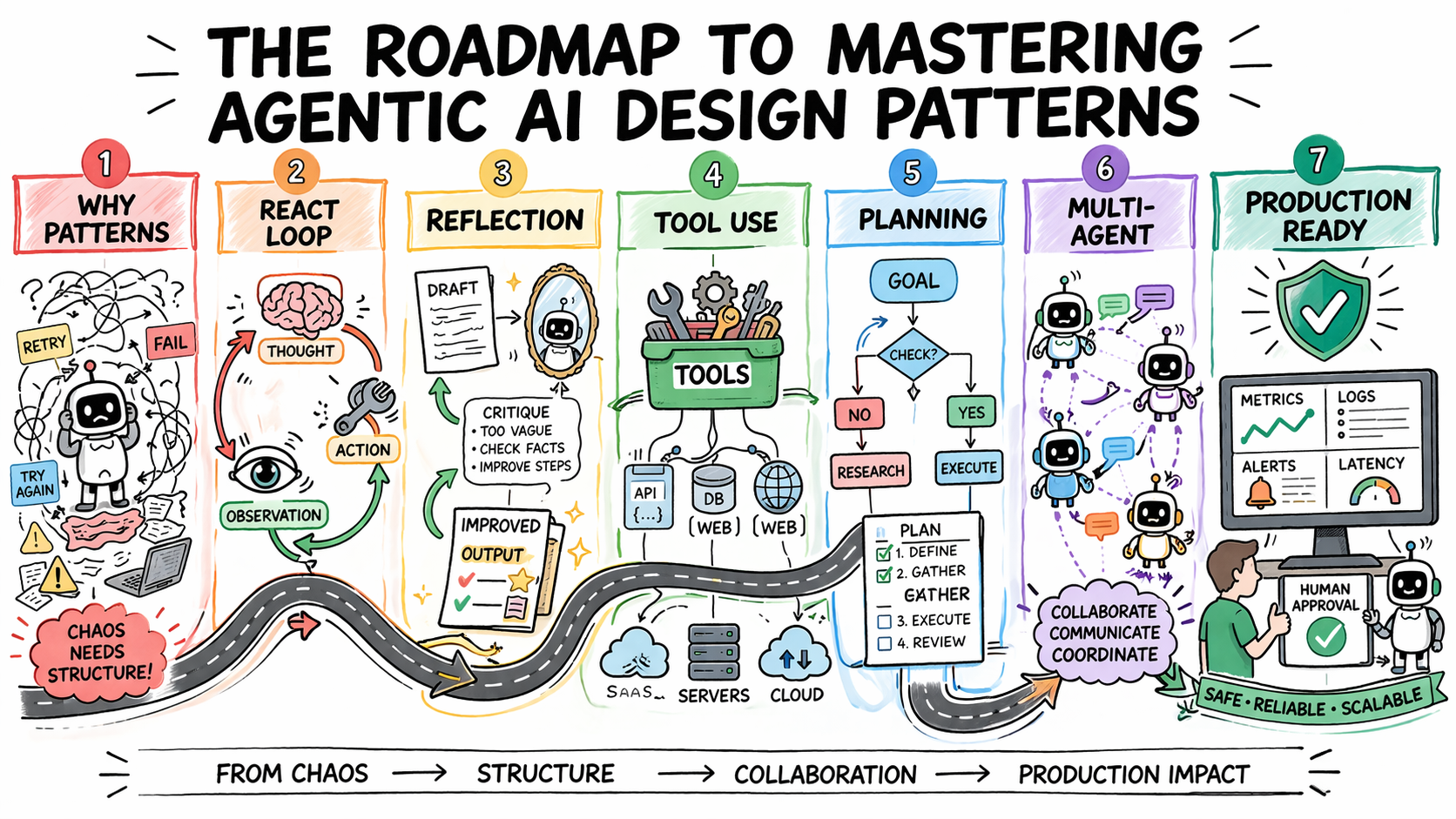

The Roadmap to Mastering Agentic AI Design Patterns

The development of reliable and scalable agentic AI systems hinges on the systematic selection and application of established design patterns. Without a governing framework, agent behavior can become unpredictable, debugging becomes a significant challenge, and systematic improvement is nearly impossible. This is particularly true for multi-step workflows where early errors cascade through subsequent operations. Agentic design patterns offer reusable solutions to recurring problems, dictating how agents reason, evaluate their outputs, select and invoke tools, divide responsibilities among multiple agents, and determine when human intervention is necessary. Strategic pattern selection transforms agent behavior into a predictable, debuggable, and composable system, capable of scaling with growing requirements.

This article presents a practical roadmap for understanding and implementing agentic AI design patterns. It will delve into why pattern selection is a critical architectural decision, explore the core agentic design patterns currently utilized in production, and discuss their appropriate applications, inherent trade-offs, and how they can be layered to construct sophisticated real-world systems.

The Imperative for Design Patterns in Agentic AI

A fundamental shift in perspective is required when addressing agent failures. The initial instinct for many developers is to attribute errors to prompt engineering, assuming that a better system prompt will rectify flawed agent actions. While this can sometimes be the case, more frequently, the root cause lies in architectural deficiencies. An agent caught in an infinite loop, for instance, may be suffering from a lack of explicitly designed stopping conditions. Inconsistent outputs from identical inputs often signal an absence of a structured decision-making framework.

Design patterns provide repeatable architectural templates that define the operational logic of an agent’s execution loop: how it determines the next action, when to terminate, how to recover from errors, and how to reliably interface with external systems. Their absence renders agent behavior exceptionally difficult to debug and scale.

A common pitfall for development teams is the premature adoption of overly complex patterns. The allure of multi-agent systems, intricate orchestration, or dynamic planning can lead to unnecessary sophistication. However, the cost of such premature complexity in agentic systems is substantial. Increased model calls translate directly to higher latency and token expenditure. A proliferation of agents introduces more potential failure points. Elaborate orchestration amplifies the risk of coordination bugs. The most significant mistake is often the adoption of complex patterns before simpler approaches have demonstrably reached their limitations.

In practice, development teams should:

- Prioritize Simplicity: Begin with the most straightforward pattern that effectively addresses the problem.

- Add Complexity Iteratively: Introduce more sophisticated patterns only when existing ones prove inadequate.

- Focus on Core Problems: Address fundamental issues like reasoning, error handling, and tool integration before exploring advanced multi-agent dynamics.

Step 1: The ReAct Pattern as a Foundation

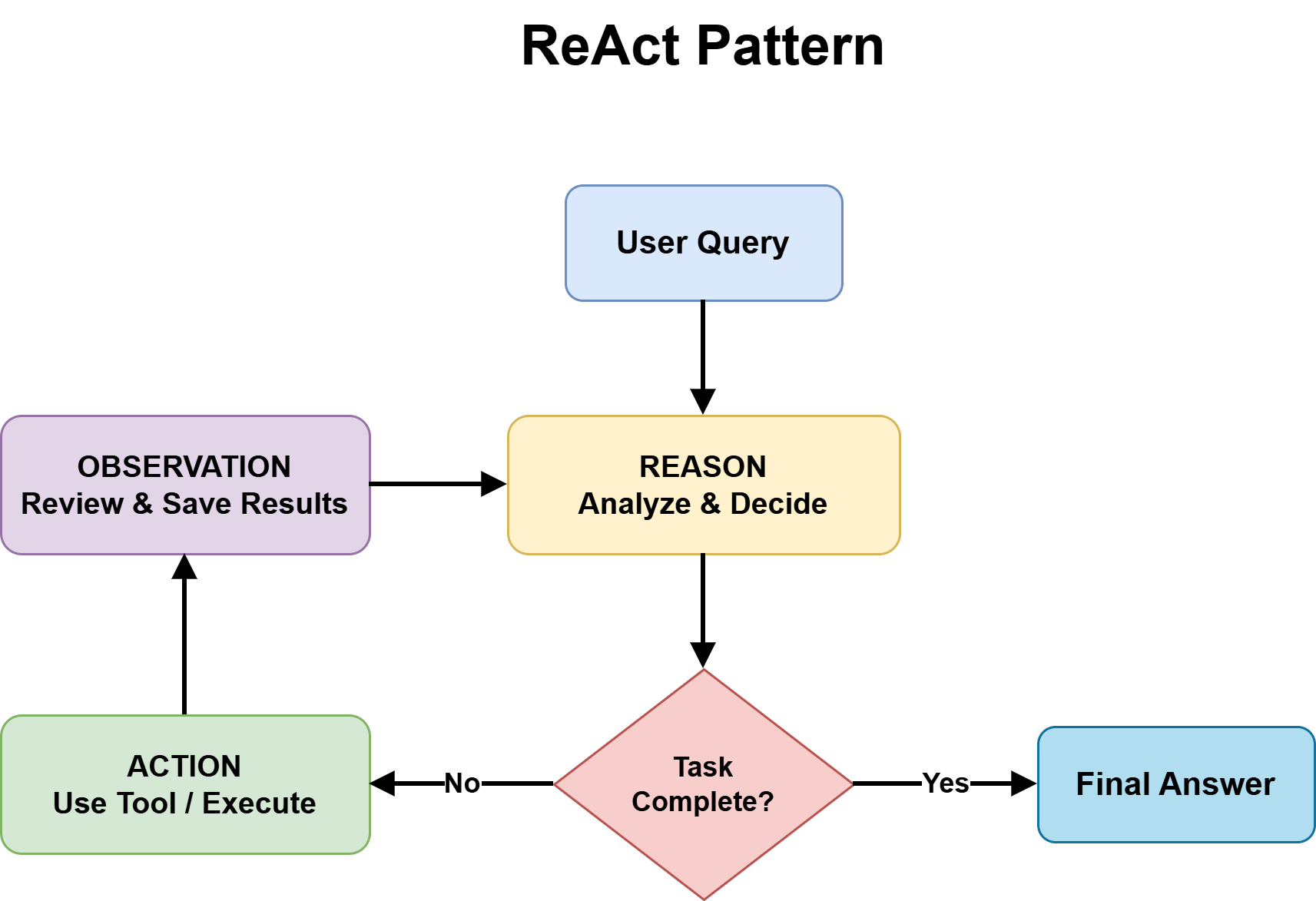

The ReAct (Reasoning and Acting) pattern is the most foundational agentic design pattern and a sensible default for complex, unpredictable tasks. It combines chain-of-thought reasoning with external tool use in a continuous feedback loop that cycles through three phases:

- Thought: The agent analyzes the current state, formulates a plan, or identifies the next logical step required to achieve the objective. This involves internal reasoning based on available information and the task’s goals.

- Action: Based on the reasoned thought, the agent either invokes an external tool (e.g., an API call, database query, or code execution) or generates a final output if the task is deemed complete.

- Observation: The agent receives the result from the executed action, which could be data retrieved from a tool, an error message, or the final answer. This observation then informs the subsequent "Thought" phase.

This cycle repeats until the task is successfully completed or a predefined stopping condition is met. The efficacy of the ReAct pattern lies in its externalization of the reasoning process. Every decision made by the agent is transparent, allowing for precise identification of logical breakdowns during debugging, rather than attempting to decipher a black-box output. Furthermore, by grounding each reasoning step in an observable outcome before proceeding, it mitigates the tendency of models to jump to premature conclusions, thereby reducing the hallucinations that arise when a model reasons without real-world feedback.

However, the ReAct pattern is not without its trade-offs. Each iteration of the loop necessitates an additional model call, inevitably increasing latency and operational costs. Errors originating from tool outputs can propagate and negatively impact subsequent reasoning steps. The inherent non-deterministic nature of model behavior means that identical inputs may lead to divergent reasoning paths, posing consistency challenges in regulated environments. Without explicit iteration caps, the loop can persist indefinitely, leading to escalating costs.
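The thought-action-observation cycle and its iteration cap can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `react_loop`, `Step`, `scripted_policy`, and `add_tool` are hypothetical names, and the scripted policy stands in for a real model call so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Step:
    """One recorded iteration of the loop."""
    thought: str
    action: str
    action_input: str
    observation: str = ""


# A policy maps (task, trace so far) to (thought, action, action_input).
# In a real agent this would be a model call; here it is an assumption.
Policy = Callable[[str, List[Step]], Tuple[str, str, str]]


def react_loop(policy: Policy, tools: Dict[str, Callable[[str], str]],
               task: str, max_steps: int = 5) -> Tuple[Optional[str], List[Step]]:
    trace: List[Step] = []
    for _ in range(max_steps):  # explicit cap: the loop cannot run forever
        thought, action, action_input = policy(task, trace)
        if action == "finish":
            trace.append(Step(thought, action, action_input))
            return action_input, trace
        tool = tools.get(action)
        if tool is None:
            observation = f"error: unknown tool {action!r}"
        else:
            try:
                observation = tool(action_input)
            except Exception as exc:
                # Tool errors become observations the policy can react to.
                observation = f"error: {exc}"
        trace.append(Step(thought, action, action_input, observation))
    return None, trace  # cap reached without a final answer


def scripted_policy(task: str, trace: List[Step]) -> Tuple[str, str, str]:
    """Hypothetical stand-in for the model: call a tool once, then finish."""
    if not trace:
        return ("I need to compute the sum.", "add", "2,3")
    return ("The tool returned the sum.", "finish", trace[-1].observation)


def add_tool(arg: str) -> str:
    a, b = arg.split(",")
    return str(int(a) + int(b))


answer, trace = react_loop(scripted_policy, {"add": add_tool}, "What is 2 + 3?")
```

Returning the full trace alongside the answer keeps every thought, action, and observation available for debugging, and a `None` answer signals that the cap was hit rather than that the task succeeded.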

The ReAct pattern is best suited for scenarios where the solution path is not predetermined, such as adaptive problem-solving, multi-source research, and customer support workflows characterized by variable complexity. Conversely, it should be avoided when speed is the paramount concern or when inputs are sufficiently well-defined that a fixed workflow would offer a more efficient and cost-effective solution.

Step 2: Enhancing Output Quality with Reflection

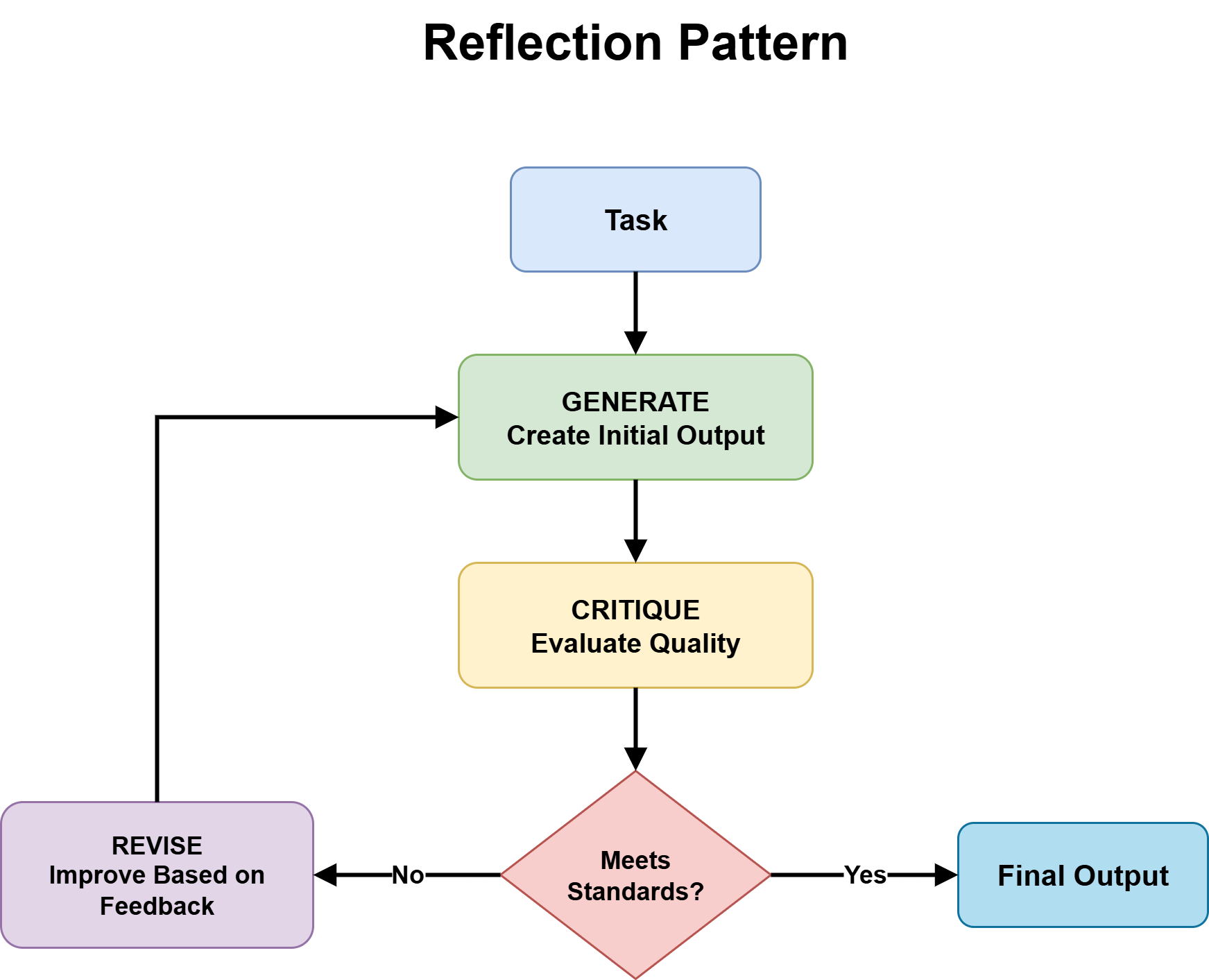

Reflection imbues an agent with the capability to critically evaluate and refine its own outputs before presenting them to the user. This pattern operates on a "generation-critique-refinement" cycle. The agent first produces an initial output, then rigorously assesses it against predefined quality criteria. This assessment then serves as the basis for subsequent revisions. This iterative cycle continues for a predetermined number of iterations or until the output meets the established quality threshold.

The reflection pattern proves particularly effective when the critique process is specialized. For instance, an agent tasked with reviewing code can focus its critique on identifying bugs, edge cases, or security vulnerabilities. Similarly, an agent reviewing a legal contract can pinpoint missing clauses or logical inconsistencies. Integrating the critique step with external verification tools – such as linters, compilers, or schema validators – amplifies the benefits, as the agent receives deterministic feedback rather than relying solely on its own subjective judgment.

Several design decisions are crucial for the successful implementation of reflection. The critic component should ideally be independent of the generator. At a minimum, this involves distinct system prompts with differing instructions. In high-stakes applications, employing a separate model for critique can be beneficial, preventing the critic from inheriting the same blind spots as the generator and thus avoiding superficial self-agreement. Explicit iteration bounds are also non-negotiable. Without a maximum loop count, an agent that perpetually identifies marginal improvements may stall rather than converge on a satisfactory solution.
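A bounded generate-critique-revise loop with an independent, deterministic critic might look like the sketch below. The names `reflect`, `syntax_critic`, `stub_generate`, and `stub_revise` are hypothetical; the generator and reviser are canned stand-ins for model calls, and the critic uses Python's own parser as the external verifier.

```python
import ast
from typing import Callable, List, Tuple


def syntax_critic(code: str) -> List[str]:
    """Deterministic critic: returns a list of issues, empty if the code parses."""
    try:
        ast.parse(code)
    except SyntaxError as exc:
        return [f"syntax error on line {exc.lineno}: {exc.msg}"]
    return []


def reflect(generate: Callable[[str], str],
            critique: Callable[[str], List[str]],
            revise: Callable[[str, List[str]], str],
            task: str, max_rounds: int = 3) -> Tuple[str, int]:
    """Return (final draft, number of revisions applied)."""
    draft = generate(task)
    for rounds_used in range(max_rounds):  # explicit iteration bound
        issues = critique(draft)
        if not issues:
            return draft, rounds_used
        draft = revise(draft, issues)
    # Bound reached: the last revision has not been re-checked by the critic.
    return draft, max_rounds


def stub_generate(task: str) -> str:
    # Assumption: canned output with a deliberate bug (missing colon).
    return "def add(a, b) return a + b"


def stub_revise(draft: str, issues: List[str]) -> str:
    # Assumption: a real reviser would be a model call that reads `issues`.
    return draft.replace(") return", "): return")


final, rounds = reflect(stub_generate, syntax_critic, stub_revise, "write add()")
```

Because the critic is a separate function with its own criteria, it can be swapped for a linter, a schema validator, or a second model without touching the generator.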

Reflection is the optimal pattern when output quality is prioritized over speed, and when tasks possess clear correctness criteria that can be systematically evaluated. It introduces additional cost and latency that may not be justifiable for simple factual queries or applications with stringent real-time constraints.

Step 3: Tool Use as a Core Architectural Pillar

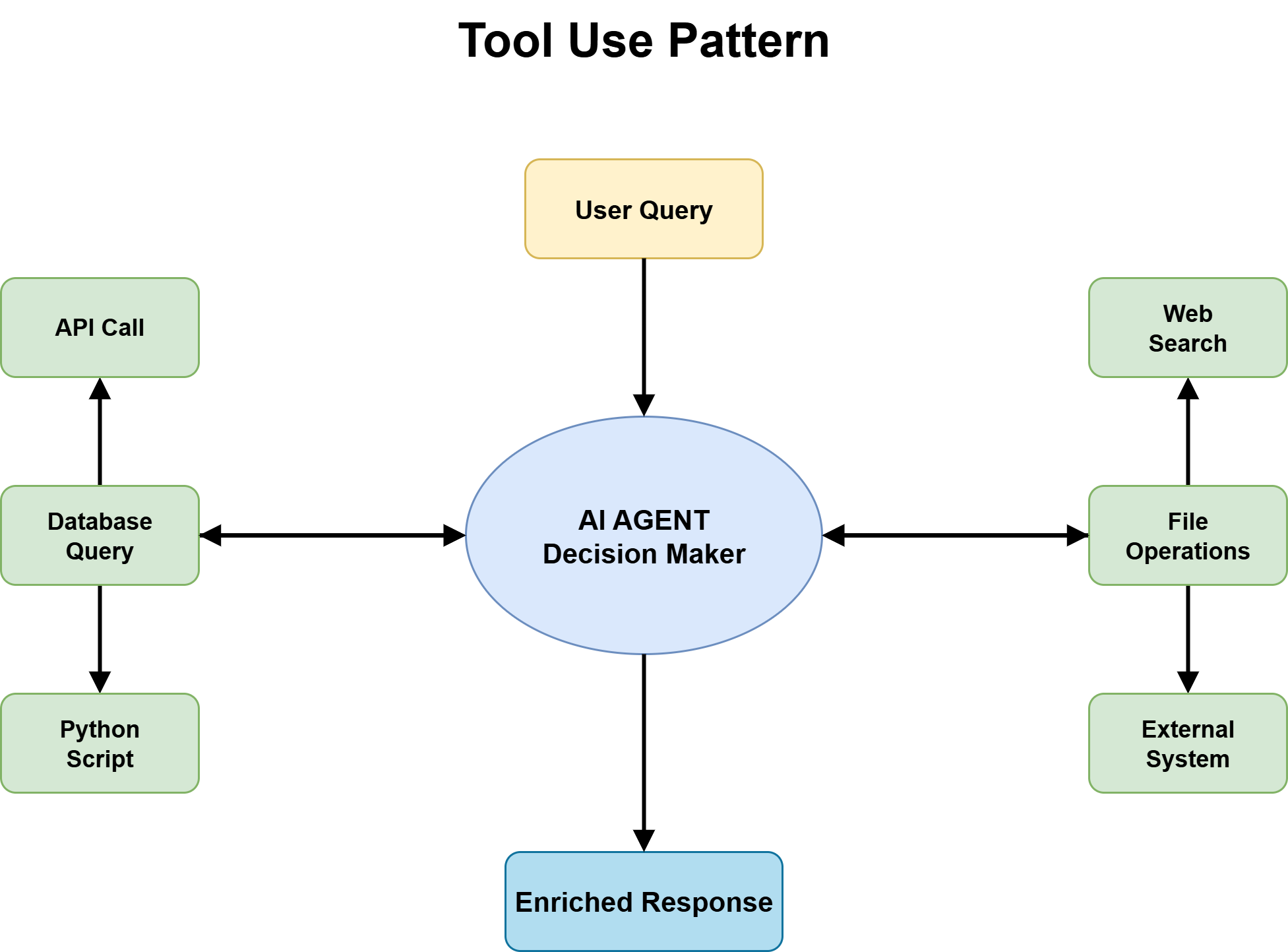

Tool use is the pivotal pattern that transforms an agent from a mere knowledge repository into an active system capable of performing actions. Without it, an agent lacks access to current information, external systems, and the ability to initiate real-world actions. With the integration of tool use, agents can effectively call APIs, query databases, execute code, retrieve documents, and interact with various software platforms. For nearly all production agents designed to handle real-world tasks, tool use forms the bedrock upon which all other functionalities are built.

The most critical architectural decision in this domain is the establishment of a fixed tool catalog with stringent input and output schemas. Without clear schemas, agents resort to guesswork when invoking tools, leading to predictable failures under edge-case conditions. The descriptions of these tools must be precise enough to enable the agent to accurately reason about which tool is appropriate for a given situation. Overly vague descriptions can result in mismatched calls, while excessively narrow ones may cause the agent to overlook valid use cases.
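One way to make a fixed catalog with strict input schemas concrete is to validate every call before it reaches the tool. The sketch below assumes a deliberately simple schema (parameter name to Python type); `ToolSpec`, `validate_args`, `call_tool`, and the `get_order_status` tool are all hypothetical, and a production system would more likely use JSON Schema or a validation library.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class ToolSpec:
    name: str
    description: str          # what the model reads when choosing a tool
    params: Dict[str, type]   # required arguments and their expected types
    func: Callable[..., Any]


def validate_args(spec: ToolSpec, args: Dict[str, Any]) -> List[str]:
    """Return a list of schema violations; an empty list means the call is valid."""
    errors = [f"missing argument: {key}" for key in spec.params if key not in args]
    for key, value in args.items():
        expected = spec.params.get(key)
        if expected is None:
            errors.append(f"unexpected argument: {key}")
        elif not isinstance(value, expected):
            errors.append(f"{key} must be {expected.__name__}")
    return errors


def call_tool(catalog: Dict[str, ToolSpec], name: str, args: Dict[str, Any]) -> Dict[str, Any]:
    spec = catalog.get(name)
    if spec is None:
        return {"ok": False, "errors": [f"unknown tool: {name}"]}
    errors = validate_args(spec, args)
    if errors:
        # Structured errors can be fed back to the agent as an observation.
        return {"ok": False, "errors": errors}
    return {"ok": True, "result": spec.func(**args)}


def get_order_status(order_id: str) -> str:
    return f"order {order_id}: shipped"  # canned response for illustration


CATALOG = {
    "get_order_status": ToolSpec(
        name="get_order_status",
        description="Look up the shipping status of one order by its ID.",
        params={"order_id": str},
        func=get_order_status,
    )
}

good = call_tool(CATALOG, "get_order_status", {"order_id": "A17"})
bad = call_tool(CATALOG, "get_order_status", {"order_id": 17})
```

Rejecting a malformed call with a specific message gives the agent something to correct on its next step instead of a stack trace from deep inside the tool.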

The second vital decision involves handling tool failures. An agent that inherits the reliability issues of its tools without any failure-handling logic of its own becomes fragile: it can never be more reliable than its least reliable external dependency. APIs are prone to rate limiting, timeouts, unexpected response formats, and behavior changes after updates. Consequently, an agent's tool layer must incorporate explicit error handling, retry mechanisms, and graceful degradation paths for scenarios where tools are unavailable.
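Retries with exponential backoff plus a fallback value cover the most common failure cases. The following is a sketch under stated assumptions: `call_with_retry` and `flaky_api` are hypothetical names, only `TimeoutError` and `ConnectionError` are treated as transient, and the `sleep` parameter is injectable so the example does not actually wait.

```python
import time
from typing import Any, Callable, Dict, Tuple, Type


def call_with_retry(func: Callable[[], Any], *, attempts: int = 3,
                    base_delay: float = 0.5, fallback: Any = None,
                    sleep: Callable[[float], Any] = time.sleep,
                    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
                    ) -> Dict[str, Any]:
    """Call func, retrying transient errors; degrade to `fallback` if all attempts fail."""
    last_error = None
    for attempt in range(attempts):
        try:
            return {"ok": True, "value": func()}
        except retry_on as exc:
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.5s, 1.0s, 2.0s, ...
    # Graceful degradation: report the failure and hand back the fallback.
    return {"ok": False, "value": fallback, "error": repr(last_error)}


flaky_calls = {"count": 0}


def flaky_api() -> str:
    """Hypothetical tool that times out twice, then succeeds."""
    flaky_calls["count"] += 1
    if flaky_calls["count"] < 3:
        raise TimeoutError("upstream timed out")
    return "payload"


delays = []  # records requested delays instead of sleeping
result = call_with_retry(flaky_api, sleep=delays.append)
```

Errors outside `retry_on` propagate immediately, which is deliberate: retrying a malformed request or an authorization failure only adds latency.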

Tool selection accuracy presents a subtler yet equally significant concern. As tool libraries expand, agents must perform more complex reasoning over larger catalogs to identify the correct tool for each task. Performance in tool selection tends to degrade with increasing catalog size. A valuable design principle is to structure tool interfaces in a manner that clearly and unambiguously delineates distinctions between different tools.

Finally, tool use introduces a security surface that agent developers often underestimate. Once an agent gains the ability to interact with real systems – submitting forms, updating records, or initiating transactions – the potential impact of errors escalates considerably. Sandboxed execution environments and human approval gates are indispensable safeguards for high-risk tool invocations.
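An approval gate can be as small as a wrapper that refuses to run designated tools without sign-off. In this sketch the tool names, `HIGH_RISK_TOOLS`, `guarded_call`, and the `approve` callback are all hypothetical; the callback stands in for a human reviewer, which in a real system would typically be a blocking review queue rather than a function.

```python
from typing import Any, Callable, Dict

# Assumption: the team maintains an explicit list of tools with side effects.
HIGH_RISK_TOOLS = {"issue_refund"}


def guarded_call(name: str, args: Dict[str, Any],
                 tools: Dict[str, Callable[..., Any]],
                 approve: Callable[[str, Dict[str, Any]], bool]) -> Dict[str, Any]:
    """Run a tool, but require approval first if it is on the high-risk list."""
    if name not in tools:
        return {"status": "unknown_tool", "tool": name}
    if name in HIGH_RISK_TOOLS and not approve(name, args):
        return {"status": "rejected", "tool": name}
    return {"status": "executed", "tool": name, "result": tools[name](**args)}


def issue_refund(order_id: str, amount: float) -> str:
    return f"refunded {amount:.2f} for {order_id}"  # no real side effect here


def lookup_order(order_id: str) -> str:
    return f"order {order_id}: delivered"


TOOLS = {"issue_refund": issue_refund, "lookup_order": lookup_order}


def reviewer(name: str, args: Dict[str, Any]) -> bool:
    """Stand-in for a human decision: approve only refunds of 50 or less."""
    return args.get("amount", 0) <= 50


small = guarded_call("issue_refund", {"order_id": "A17", "amount": 20.0}, TOOLS, reviewer)
large = guarded_call("issue_refund", {"order_id": "A17", "amount": 900.0}, TOOLS, reviewer)
read_only = guarded_call("lookup_order", {"order_id": "A17"}, TOOLS, reviewer)
```

Read-only tools bypass the gate entirely, so the review burden stays proportional to the number of genuinely risky actions.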

Step 4: Strategic Planning Before Action

Planning emerges as the preferred pattern for tasks characterized by high complexity or significant coordination requirements, where ad-hoc reasoning via a ReAct loop proves insufficient. While ReAct operates through step-by-step improvisation, planning deconstructs a goal into ordered subtasks with explicitly defined dependencies prior to execution.
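A plan with explicit dependencies is a directed acyclic graph, and a valid execution order is a topological sort of it. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (3.9+); the subtask names and the `execution_order` helper are hypothetical, and in a real agent the plan dictionary would come from a model call.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Set


def execution_order(plan: Dict[str, Set[str]]) -> List[str]:
    """Order subtasks so each runs after its dependencies.

    `plan` maps each subtask to the set of subtasks it depends on.
    """
    try:
        return list(TopologicalSorter(plan).static_order())
    except CycleError as exc:
        # Surfacing a circular plan before execution avoids a mid-run failure.
        raise ValueError(f"plan has circular dependencies: {exc.args[1]}") from exc


plan = {
    "design_schema": set(),
    "implement_api": {"design_schema"},
    "write_tests": {"design_schema"},
    "run_tests": {"implement_api", "write_tests"},
}

order = execution_order(plan)
```

Here `implement_api` and `write_tests` have no dependency on each other, so a scheduler is free to run them in either order or in parallel; only their position relative to `design_schema` and `run_tests` is fixed.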

Two primary implementations of planning exist:

- Pre-defined Plans: A static plan is generated upfront, outlining the sequence of actions. This is suitable for well-understood, repeatable workflows.

- Dynamic Plans: The plan is generated and potentially revised iteratively based on intermediate results. This offers greater flexibility for tasks with evolving requirements.

Planning yields significant advantages in tasks demanding real coordination, such as multi-system integrations requiring a specific sequence, research endeavors that necessitate synthesizing information from multiple sources, and development workflows encompassing design, implementation, and testing phases. The primary benefit lies in exposing latent complexities before execution commences, thereby preventing costly mid-run failures.

The trade-offs are straightforward. Planning necessitates an additional model call upfront, which is an unnecessary overhead for simpler tasks. It also presupposes that the task structure can be accurately articulated in advance, a condition not always met.

The rule of thumb is to employ planning when the task structure is articulable before execution and when the coordination between steps is sufficiently complex to benefit from explicit sequencing. For tasks where these conditions are not met, defaulting to ReAct is the more pragmatic approach.

Step 5: Orchestrating Multi-Agent Collaboration

Multi-agent systems distribute work across a network of specialized agents, each possessing focused expertise, a tailored toolset, and a clearly defined role. In the most common arrangement, a central coordinator manages the routing of tasks and the synthesis of results, while specialized agents execute the tasks for which they are best suited.

The benefits of this approach are substantial, including enhanced output quality, independent improvability of individual agents, and a more scalable architectural foundation. However, the complexity of coordination can be equally significant. Successful implementation requires early and decisive answers to key questions:

- Ownership: Explicit definition of which agent holds write authority over shared state is paramount.

- Routing Logic: Determining whether the coordinator employs an LLM or deterministic rules for task distribution is a critical design choice. Most production systems opt for a hybrid approach.

- Orchestration Topology: The specific arrangement of agent interactions significantly shapes the system’s dynamics. Common topologies include:

  - Sequential: Agents execute tasks in a predefined order.

  - Parallel: Multiple agents work on different tasks simultaneously.

  - Hierarchical: Agents are organized in a tree-like structure, with higher-level agents delegating tasks to lower-level ones.

  - Networked: Agents form a more fluid network, interacting based on dynamic needs.
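The hybrid routing approach mentioned above can be illustrated with a coordinator that tries deterministic rules first and consults a model only when no rule matches. Everything here is a hypothetical sketch: the agent names, the keyword rules, and `stub_llm_router`, which stands in for a real model call.

```python
from typing import Callable, Dict, List, Tuple

Agent = Callable[[str], str]


def make_coordinator(agents: Dict[str, Agent],
                     rules: List[Tuple[str, str]],
                     llm_router: Callable[[str], str],
                     default: str) -> Callable[[str], Tuple[str, str]]:
    """Build a handler that returns (chosen agent name, that agent's result)."""
    def handle(task: str) -> Tuple[str, str]:
        lowered = task.lower()
        # 1. Deterministic rules: cheap, predictable, easy to audit.
        for keyword, agent_name in rules:
            if keyword in lowered:
                return agent_name, agents[agent_name](task)
        # 2. Model-based routing for everything the rules do not cover.
        choice = llm_router(task)
        if choice not in agents:  # never trust an unvalidated model choice
            choice = default
        return choice, agents[choice](task)
    return handle


AGENTS: Dict[str, Agent] = {
    "billing": lambda task: "billing agent handled: " + task,
    "research": lambda task: "research agent handled: " + task,
    "general": lambda task: "general agent handled: " + task,
}
RULES = [("invoice", "billing"), ("refund", "billing")]


def stub_llm_router(task: str) -> str:
    """Stand-in for a model: sends questions to research, else names a bogus agent."""
    return "research" if task.endswith("?") else "no_such_agent"


handle = make_coordinator(AGENTS, RULES, stub_llm_router, default="general")
by_rule = handle("Please refund my last order")
by_model = handle("What changed in the v2 pricing?")
by_default = handle("hello there")
```

Validating the model's choice against the known agent set keeps a hallucinated agent name from turning into a routing failure.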

The strategic advice for adopting multi-agent architectures is to begin with a single, capable agent utilizing ReAct and appropriate tools. Transitioning to a multi-agent system should only be considered when a clear bottleneck emerges that cannot be resolved through enhancements to the single-agent system.

Step 6: Production Readiness: Evaluation and Safety by Design

Pattern selection represents only half the challenge. Ensuring the reliability of these patterns in production necessitates deliberate evaluation, explicit safety design, and continuous monitoring.

Defining Pattern-Specific Evaluation Criteria: Each pattern requires tailored evaluation metrics:

- ReAct: Assess reasoning accuracy, tool invocation success rates, and the efficiency of the thought-action-observation loop.

- Reflection: Measure the degree of output improvement post-reflection and the cost-effectiveness of the refinement cycles.

- Tool Use: Track tool invocation accuracy, error rates, and the performance impact of tool latency.

- Planning: Evaluate the plan’s completeness, the accuracy of subtask sequencing, and the efficiency gains over ad-hoc approaches.

- Multi-Agent Collaboration: Assess inter-agent communication effectiveness, task distribution fairness, and overall system throughput.
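Several of these metrics can be computed directly from recorded runs. The sketch below assumes a hypothetical log format in which each run has a `success` flag and a list of steps tagged with a `kind`; `summarize_runs` and the sample data are illustrative only.

```python
from typing import Any, Dict, List, Optional


def summarize_runs(runs: List[Dict[str, Any]]) -> Dict[str, Optional[float]]:
    """Aggregate task success, tool success, and loop length across runs."""
    if not runs:
        raise ValueError("need at least one run to summarize")
    tool_calls = [step for run in runs for step in run["steps"]
                  if step["kind"] == "tool_call"]
    succeeded = sum(1 for step in tool_calls if step["ok"])
    return {
        "task_success_rate": sum(1 for run in runs if run["success"]) / len(runs),
        # None rather than 0.0 when no tool was ever called.
        "tool_success_rate": succeeded / len(tool_calls) if tool_calls else None,
        "avg_steps": sum(len(run["steps"]) for run in runs) / len(runs),
    }


# Hypothetical recorded runs.
runs = [
    {"success": True, "steps": [
        {"kind": "tool_call", "ok": True},
        {"kind": "tool_call", "ok": False},
        {"kind": "final"},
    ]},
    {"success": False, "steps": [
        {"kind": "tool_call", "ok": True},
    ]},
]

summary = summarize_runs(runs)
```

Tracking these numbers per pattern and per release makes it possible to tell whether adding a pattern such as reflection actually improved outcomes or only added steps.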

Developing failure mode tests early in the development lifecycle is crucial. These tests should probe scenarios such as tool misuse, infinite loops, routing failures, and degraded performance as the context grows long.

Observability must be treated as a non-negotiable requirement. Step-level traces – capturing reasoning, tool calls, tool results, and decisions at each juncture of the execution loop – are indispensable for understanding agent behavior when issues arise.
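A step-level trace can start as a list of structured events serialized to JSON Lines. This is a minimal sketch: the `Tracer` class, its field names, and the event kinds are assumptions, and a production system would usually export to a tracing backend instead of holding events in memory.

```python
import json
import time
from typing import Any, Callable, Dict, List


class Tracer:
    """Collects one structured event per step of an agent run."""

    def __init__(self, run_id: str, clock: Callable[[], float] = time.time) -> None:
        self.run_id = run_id
        self.clock = clock  # injectable so tests can use a fixed time
        self.events: List[Dict[str, Any]] = []

    def record(self, kind: str, **payload: Any) -> None:
        self.events.append({
            "run_id": self.run_id,
            "step": len(self.events),  # position in the execution loop
            "ts": self.clock(),
            "kind": kind,              # e.g. thought, tool_call, tool_result, decision
            **payload,
        })

    def to_jsonl(self) -> str:
        """One JSON object per line, ready to append to a log file."""
        return "\n".join(json.dumps(event, sort_keys=True) for event in self.events)


tracer = Tracer("run-1", clock=lambda: 0.0)
tracer.record("thought", text="I need the sum of 2 and 3.")
tracer.record("tool_call", tool="add", args={"a": 2, "b": 3})
tracer.record("tool_result", tool="add", result="5")
log_lines = tracer.to_jsonl().splitlines()
```

Keeping a shared `run_id` and a monotonically increasing `step` on every event is what lets a failed run be replayed in order later.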

Guardrails should be designed based on identified risks. Validation mechanisms, rate limiting, and approval gates are essential where appropriate. The OWASP Top 10 for LLM Applications provides a valuable framework for identifying and mitigating common vulnerabilities.

Furthermore, human-in-the-loop workflows should be integrated as a design pattern, not merely a fallback mechanism. Most production agents judiciously divide labor: routine tasks are automated, while specific decision categories are escalated to human oversight. For decisions that are difficult to reverse or carry significant accountability, this escalation path represents a robust design choice rather than a limitation of the system.

Existing agent orchestration frameworks such as LangGraph, AutoGen, and CrewAI, together with validation libraries such as Guardrails AI, can significantly streamline the development and deployment of robust agentic systems.

Conclusion: Evolving Architectures for Intelligent Agents

Agentic AI design patterns are not a static checklist to be completed once. They are dynamic architectural tools that must evolve in tandem with the system they govern. The recommended approach is to commence with the simplest pattern that effectively addresses the problem at hand, introducing complexity incrementally only when necessitated by performance limitations or emerging requirements. A substantial investment in observability and rigorous evaluation is paramount. This methodical approach fosters the development of agentic systems that are not only functional but also demonstrably reliable and scalable, paving the way for more sophisticated and trustworthy AI applications in the future.