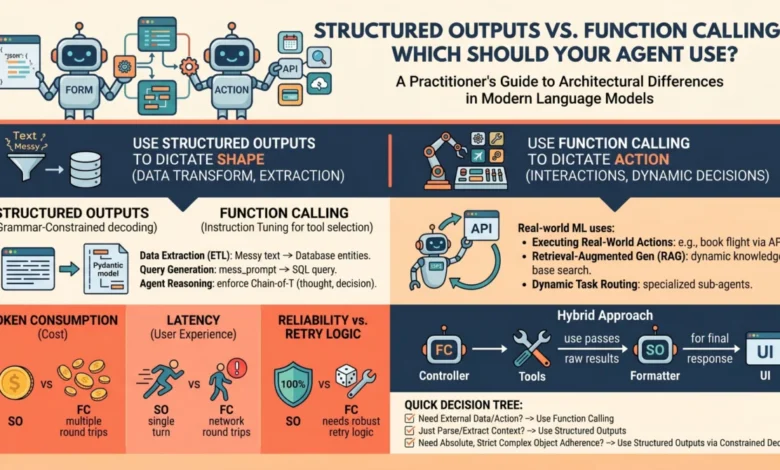

Structured Outputs vs. Function Calling: Which Should Your Agent Use?

In the rapidly evolving landscape of artificial intelligence, language models (LMs) are moving from mere text generators to components of autonomous agents and production software pipelines. They are designed for conversational interaction, and their raw text output is hard to integrate programmatically. To address this, leading providers such as OpenAI, Anthropic, and Google (with Gemini) have introduced two key mechanisms: structured outputs and function calling. Though superficially similar, these capabilities serve distinct architectural purposes, and understanding the difference is crucial for building reliable and efficient AI systems. Conflating them can lead to brittle architectures, increased latency, and unnecessary operational costs.

The Core Challenge: From Unstructured Text to Deterministic Systems

At their fundamental level, language models operate as text-in, text-out systems. For a human user engaging with a chatbot, this free-form text exchange is intuitive. However, for machine learning practitioners aiming to build autonomous agents capable of complex task execution or integrating LMs into deterministic software pipelines, raw, unstructured text is a significant hurdle. Parsing, routing, and ensuring the reliability of systems that depend on such output is a complex and often error-prone endeavor. The need for predictable, machine-readable outputs and seamless interaction with external environments has driven the development of specialized LM functionalities.

The advent of structured outputs and function calling represents a significant leap forward in bridging this gap. Both mechanisms let LMs produce outputs that are predictable and directly usable by downstream systems, and both typically work by passing a JSON Schema to the API so that the model generates structured key-value pairs rather than prose. However, their architectural roles in agent design are fundamentally different, shaping how agents interact with the world and process information.

Unpacking the Mechanics: A Deep Dive into How They Operate

To effectively discern when to employ each capability, a granular understanding of their underlying mechanics and API interactions is essential.

Structured Outputs: Grammar-Constrained Decoding for Form and Fidelity

Historically, eliciting JSON output from a language model was largely an exercise in sophisticated prompt engineering, often requiring iterative refinement and extensive retry logic. Phrases like "You are a helpful assistant that only speaks in JSON" were common, but their reliability was often questionable.

Modern "structured outputs" replace this guesswork with grammar-constrained decoding. This technique, available in libraries such as Outlines and natively in features like OpenAI’s Structured Outputs, constrains the token probabilities at every step of generation. If the schema dictates that the next token must be a quotation mark, a boolean literal, or the start of a number, the probability of every non-compliant token is masked out (set to zero) before the next token is chosen. The model’s output therefore cannot leave the specified format.
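The masking step can be illustrated with a toy example. This is a minimal sketch under heavy simplifying assumptions: an eight-token vocabulary, a hard-coded `fake_logits` function standing in for the model, and a "schema" reduced to a fixed set of valid strings. Real libraries such as Outlines roughly work by compiling a JSON Schema into a grammar or finite-state machine over the tokenizer's full vocabulary; none of the names below come from those libraries.

```python
import json
import math

# Toy vocabulary (hypothetical); real tokenizers have tens of thousands of tokens.
VOCAB = ['{"sentiment": "', "positive", "negative", "neutral", '"}', "Sure!", " Here", "<eos>"]

# Toy "schema": the only strings this grammar accepts.
VALID_OUTPUTS = [f'{{"sentiment": "{label}"}}' for label in ("positive", "negative", "neutral")]


def allowed_mask(prefix: str) -> list[bool]:
    """A token is allowed if prefix + token can still grow into a valid output.
    <eos> is allowed only once the prefix is already a complete valid output."""
    mask = []
    for tok in VOCAB:
        if tok == "<eos>":
            mask.append(prefix in VALID_OUTPUTS)
        else:
            mask.append(any(v.startswith(prefix + tok) for v in VALID_OUTPUTS))
    return mask


def fake_logits(prefix: str) -> list[float]:
    """Stand-in for the model: it prefers chatty tokens and leans 'negative'."""
    scores = {"Sure!": 5.0, " Here": 4.0, "negative": 2.0, "positive": 1.0}
    return [scores.get(tok, 0.0) for tok in VOCAB]


def masked_softmax(logits: list[float], mask: list[bool]) -> list[float]:
    """Set disallowed logits to -inf so their probability becomes exactly 0."""
    masked = [x if ok else -math.inf for x, ok in zip(logits, mask)]
    top = max(masked)  # assumes at least one token is allowed
    exps = [math.exp(x - top) for x in masked]  # exp(-inf) == 0.0
    total = sum(exps)
    return [e / total for e in exps]


def constrained_greedy_decode(max_steps: int = 10) -> str:
    """Greedy decoding where each step picks the most likely *allowed* token."""
    out = ""
    for _ in range(max_steps):
        probs = masked_softmax(fake_logits(out), allowed_mask(out))
        best = VOCAB[max(range(len(VOCAB)), key=probs.__getitem__)]
        if best == "<eos>":
            break
        out += best
    return out


result = constrained_greedy_decode()
print(result)                           # {"sentiment": "negative"}
print(json.loads(result)["sentiment"])  # negative
```

Unconstrained, this fake model would start with "Sure!"; with the mask, only the three valid strings are reachable. The mask decides the shape of the output, while the choice of "negative" still comes from the model's own preferences.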

This is fundamentally a single-turn generation process whose primary objective is adherence to a prescribed form. The model answers the prompt directly, but at each step its vocabulary is confined to what the schema allows. The result is near-100% schema compliance (the main exceptions are edge cases such as a response cut off by the token limit), which makes the output predictable and safe to parse. Note that the schema governs the shape of the output, not the correctness of the values placed in it.

Function Calling: Instruction Tuning for Agentic Action and Interaction

Function calling, by contrast, is rooted in instruction tuning. During training, models are fine-tuned to recognize contexts where they lack the information needed to fulfill a user’s request, or where the prompt explicitly signals an intent to perform an action.

When a developer provides a model with a list of available "tools" or functions, they are essentially equipping the model with a decision-making framework. The model is instructed: "If you encounter a situation where you need external information, or if the user asks you to perform a task that requires an external action, you can pause your text generation, select an appropriate tool from this list, and generate the necessary arguments to execute it."
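In code, "equipping the model with tools" usually means sending a name, a natural-language description, and a JSON Schema for the parameters of each function, while the implementations stay inside the application. The sketch below assumes a provider-neutral shape (`name`, `description`, `parameters`); OpenAI, Anthropic, and Gemini each wrap these fields slightly differently, so the exact request format should be taken from the provider's documentation. `get_weather` and `send_email` are hypothetical stubs.

```python
import json
from typing import Any, Callable


def get_weather(city: str, unit: str = "celsius") -> dict:
    """Hypothetical stub; a real tool would call a weather API."""
    return {"city": city, "temperature": 21, "unit": unit}


def send_email(to: str, subject: str, body: str) -> dict:
    """Hypothetical stub; a real tool would hand off to a mail service."""
    return {"status": "queued", "to": to}


# What the model sees: names, descriptions, and parameter schemas only.
TOOL_SPECS: list[dict[str, Any]] = [
    {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    },
    {
        "name": "send_email",
        "description": "Send an email on the user's behalf.",
        "parameters": {
            "type": "object",
            "properties": {
                "to": {"type": "string"},
                "subject": {"type": "string"},
                "body": {"type": "string"},
            },
            "required": ["to", "subject", "body"],
        },
    },
]

# What only the application sees: the code behind each advertised name.
TOOL_IMPLS: dict[str, Callable[..., dict]] = {
    "get_weather": get_weather,
    "send_email": send_email,
}

# Sanity check: every advertised tool has an implementation.
assert {spec["name"] for spec in TOOL_SPECS} == set(TOOL_IMPLS)

print(json.dumps([spec["name"] for spec in TOOL_SPECS]))  # ["get_weather", "send_email"]
```

Keeping specs and implementations separate is a deliberate design choice: the model can only name a tool and propose arguments, while the application decides whether and how anything actually runs.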

This mechanism facilitates an inherently multi-turn, interactive workflow. The sequence typically unfolds as follows:

- User Prompt: The user provides an initial request.

- Model Decision: The LM analyzes the prompt and determines if it can answer directly or if it needs to call a function.

- Function Call Generation: If a function call is deemed necessary, the model generates a structured output (often JSON) specifying the function name and its arguments, formatted according to the provided schema for that function.

- Tool Execution: The external application or agent receives this structured output, parses it, and executes the specified function with the provided arguments.

- Tool Response: The executed function returns a result.

- Model Continues: The application sends the tool’s result back to the LM in a follow-up request, and the model uses it to generate a final, coherent response to the user (or decides to call another tool).
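The six steps above can be sketched as a loop. To keep it self-contained and runnable, `scripted_model` is a hypothetical stand-in that replays canned decisions instead of calling a real API, and the message format (`role`, `tool_call`, `content`) is invented for illustration rather than taken from any provider.

```python
import json


def get_stock_price(ticker: str) -> dict:
    """Hypothetical tool stub; a real one would query a market-data API."""
    return {"ticker": ticker, "price": 123.45}


TOOLS = {"get_stock_price": get_stock_price}


def scripted_model(messages: list[dict]) -> dict:
    """Stand-in for an LM API call. Without a tool result it requests a tool;
    once a tool result is in the history, it answers in prose."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"role": "assistant",
                "tool_call": {"name": "get_stock_price",
                              "arguments": json.dumps({"ticker": "ACME"})}}
    data = json.loads(tool_results[-1]["content"])
    return {"role": "assistant",
            "content": f"{data['ticker']} is trading at {data['price']}."}


def run_agent(user_prompt: str, max_turns: int = 5) -> str:
    messages = [{"role": "user", "content": user_prompt}]      # User Prompt
    for _ in range(max_turns):
        reply = scripted_model(messages)                         # Model Decision
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]                              # final answer
        args = json.loads(call["arguments"])                     # Function Call Generation (parsed)
        result = TOOLS[call["name"]](**args)                     # Tool Execution
        messages.append({"role": "tool", "name": call["name"],
                         "content": json.dumps(result)})         # Tool Response
        # Loop back: Model Continues with the tool result in its context.
    raise RuntimeError("agent did not finish within max_turns")


print(run_agent("What is ACME trading at?"))  # ACME is trading at 123.45.
```

Even this trivial question costs two model calls; that extra round trip is the source of function calling's added latency. The `max_turns` cap guards against a model that keeps requesting tools indefinitely.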

This process allows the LM to dynamically interact with its environment, access real-time data, or trigger complex computational processes, thereby enhancing its capabilities beyond simple text generation.

When to Choose Structured Outputs: The Foundation of Data Standardization

Structured outputs are the default and preferred approach when the primary objective is pure data transformation, extraction, or standardization. They are ideal for scenarios where the language model already possesses all the necessary information within its prompt and context window and simply needs to reshape it into a machine-readable format.

Primary Use Case: The model has access to all required information and its task is to reformat or extract specific data points.

Examples for Practitioners:

- Data Extraction: Extracting names, dates, and amounts from unstructured invoices or receipts.

- Data Formatting: Converting free-text product descriptions into a standardized JSON format with fields like product_name, description, price, and features.

- Sentiment Analysis Categorization: Classifying user feedback into predefined categories like "positive," "negative," or "neutral" with associated confidence scores.

- Summarization into Key Points: Extracting the most critical points from a long document as a list (for example, a JSON array of strings).

- Entity Recognition and Linking: Identifying named entities (e.g., people, organizations, locations) and their types within a text.
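For the invoice-extraction case, the schema and the downstream parsing might look like the following. This is a sketch: `INVOICE_SCHEMA` is a hypothetical JSON Schema that would be passed to a provider's structured-output option (the exact request parameter differs by provider), and `model_output` is a hard-coded string standing in for the model's constrained response. Because decoding enforces the shape, parsing reduces to `json.loads` plus dataclass construction; the semantic check at the end is still worth keeping, since a schema cannot tell whether the numbers are right.

```python
import json
from dataclasses import dataclass

# Hypothetical schema for the extraction task.
INVOICE_SCHEMA = {
    "type": "object",
    "properties": {
        "vendor": {"type": "string"},
        "invoice_date": {"type": "string", "description": "ISO 8601 date"},
        "line_items": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "description": {"type": "string"},
                    "amount": {"type": "number"},
                },
                "required": ["description", "amount"],
                "additionalProperties": False,
            },
        },
        "total": {"type": "number"},
    },
    "required": ["vendor", "invoice_date", "line_items", "total"],
    "additionalProperties": False,
}


@dataclass
class LineItem:
    description: str
    amount: float


@dataclass
class Invoice:
    vendor: str
    invoice_date: str
    line_items: list[LineItem]
    total: float


def parse_invoice(raw: str) -> Invoice:
    """The shape is assumed to be enforced by constrained decoding."""
    data = json.loads(raw)
    items = [LineItem(**item) for item in data["line_items"]]
    return Invoice(data["vendor"], data["invoice_date"], items, data["total"])


def totals_match(inv: Invoice, tolerance: float = 0.01) -> bool:
    """Semantic check the schema cannot express: do the line items add up?"""
    return abs(sum(i.amount for i in inv.line_items) - inv.total) <= tolerance


# Stand-in for a constrained model response.
model_output = ('{"vendor": "Acme Corp", "invoice_date": "2024-03-01", '
                '"line_items": [{"description": "Widgets", "amount": 40.0}, '
                '{"description": "Shipping", "amount": 9.5}], "total": 49.5}')

invoice = parse_invoice(model_output)
print(invoice.vendor, invoice.total, totals_match(invoice))  # Acme Corp 49.5 True
```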

The Verdict: Employ structured outputs when the "action" required from the model is primarily formatting or structuring information it already has. Because there is no mid-generation round trip to external systems and the schema is enforced during decoding, this approach is highly reliable, keeps latency low, and all but eliminates the schema-parsing errors that plague less constrained methods. It forms the bedrock for predictable data pipelines.

When to Choose Function Calling: The Engine of Agentic Autonomy

Function calling is the indispensable engine driving agentic autonomy. While structured outputs dictate the shape of the data, function calling dictates the control flow of an application. It lets models go beyond answering from their own context and engage in dynamic decision-making and external interactions.

Primary Use Case: Situations requiring external interactions, dynamic decision-making, and scenarios where the model needs to acquire information it does not currently possess.

Examples for Practitioners:

- Real-time Information Retrieval: Answering queries about current stock prices, weather conditions, or flight statuses by calling external APIs.

- Task Execution: Booking appointments, sending emails, or controlling smart home devices based on user commands.

- Complex Query Resolution: Breaking down a complex user request into a series of function calls to gather necessary data from multiple sources before formulating a comprehensive answer.

- Interactive Decision Trees: Guiding users through a complex decision process by asking targeted questions and executing predefined actions based on their responses.

- Database Interaction: Querying databases to retrieve or update information based on natural language requests.
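For the database case, one common safeguard (an assumption here, not something providers mandate) is to expose a narrow tool with typed arguments rather than letting the model write raw SQL, and to run the query with bound parameters. The sketch below uses an in-memory SQLite table; the `find_orders` tool, its arguments, and the data are all hypothetical.

```python
import json
import sqlite3
from typing import Optional

# Hypothetical in-memory database standing in for a production system.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO orders (customer, status) VALUES (?, ?)",
    [("alice", "shipped"), ("alice", "pending"), ("bob", "shipped")],
)

ALLOWED_STATUSES = {"pending", "shipped", "cancelled"}


def find_orders(customer: str, status: Optional[str] = None) -> list[dict]:
    """Tool implementation: the model supplies arguments, never SQL text."""
    if status is not None and status not in ALLOWED_STATUSES:
        raise ValueError(f"unknown status: {status!r}")
    sql = "SELECT id, status FROM orders WHERE customer = ?"
    params: list = [customer]
    if status is not None:
        sql += " AND status = ?"
        params.append(status)
    # Bound parameters: values are never spliced into the SQL string.
    rows = conn.execute(sql + " ORDER BY id", params).fetchall()
    return [{"id": row[0], "status": row[1]} for row in rows]


# Arguments as a function-calling model might emit them for
# "Which of Alice's orders are still pending?"
tool_args = json.loads('{"customer": "alice", "status": "pending"}')
print(find_orders(**tool_args))  # [{'id': 2, 'status': 'pending'}]
```

Because the customer name is bound as a parameter, an injection-style argument such as `bob' OR '1'='1` is treated as a literal name and simply matches no rows.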

The Verdict: Opt for function calling when the language model must interact with the outside world, fetch data that is not present in its immediate context, or conditionally execute software logic as part of its "thought" process. This capability is key to building agents that can perform actions and adapt to dynamic environments.

Performance, Latency, and Cost Implications: Strategic Deployment Considerations

The architectural choice between structured outputs and function calling has direct and significant implications for deployment economics and user experience.

- Structured Outputs: As a single-turn generation process that constrains the model’s output directly, structured outputs generally offer lower latency. The model completes its task in one go, and the output is immediately available for parsing. This also tends to be more cost-effective, as it typically involves a single API call per request. The reliability and predictability of structured outputs reduce the need for complex error handling and retry mechanisms, further contributing to operational efficiency.

- Function Calling: The multi-turn nature of function calling inherently introduces higher latency. Each function call adds a round trip: the application executes the tool, then sends the result back to the model in another request, which the model must process before it can respond. These steps run sequentially, so they can significantly extend the overall response time. The extra requests also raise costs, since each one typically re-sends the conversation history and tool definitions as input tokens, on top of any charges from the external services themselves. However, for tasks requiring external interaction or dynamic decision-making, the enhanced capabilities often justify these trade-offs.
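A back-of-the-envelope model makes the cost difference concrete. This sketch assumes, hypothetically, that every request re-sends the full conversation (prompt, tool definitions, and all prior turns) as input tokens and ignores prompt caching; the token counts and per-token prices are made-up placeholders, not any provider's actual rates.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    input_tokens: int   # tokens sent in this request (full history)
    output_tokens: int  # tokens generated in this request


def total_cost(turns: list[Turn], in_price: float, out_price: float) -> float:
    """Cost in dollars, given placeholder prices per 1M tokens."""
    tokens_in = sum(t.input_tokens for t in turns)
    tokens_out = sum(t.output_tokens for t in turns)
    return (tokens_in * in_price + tokens_out * out_price) / 1_000_000


# Hypothetical sizes, in tokens.
PROMPT, TOOL_DEFS, CALL, TOOL_RESULT, ANSWER = 500, 300, 30, 200, 150

# Structured output: one request, no tool definitions.
structured = [Turn(PROMPT, ANSWER)]

# Function calling with one tool round trip: the second request re-sends
# everything from the first, plus the tool call and its result.
first = Turn(PROMPT + TOOL_DEFS, CALL)
second = Turn(PROMPT + TOOL_DEFS + CALL + TOOL_RESULT, ANSWER)
function_calling = [first, second]

IN_PRICE, OUT_PRICE = 1.0, 4.0  # placeholder $ per 1M tokens
cost_so = total_cost(structured, IN_PRICE, OUT_PRICE)
cost_fc = total_cost(function_calling, IN_PRICE, OUT_PRICE)
print(f"structured: ${cost_so:.5f} in {len(structured)} call")              # structured: $0.00110 in 1 call
print(f"function calling: ${cost_fc:.5f} in {len(function_calling)} calls")  # function calling: $0.00255 in 2 calls
```

Under these assumptions the function-calling path costs a bit more than twice as much and needs two sequential model calls, each with its own time to first token. The gap widens with every additional tool round trip, because the growing history is re-sent each time.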

Hybrid Approaches and Best Practices: The Blurring Lines of Advanced Architectures

In sophisticated agent architectures, the distinction between structured outputs and function calling can become nuanced, leading to hybrid approaches that leverage the strengths of both.

The Overlap: Modern function calling itself relies on structured output. The model generates a function call’s arguments as structured data (typically JSON) that must match the function’s parameter schema, and some providers (for example, OpenAI with strict mode enabled on a function definition) apply the same constrained decoding to those arguments. Conversely, one can design an agent that exclusively uses structured outputs to return a JSON object describing an action. This JSON can then be interpreted by a deterministic system that executes the described action after the model’s generation is complete. This effectively mimics tool use without incurring the multi-turn latency associated with true function calling.
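The "fake tool use" idea can be sketched as follows: the model's single structured response names an action from a closed set, and ordinary code validates and executes it afterward. The action names, the `model_output` string, and the handler functions are hypothetical; in practice the action set would also be encoded in the response schema (for example as an `enum`) so that constrained decoding cannot produce an unknown action.

```python
import json
from typing import Callable


def create_ticket(title: str, priority: str) -> str:
    """Hypothetical handler; a real one would call an issue tracker."""
    return f"ticket created: {title} [{priority}]"


def escalate(reason: str) -> str:
    """Hypothetical handler; a real one would page a human."""
    return f"escalated: {reason}"


# Deterministic execution layer: a closed set of actions and their handlers.
HANDLERS: dict[str, Callable[..., str]] = {
    "create_ticket": create_ticket,
    "escalate": escalate,
}


def execute(action_json: str) -> str:
    """Runs after generation is complete; the model is never called again."""
    action = json.loads(action_json)
    name = action["action"]
    if name not in HANDLERS:
        raise ValueError(f"unsupported action: {name!r}")
    return HANDLERS[name](**action["args"])


# Stand-in for one structured-output response describing what to do.
model_output = ('{"action": "create_ticket", '
                '"args": {"title": "Login page 500 error", "priority": "high"}}')
print(execute(model_output))  # ticket created: Login page 500 error [high]
```

The trade-off is that the model never sees the action's result, so this pattern suits fire-and-forget steps rather than ones where the answer depends on what the tool returns.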

Architectural Advice:

- Prioritize Structured Outputs for Data Integrity: Whenever the primary goal is to obtain reliable, machine-readable data from the LM, structured outputs should be the first choice. This ensures data quality and simplifies downstream processing.

- Reserve Function Calling for Action and Interaction: Reserve function calling for scenarios where the LM needs to actively interact with external systems, fetch dynamic information, or trigger complex logic.

- Consider the "Fake Tool Use" Pattern: For simple action descriptions that can be handled by a separate, deterministic execution layer, using structured outputs to describe the action can be a more performant and cost-effective alternative to function calling.

- Robust Error Handling: Regardless of the chosen method, implement comprehensive error handling. For function calling, this includes managing potential API failures, unexpected tool outputs, and scenarios where the model might hallucinate function calls.
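One way to apply this to function calling is to wrap tool dispatch so that hallucinated tool names, malformed argument JSON, wrong arguments, and tool exceptions all become an error message returned to the model instead of crashing the agent. This is a sketch under assumptions: `safe_dispatch` and the `get_flight_status` stub are hypothetical, and returning errors as tool results (so the model can retry or explain the failure) is one common design, not a provider requirement.

```python
import json
from typing import Any, Callable


def get_flight_status(flight: str) -> dict:
    """Hypothetical tool stub."""
    if not flight[:2].isalpha():
        raise ValueError("flight numbers start with a two-letter airline code")
    return {"flight": flight, "status": "on time"}


TOOLS: dict[str, Callable[..., Any]] = {"get_flight_status": get_flight_status}


def safe_dispatch(name: str, arguments: str) -> dict:
    """Never raises; returns {"ok": True, "result": ...} or {"ok": False, "error": ...}."""
    if name not in TOOLS:  # hallucinated tool name
        return {"ok": False, "error": f"unknown tool {name!r}; available: {sorted(TOOLS)}"}
    try:
        args = json.loads(arguments)
    except json.JSONDecodeError as exc:  # malformed or truncated JSON
        return {"ok": False, "error": f"arguments are not valid JSON: {exc.msg}"}
    if not isinstance(args, dict):
        return {"ok": False, "error": "arguments must be a JSON object"}
    try:
        return {"ok": True, "result": TOOLS[name](**args)}
    except TypeError as exc:  # usually missing or unexpected arguments
        return {"ok": False, "error": f"bad arguments: {exc}"}
    except Exception as exc:  # failures inside the tool itself
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


print(safe_dispatch("get_flight_status", '{"flight": "BA117"}'))
print(safe_dispatch("book_hotel", "{}"))                          # hallucinated tool
print(safe_dispatch("get_flight_status", '{"flight": '))          # truncated JSON
print(safe_dispatch("get_flight_status", '{"flite": "BA117"}'))   # wrong argument name
```

Returning a structured error keeps the agent loop alive and gives the model enough context to correct its call on the next turn.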

Wrapping Up: Mastering LM Engineering for Autonomous Agents

The field of language model engineering is rapidly evolving from the creation of conversational chatbots to the development of reliable, programmatic, and autonomous agents. Mastering the ability to constrain and direct model outputs is fundamental to this transition.

TL;DR:

- Structured Outputs: Use for pure data formatting and extraction within a single turn. Ensures reliability and low latency.

- Function Calling: Use for enabling agents to interact with external systems, make decisions, and fetch dynamic information across multiple turns.

The Practitioner’s Decision Tree:

When embarking on the development of a new AI feature, consider this streamlined checklist:

- Question 1: Does the task require the LM to fetch information it doesn’t already have or perform an external action?

  - If Yes: Proceed to question 2.

  - If No: Structured outputs are likely the most efficient and reliable solution.

- Question 2: Does the model need the result of that external interaction before it can finish its response (that is, is the interaction part of its "thinking" process)?

  - If Yes: Function calling is the appropriate mechanism.

  - If No: Consider whether structured outputs can describe the desired action for a separate execution layer.
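The two questions can be written down as a small function, for instance to keep the decision explicit in design reviews. The function name, its parameters, and the returned labels are illustrative only.

```python
def choose_mechanism(needs_external: bool, needs_result_to_continue: bool) -> str:
    """Encodes the two-question checklist."""
    # Question 1: is everything the model needs already in its prompt and context?
    if not needs_external:
        return "structured outputs"
    # Question 2: must the model see the external result before it can finish?
    if needs_result_to_continue:
        return "function calling"
    return "consider structured outputs describing the action (executed afterwards)"


# Invoice extraction: all data is in the prompt.
print(choose_mechanism(False, False))  # structured outputs
# "What's the weather in Oslo?": the answer depends on the API response.
print(choose_mechanism(True, True))    # function calling
# "File a ticket for this bug report": the action can run after generation.
print(choose_mechanism(True, False))
```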

Final Thought:

The most effective AI engineers approach function calling as a potent but inherently less predictable capability, one that should be employed judiciously and always surrounded by robust error-handling mechanisms. Conversely, structured outputs should be viewed as the dependable, foundational element that underpins modern AI data pipelines, ensuring consistency and reliability. By understanding and applying these principles, developers can build more sophisticated, performant, and trustworthy AI systems.