Getting Started with Zero-Shot Text Classification

Zero-shot text classification categorizes text without training a model on labeled examples for each target label. This makes text analysis flexible and efficient, particularly when data is scarce, label sets change often, or rapid prototyping is essential. Instead of relying on exhaustive labeled training data, users provide the model with a piece of text and a list of candidate labels. The model then draws on its general understanding of language to determine the most appropriate classification.

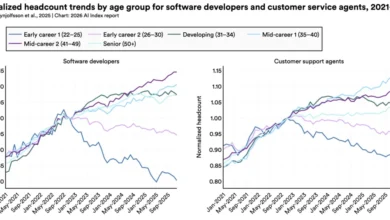

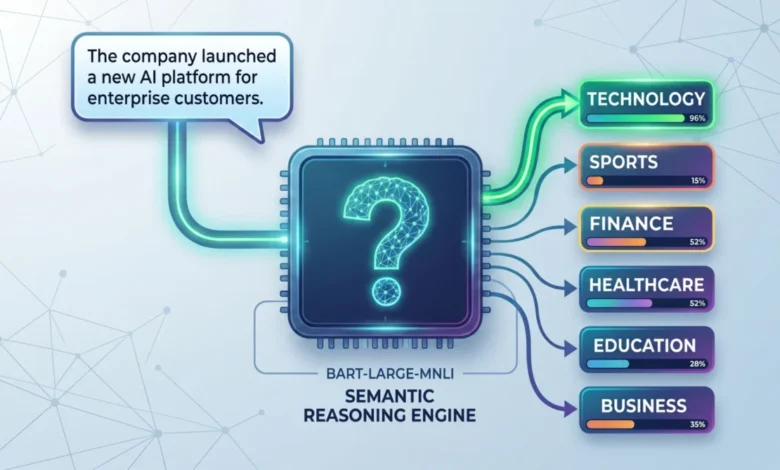

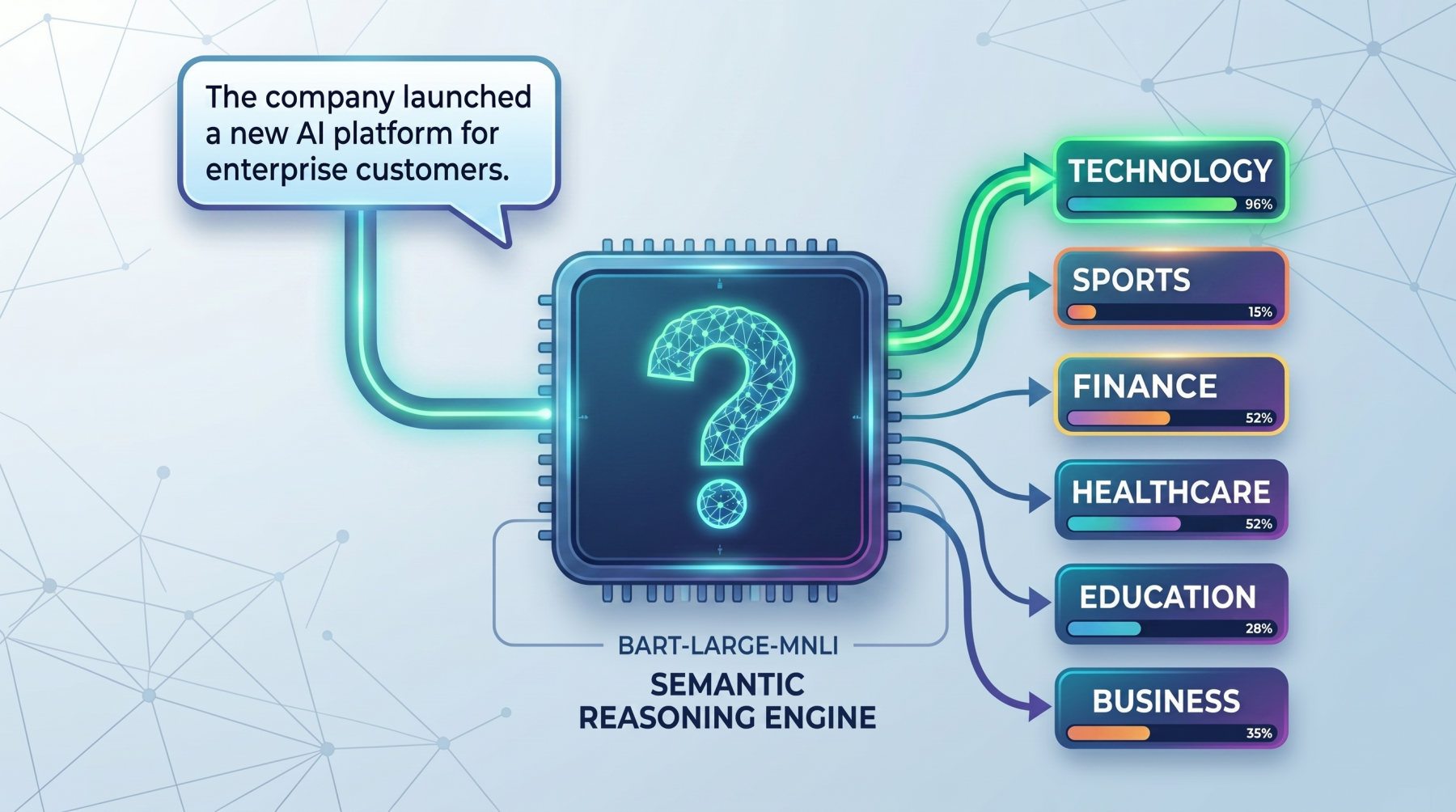

This guide covers the fundamental principles of zero-shot classification and demonstrates its practical application using the facebook/bart-large-mnli transformer model. The method differs from traditional classification by framing the problem as a reasoning task, in which the model assesses the degree to which a text supports a given label. This distinction explains why the approach works across diverse applications, from customer support ticket routing and content moderation to market research and internal document organization.

The Mechanics of Zero-Shot Classification

At its core, zero-shot text classification operates on a principle fundamentally different from conventional supervised learning. Rather than learning a direct mapping between text patterns and discrete category identifiers, the model interprets each candidate label as a natural language statement. It then evaluates how well the input text corroborates or supports each of these statement-labels. This nuanced approach allows the model to generalize to new categories it has not explicitly been trained on, provided those categories can be articulated in a way that the model can understand.

Consider an illustrative example. If the input text is: "The company launched a new AI platform for enterprise customers," and the candidate labels are "technology," "sports," and "finance," the zero-shot model conceptually transforms these labels into statements. It might internally evaluate hypotheses such as:

- "This text is about technology."

- "This text is about sports."

- "This text is about finance."

The model then proceeds to score the input text against each of these hypotheses, determining which statement is most strongly supported. In this case, the text’s mention of an "AI platform" and "enterprise customers" would lead the model to assign the highest score to the "technology" label. This method proves invaluable for scenarios requiring swift decision-making without extensive data collection, such as:

- Customer Support: Automatically routing incoming tickets to the correct department (e.g., "billing issue," "technical support," "account inquiry").

- Content Moderation: Identifying and flagging inappropriate content (e.g., "spam," "harassment," "hate speech").

- News Aggregation: Categorizing articles into broad or niche topics.

- Market Research: Analyzing customer feedback to identify emerging trends or product sentiments.

The effectiveness of zero-shot classification hinges on the clarity and semantic richness of the candidate labels. Vague labels, such as "money," are less likely to yield precise results compared to more descriptive ones like "billing issue" or "investment opportunity." This is because more specific labels provide the model with richer linguistic cues to draw upon. Therefore, careful consideration of label wording is paramount for achieving optimal performance across various domains, including news categorization, customer intent detection, moderation systems, and business workflow management. This approach fundamentally reframes classification as a natural language inference or reasoning problem, making it highly adaptable for rapid prototyping, low-resource environments, and nascent domains where labeled data is not yet available.
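The label-to-hypothesis step and the scoring described in this section can be sketched in a few lines of plain Python. This is a minimal illustration, not the pipeline's actual code: the helper names (`build_hypotheses`, `rank_labels`) are hypothetical, and the entailment logits are made-up numbers standing in for what an NLI model would output for each text and hypothesis pair.

```python
import math


def build_hypotheses(labels, template="This text is about {}."):
    """Turn each candidate label into a natural-language hypothesis."""
    return [template.format(label) for label in labels]


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def rank_labels(labels, entailment_logits):
    """Normalize per-label entailment logits and sort labels by score."""
    scores = softmax(entailment_logits)
    return sorted(zip(labels, scores), key=lambda pair: pair[1], reverse=True)


labels = ["technology", "sports", "finance"]
hypotheses = build_hypotheses(labels)

# Hypothetical entailment logits, one per hypothesis. A real NLI model would
# compute these from the (text, hypothesis) pairs; these numbers are made up.
made_up_logits = [3.2, -1.5, 0.4]

ranking = rank_labels(labels, made_up_logits)
for hypothesis in hypotheses:
    print(hypothesis)
for label, score in ranking:
    print(f"{label}: {score:.3f}")
```

Because the scores in this sketch are normalized across the candidate labels, adding or removing a label changes every score, which is worth keeping in mind when comparing runs that use different label sets.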

Practical Implementation: Leveraging Pretrained Models

To operationalize zero-shot text classification, readily available libraries and pretrained models offer a streamlined pathway. The transformers library by Hugging Face provides a high-level pipeline abstraction that simplifies the process of loading and using sophisticated NLP models.

1. Setting Up the Environment

The initial step involves installing the necessary Python libraries. This typically includes torch for tensor computations and transformers for accessing pretrained models.

    pip install torch transformers

2. Loading the Zero-Shot Classification Pipeline

Once the dependencies are installed, a zero-shot classification pipeline can be instantiated. The facebook/bart-large-mnli model is a popular choice due to its strong performance on Natural Language Inference (NLI) tasks, which are foundational to zero-shot classification.

    from transformers import pipeline

    # Load a zero-shot classification pipeline backed by an NLI model.
    classifier = pipeline(
        "zero-shot-classification",
        model="facebook/bart-large-mnli",
    )

This code snippet initializes a pipeline specifically designed for zero-shot classification. By specifying model="facebook/bart-large-mnli", we instruct the pipeline to load this particular checkpoint, a BART model fine-tuned on the MultiNLI (MNLI) natural language inference dataset. This fine-tuning teaches the model to judge whether one sentence entails or contradicts another, which lets it assess the relationship between a given text and a hypothesis built from a candidate label. The pipeline abstracts away model loading, tokenization, and inference, providing a simple interface for practical use.
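If a GPU is available, the pipeline can be placed on it through the `device` argument, where -1 means CPU and a non-negative integer selects a CUDA device. The sketch below assumes that convention; `pick_device` and `load_classifier` are hypothetical helper names for illustration, not part of transformers.

```python
def pick_device() -> int:
    """Return 0 for the first CUDA GPU if one is usable, otherwise -1 (CPU)."""
    try:
        import torch  # imported lazily so the helper also works without torch
    except ImportError:
        return -1
    return 0 if torch.cuda.is_available() else -1


def load_classifier(model_name: str = "facebook/bart-large-mnli"):
    """Build the zero-shot pipeline on the selected device.

    Calling this downloads the model weights on first use.
    """
    from transformers import pipeline

    return pipeline(
        "zero-shot-classification",
        model=model_name,
        device=pick_device(),
    )


device = pick_device()
print("Using GPU 0" if device == 0 else "Using CPU")
```

Calling `load_classifier()` returns an object that can be used in the same way as the `classifier` variable in the examples in this guide.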

3. Basic Zero-Shot Classification Example

A straightforward application involves providing a piece of text and a list of candidate labels. The pipeline then returns the labels ranked by their probability of matching the text.

Input Text: "This tutorial explains how transformer models are used in NLP."

Candidate Labels: ["technology", "health", "sports", "finance"]

    text = "This tutorial explains how transformer models are used in NLP."
    candidate_labels = ["technology", "health", "sports", "finance"]

    result = classifier(text, candidate_labels)

    # The pipeline returns labels sorted by descending score.
    print(f"Top prediction: {result['labels'][0]} ({result['scores'][0]:.2%})")

Expected Output:

    Top prediction: technology (96.52%)

In this instance, the model identifies "technology" as the most relevant label, given the text’s focus on transformer models and Natural Language Processing (NLP), which are core components of modern technology. The score of 96.52% is normalized across the four candidate labels, so it indicates that "technology" fits far better than the alternatives rather than an absolute probability that the label is correct.
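The pipeline returns a dictionary with the keys `sequence`, `labels`, and `scores`, with labels sorted by descending score. In practice it can help to treat a weak top score as "no confident match". A minimal sketch, assuming a hand-picked cutoff: the `top_prediction` helper, the 0.5 cutoff, and the "uncertain" fallback label are illustrative choices, and the two result dictionaries are hand-written stand-ins rather than real model output.

```python
def top_prediction(result, min_score=0.5, fallback="uncertain"):
    """Return (label, score) for the best label.

    If the best score is below min_score, the fallback label is returned
    instead, together with the original best score.
    """
    best_label = result["labels"][0]
    best_score = result["scores"][0]
    if best_score < min_score:
        return fallback, best_score
    return best_label, best_score


# Hand-written stand-ins shaped like the pipeline's output dictionary.
confident = {
    "sequence": "This tutorial explains how transformer models are used in NLP.",
    "labels": ["technology", "finance", "health", "sports"],
    "scores": [0.90, 0.05, 0.03, 0.02],
}
ambiguous = {
    "sequence": "We met on Tuesday.",
    "labels": ["finance", "technology", "health", "sports"],
    "scores": [0.35, 0.30, 0.20, 0.15],
}

print(top_prediction(confident))
print(top_prediction(ambiguous))
```

A suitable cutoff depends on the label set and the data, so it is best chosen by inspecting scores on a small sample of real inputs.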

4. Multi-Label Classification

In many real-world scenarios, a single piece of text can logically belong to multiple categories. The zero-shot classification pipeline accommodates this through the multi_label parameter. When it is set to True, each candidate label is scored independently rather than in competition with the others, so the scores no longer sum to 1, and a threshold can be applied to select the labels that meet a certain confidence level.

Input Text: "The company launched a health app and announced strong business growth."

Candidate Labels: ["technology", "healthcare", "business", "travel"]

    text = "The company launched a health app and announced strong business growth."
    candidate_labels = ["technology", "healthcare", "business", "travel"]

    # multi_label=True scores each label independently of the others.
    result = classifier(
        text,
        candidate_labels,
        multi_label=True,
    )

    # Keep only the labels whose score meets the threshold.
    threshold = 0.50
    top_labels = [
        (label, score)
        for label, score in zip(result["labels"], result["scores"])
        if score >= threshold
    ]

    print("Top labels:", ", ".join(f"{label} ({score:.2%})" for label, score in top_labels))

Expected Output:

    Top labels: healthcare (99.41%), technology (99.06%), business (98.15%)

The output demonstrates the model’s ability to recognize the multifaceted nature of the input text. It assigns high scores to "healthcare" (due to "health app"), "technology" (implicitly related to app development and launch), and "business" (due to "strong business growth"), while "travel" falls below the threshold and is dropped. This multi-label capability is critical for nuanced text analysis where categories are not mutually exclusive.
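In multi-label mode, each label's score comes from comparing only the entailment and contradiction logits for that label's hypothesis, ignoring the neutral class. The sketch below illustrates that per-label scoring in plain Python. It assumes the logit order used by facebook/bart-large-mnli (contradiction, neutral, entailment); the function names are hypothetical and the logits are made-up values, not real model output.

```python
import math

# Logit index order assumed for facebook/bart-large-mnli.
CONTRADICTION, NEUTRAL, ENTAILMENT = 0, 1, 2


def entailment_probability(nli_logits):
    """Softmax over contradiction vs. entailment only; neutral is ignored."""
    contradiction = nli_logits[CONTRADICTION]
    entailment = nli_logits[ENTAILMENT]
    peak = max(contradiction, entailment)
    exp_c = math.exp(contradiction - peak)
    exp_e = math.exp(entailment - peak)
    return exp_e / (exp_c + exp_e)


def multi_label_scores(labels, logits_per_label, threshold=0.5):
    """Score each label independently and keep those at or above threshold."""
    scored = [
        (label, entailment_probability(logits))
        for label, logits in zip(labels, logits_per_label)
    ]
    kept = [(label, score) for label, score in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


labels = ["technology", "healthcare", "business", "travel"]

# Made-up [contradiction, neutral, entailment] logits, one row per label.
made_up_logits = [
    [-2.0, 0.1, 3.0],
    [-2.5, 0.0, 3.5],
    [-1.5, 0.2, 2.5],
    [3.0, 0.5, -2.0],
]

selected = multi_label_scores(labels, made_up_logits)
for label, score in selected:
    print(f"{label}: {score:.2%}")
```

Since every label is scored on its own, several labels can score close to 1 at once, which is why a threshold rather than a single top pick is the natural way to read multi-label results.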

5. Customizing the Hypothesis Template

The default hypothesis template used by the pipeline, "This example is {}.", is generally effective, but custom templates can often refine results, especially when dealing with specialized domain terminology or when aiming for more natural language alignment. By providing a custom hypothesis_template, users can guide the model’s reasoning process more precisely. The {} placeholder in the template is where the candidate label is inserted.

Input Text: "The user cannot access their account and keeps seeing a login error."

Candidate Labels: ["technical support", "billing issue", "feature request"]

Custom Hypothesis Template: "This text is about {}."

    text = "The user cannot access their account and keeps seeing a login error."
    candidate_labels = ["technical support", "billing issue", "feature request"]

    # The {} placeholder is replaced by each candidate label in turn.
    result = classifier(
        text,
        candidate_labels,
        hypothesis_template="This text is about {}.",
    )

    for label, score in zip(result["labels"], result["scores"]):
        print(f"{label}: {score:.4f}")

Expected Output:

    technical support: 0.7349
    feature request: 0.1683
    billing issue: 0.0968

In this example, the custom template explicitly frames the evaluation as a topic identification task. The model correctly prioritizes "technical support" as the most fitting category for a user experiencing login issues. This demonstrates how tailoring the hypothesis can help the model align with specific semantic expectations in domain-specific contexts. The model’s score for "technical support" is well above those for "feature request" and "billing issue," reflecting the clear problem described in the text.
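Each label is inserted into the template with Python string formatting, so a template without a {} placeholder would produce the same hypothesis for every label. A small check can catch that before any model call. This is a sketch under stated assumptions: `validate_template` and `preview_hypotheses` are hypothetical helpers, and the second template is an invented alternative shown only for comparison.

```python
def validate_template(template: str) -> None:
    """Raise ValueError if the template has no usable {} placeholder."""
    try:
        formatted = template.format("probe-label")
    except (IndexError, KeyError) as exc:
        # Raised for templates such as "{} and {}" or "{name}".
        raise ValueError(f"Template cannot be formatted: {template!r}") from exc
    if formatted == template:
        raise ValueError(f"Template is missing a {{}} placeholder: {template!r}")


def preview_hypotheses(labels, template):
    """Return the hypotheses a template would generate for the given labels."""
    validate_template(template)
    return [template.format(label) for label in labels]


labels = ["technical support", "billing issue", "feature request"]
templates = [
    "This text is about {}.",
    "The ticket category is {}.",  # invented alternative for comparison
]

for template in templates:
    for hypothesis in preview_hypotheses(labels, template):
        print(hypothesis)
```

Reading the generated hypotheses aloud is a quick sanity check: if a sentence sounds unnatural to a person, rewording the template or the label is usually worth trying.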

The Broader Implications of Zero-Shot Text Classification

The advent and refinement of zero-shot text classification mark a paradigm shift in how we approach automated text analysis. Historically, deploying a text classifier required a substantial investment in data collection, annotation, and model training for each specific task. This process could be time-consuming, costly, and impractical for rapidly evolving domains or for organizations with limited NLP expertise and resources.

Zero-shot classification liberates users from these constraints. Its ability to classify text based on descriptive labels alone opens up a wealth of new possibilities:

- Accelerated Innovation: Startups and research teams can quickly prototype and validate ideas without waiting for extensive data labeling. This dramatically shortens the feedback loop for product development and experimental research.

- Adaptability to Change: In dynamic environments where categories frequently shift, such as social media trends or evolving customer needs, zero-shot models can adapt without requiring complete retraining. This agility is crucial for staying relevant.

- Democratization of NLP: By lowering the barrier to entry, zero-shot classification empowers a wider range of users and organizations to leverage advanced NLP capabilities without needing deep machine learning expertise.

- Handling Low-Resource Languages and Domains: For languages or specialized fields with scarce annotated data, zero-shot approaches offer a viable path to classification, bridging critical gaps in digital information processing.

The underlying mechanism, where models infer relationships based on semantic understanding rather than memorized patterns, is the key to its power. This is why models fine-tuned on large-scale natural language inference datasets, such as bart-large-mnli (fine-tuned on MultiNLI), excel in this domain. They have learned to discern subtle meanings and contextual nuances, enabling them to generalize effectively.

However, it is crucial to acknowledge that the quality of zero-shot classification is intrinsically linked to the quality of the provided labels. Clear, unambiguous, and semantically rich labels lead to more accurate predictions. For instance, when classifying customer feedback, labels like "product defect," "slow performance," or "user interface confusion" will yield better results than generic terms like "problem" or "issue." Similarly, for content moderation, specific labels like "incitement to violence" or "misinformation about public health" are more effective than broad categories.
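One way to keep descriptive labels for the model while still using short identifiers downstream is to hold both in a mapping. The sketch below is illustrative only: the `LABEL_TO_TAG` mapping, the tag names, and the `to_tag` helper are hypothetical, and the result dictionary is a hand-written stand-in shaped like the pipeline's output.

```python
# Hypothetical mapping: descriptive label given to the model -> internal tag.
LABEL_TO_TAG = {
    "product defect": "defect",
    "slow performance": "perf",
    "user interface confusion": "ux",
}


def candidate_labels(mapping):
    """Descriptive labels to pass to the classifier, in a stable order."""
    return list(mapping)


def to_tag(result, mapping):
    """Translate the top-ranked descriptive label back to its internal tag."""
    return mapping[result["labels"][0]]


# Hand-written stand-in for a pipeline result, not real model output.
fake_result = {
    "sequence": "The settings page takes ten seconds to load.",
    "labels": ["slow performance", "product defect", "user interface confusion"],
    "scores": [0.80, 0.15, 0.05],
}

print(candidate_labels(LABEL_TO_TAG))
print(to_tag(fake_result, LABEL_TO_TAG))
```

This keeps label wording free to change as it is refined, without touching whatever code consumes the tags.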

While zero-shot classification offers a powerful starting point, it is not a panacea. For critical applications that demand very high precision, or for domains with abundant labeled data, fine-tuning a model on task-specific datasets may still yield superior performance. Nevertheless, as a tool for rapid exploration, flexible categorization, and initial deployment, zero-shot text classification represents a significant step forward. Its continued development and adoption are poised to unlock new efficiencies and insights across numerous industries and research endeavors.