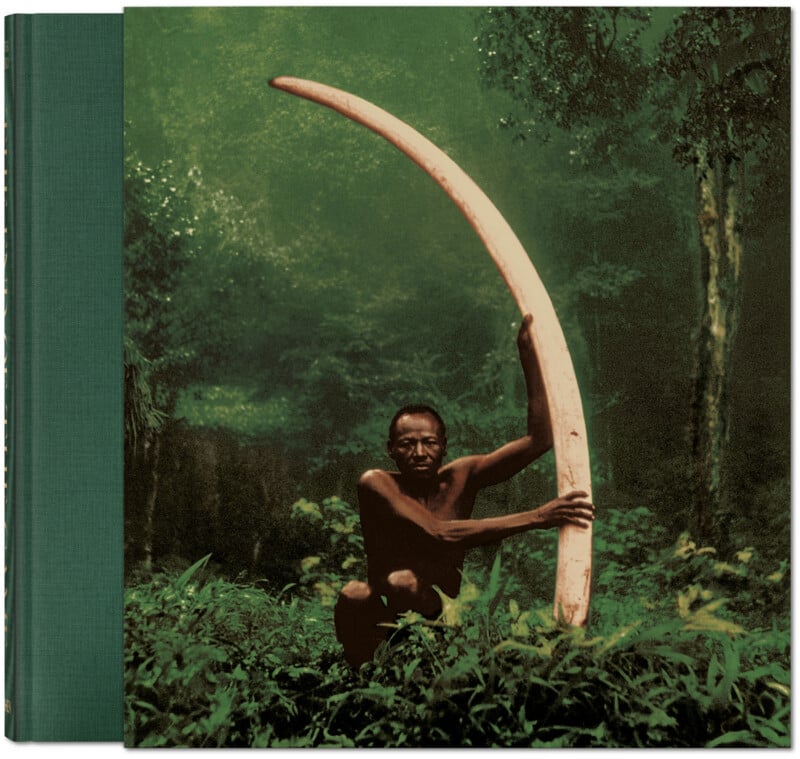

Peter Beard: The End of the Game

Peter Beard, a figure whose life and work blurred the lines between art, photography, and the raw realities of the African wilderness, left an indelible mark on the world of conservation and artistic expression. His seminal book, The End of the Game, stands as a critical chronicle of the ecological devastation that began to unfold across Africa in the mid-20th century, particularly impacting iconic species like elephants, rhinos, and hippos. Initially published in 1965 and subsequently updated, the work is a profound visual testament to the escalating conflict between human expansion and the natural world, documented through Beard’s unique lens and extensive field research.

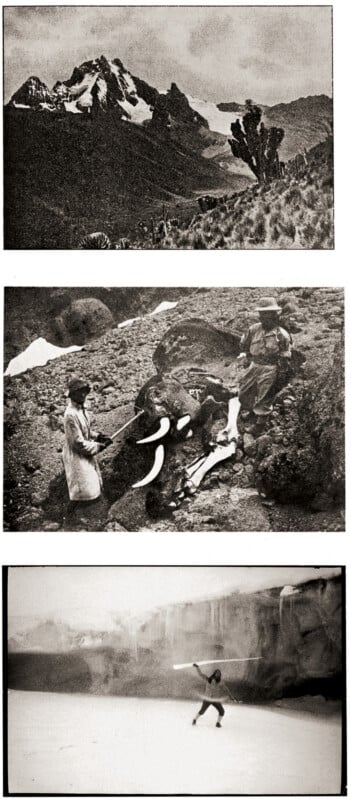

Beard’s approach was far from conventional. He meticulously compiled his observations, photographs, and writings over a span of two decades, weaving together a narrative that was as much a personal account as a stark environmental exposé. The End of the Game is more than just a collection of striking images; it is a deeply researched document that incorporates historical photographs from early explorers, missionaries, and fellow photographers. This historical layering, combined with poignant quotations from those who witnessed Africa’s changing face, amplifies the urgency and gravity of the ecological crisis Beard sought to convey. His work served as a powerful early warning against the unchecked human impact on fragile ecosystems, particularly in areas like Kenya’s Tsavo National Park and various regions of Uganda during the 1960s and 1970s.

The Genesis of a Landmark Work: Documenting Africa’s Ecological Transformation

Peter Beard’s engagement with Africa began in earnest in the late 1950s. Born into a prominent New York family, his life was characterized by a dual existence, navigating the sophisticated circles of high society while simultaneously immersing himself in the untamed landscapes of the continent. This duality profoundly shaped his artistic vision. He spent significant periods in Kenya, particularly in and around Tsavo National Park, a region that would become central to his most impactful work.

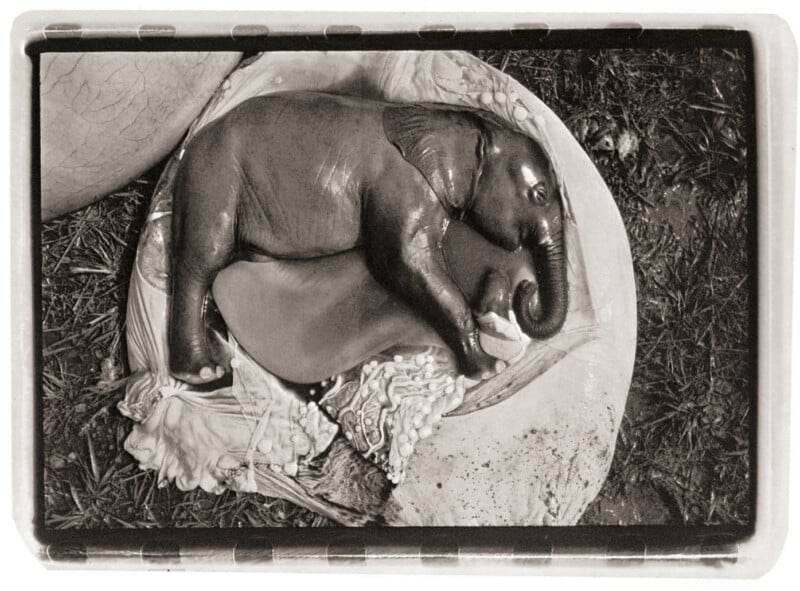

The End of the Game emerged from Beard’s profound concern over the dramatic decline in elephant populations, a phenomenon he attributed directly to human intervention and unsustainable land use practices. His early photographic work captured the majestic presence of these animals, but as the decades progressed, his lens also focused on the stark evidence of their demise. The book’s power lies in its unflinching portrayal of this ecological imbalance. Beard didn’t shy away from the difficult realities; his images documented wildlife crowded onto ranges that could no longer support it, the resulting scarcity of resources, and the deaths that followed.

The 1960s and 1970s were a critical juncture for many African ecosystems. As human populations grew and agricultural frontiers expanded, vast tracts of land were converted for farming and ranching. This encroachment led to habitat fragmentation, reduced grazing areas, and increased competition for water resources. For large herbivores like elephants and rhinos, this meant a drastic reduction in the carrying capacity of their habitat, leading to starvation, disease, and heightened mortality rates. Beard’s meticulous documentation captured this unfolding tragedy, presenting a visual narrative that was both deeply disturbing and undeniably compelling.

Beard’s Unique Artistic Vision and Method

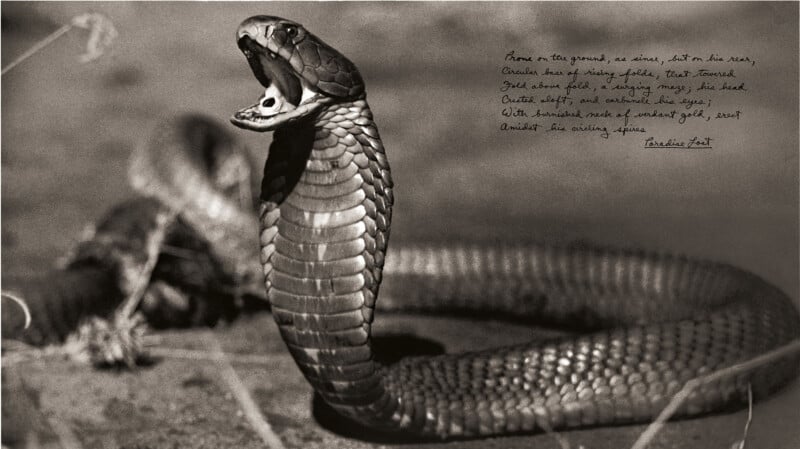

Peter Beard was not a traditional wildlife photographer. His approach was avant-garde, characterized by a fusion of photography, collage, drawing, and text. He treated his photographs as raw material, often altering them with paint, ink, and handwritten annotations. This experimental technique transformed his images into densely layered artifacts, blurring the boundaries between objective documentation and subjective interpretation. His work became a form of visual diary, reflecting not only the external world but also his internal dialogue and emotional response to the unfolding environmental crisis.

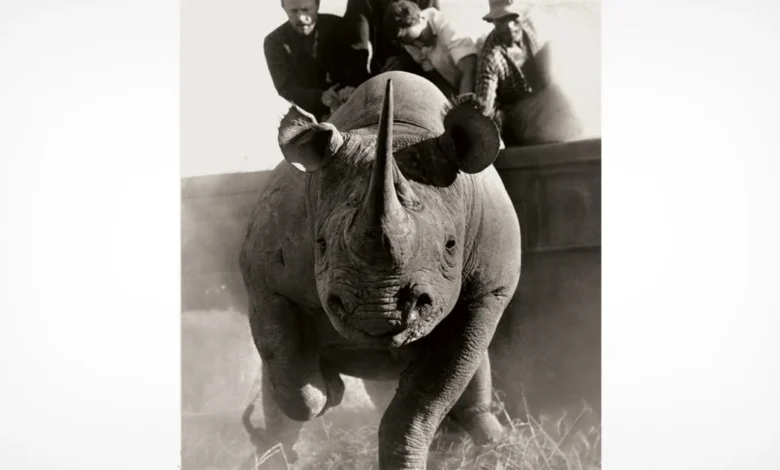

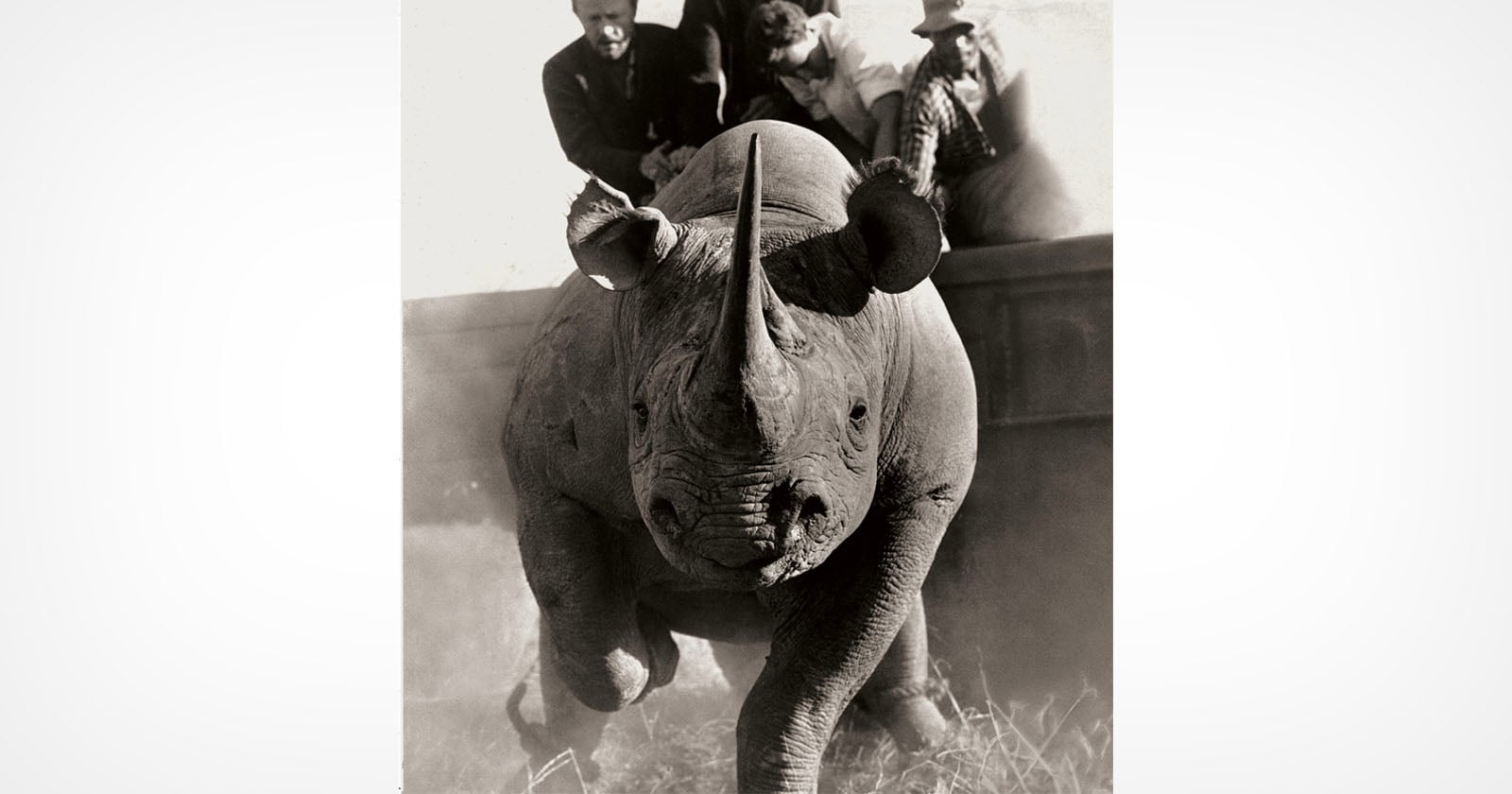

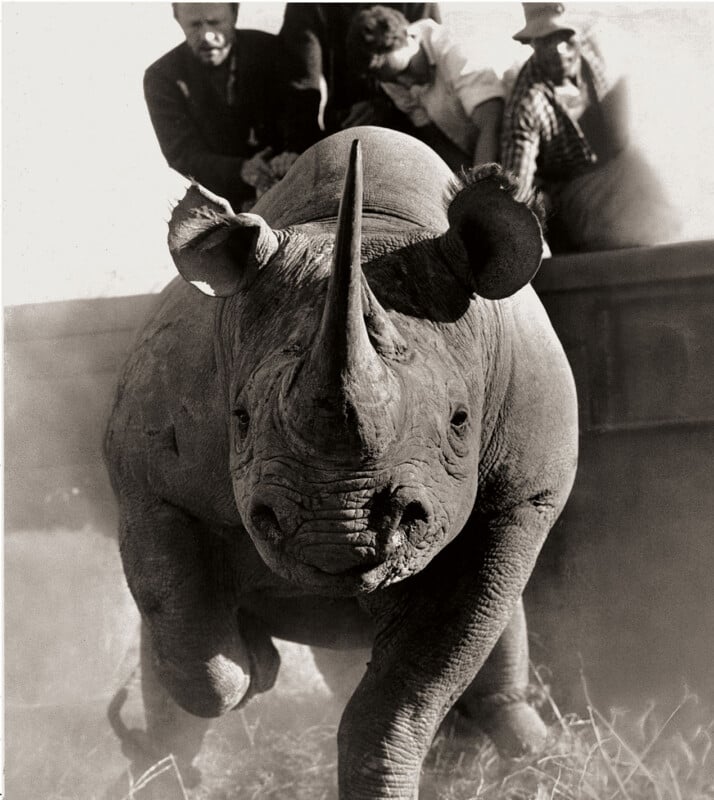

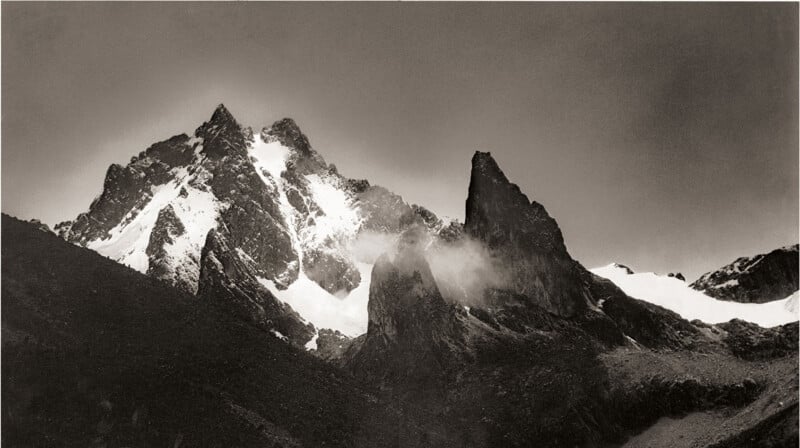

His methods were as intense as his subject matter. Beard would often spend extended periods in the field, living alongside the wildlife he was documenting. He developed a deep, almost visceral connection with the African landscape and its inhabitants. This immersion allowed him to capture moments of raw intimacy and power, from the explosive charge of a rhino to the quiet, poignant stillness of a lioness feeding on a carcass. The black-and-white aesthetic he predominantly employed lent a timeless and dramatic quality to his images, emphasizing their stark beauty and the gravity of their subject.

The inclusion of historical images within The End of the Game was a deliberate choice by Beard. By juxtaposing contemporary photographs with those from earlier eras, he aimed to highlight the profound changes that had occurred over time. These historical images often depicted a more abundant and seemingly untouched Africa, serving as a visual counterpoint to the encroaching desolation that Beard witnessed. This technique not only provided historical context but also underscored the narrative of loss and transformation that is central to the book.

The Impact of The End of the Game

When The End of the Game was first released, it sent ripples through the art world and conservation circles. It challenged conventional notions of documentary photography, pushing the boundaries of what was considered acceptable or even artistic. Beard’s bold use of collage and mixed media, combined with his unflinching portrayal of environmental degradation, resonated with a growing awareness of ecological issues.

The book is credited with playing a significant role in raising early awareness about the plight of African wildlife and the broader implications of human impact on natural environments. Its powerful imagery served as a wake-up call, prompting discussions about conservation strategies and the ethical responsibilities of humanity towards the natural world. Beard’s work provided a visual language for the environmental movement, making complex ecological issues accessible and emotionally resonant for a wider audience.

Beyond its conservation impact, The End of the Game also redefined the possibilities of artistic expression within documentary photography. Beard’s innovative approach inspired a generation of artists and photographers to experiment with form and content, encouraging a more personal and integrated approach to visual storytelling. His work demonstrated that documentary photography could be both a tool for social and environmental commentary and a powerful form of artistic creation.

Peter Beard: A Life Lived on the Edge

Peter Beard’s life was as complex and captivating as his art. Born in 1938 into a family of considerable wealth and influence, he eschewed a conventional path, drawn instead to the allure of adventure and the raw beauty of the wild. His early education included stints at Yale University, where he studied art history, but it was his travels and experiences in Africa that truly shaped his destiny.

In the mid-1990s, Beard’s life took a dramatic and perilous turn. In 1996, he was severely injured after being trampled by an elephant in Kenya. The attack nearly cost him his life and underscored the profound and often dangerous relationship he had with the wildlife he so passionately documented. He survived, but the incident left him with lasting physical challenges and a deeper, perhaps more somber, understanding of the wild.

His social life was legendary, characterized by a flamboyant and often chaotic lifestyle that orbited figures in the art, fashion, and celebrity worlds of New York, Paris, and London. He cultivated an image of a wild man, a bohemian artist living on the fringes of polite society. His personal diaries, often filled with sketches, clippings, and handwritten prose, were an extension of his artistic practice, serving as a raw and unfiltered record of his thoughts, experiences, and obsessions. These diaries, much like his photographic collages, were intensely personal, often incorporating elements like dried blood, insect specimens, and human hair, further blurring the lines between art, autobiography, and the visceral realities of life and death in the wild.

Peter Beard passed away in 2020 at the age of 82, following a period of declining health. His death marked the end of an era for many who were captivated by his unique vision and larger-than-life persona. He left behind a rich and complex legacy, one that continues to inspire awe and provoke thought about our relationship with the natural world and the enduring power of art to bear witness to its struggles.

Enduring Relevance: A New Edition of a Classic

The continued resonance of Peter Beard’s work is evident in Taschen’s recent new edition of the book, published under the title Peter Beard. The End of the Game. This release ensures that his groundbreaking vision and urgent message are accessible to new generations of readers and enthusiasts. The book, a comprehensive collection of his work on the ecological crisis in Africa, remains a vital resource for understanding the history of conservation and the artistic innovations that have accompanied it.

The enduring appeal of The End of the Game lies not only in its historical significance but also in its timeless relevance. The ecological challenges that Peter Beard documented in the mid-20th century—habitat loss, species decline, and the impact of human activity—remain pressing issues today. His work serves as a powerful reminder of the fragility of our planet’s ecosystems and the urgent need for continued vigilance and action.

The photographs within the book offer a glimpse into a world that has irrevocably changed. Images of vast herds of elephants, once a common sight, now evoke a sense of nostalgia for a lost abundance. The stark depictions of animal carcasses, a consequence of overpopulation and resource scarcity, serve as somber memorials to the environmental toll of unchecked human expansion. Yet, amidst the tragedy, Beard’s work also captures the raw, untamed beauty of Africa and its magnificent wildlife, a beauty that he fought to preserve through his art and activism.

The End of the Game is more than a historical document; it is a call to awareness, a testament to the power of visual storytelling, and a profound meditation on the complex and often fraught relationship between humanity and the natural world. Peter Beard’s legacy, cemented by this enduring work, continues to challenge and inspire, urging us to confront the realities of our impact on the planet and to champion the preservation of its wild places and creatures.