The 2026 Stanford AI Index Report: A Deep Dive into the Accelerating World of Artificial Intelligence

The world of artificial intelligence is a whirlwind of conflicting narratives, often characterized by breathless hype and dire warnings. Amidst claims of an impending AI gold rush, the specter of job displacement, and pronouncements of AI’s limitations, the 2026 AI Index from Stanford University’s Institute for Human-Centered Artificial Intelligence offers a crucial, data-driven assessment of the field’s rapid evolution. This comprehensive annual report card cuts through the noise, providing a detailed look at the state of AI development, adoption, and its multifaceted societal impacts.

AI’s Unprecedented Acceleration and Growing Pains

Contrary to predictions of development hitting a plateau, the 2026 AI Index reveals that leading AI models continue to achieve remarkable advancements. The report highlights an adoption rate for AI that outpaces that of the personal computer and the internet, signaling a profound shift in technological integration. AI companies are seeing revenue growth unmatched in previous tech booms, but this surge is accompanied by staggering investments, with hundreds of billions of dollars being poured into data centers and advanced semiconductor chips. This rapid progress, however, is creating significant challenges: the benchmarks used to measure AI performance, the policies designed to govern it, and the job market are all struggling to keep pace. AI is advancing at breakneck speed, leaving many sectors of society playing catch-up.

This accelerated development comes with a substantial environmental and logistical cost. The global network of AI data centers now consumes an estimated 29.6 gigawatts of power, a figure comparable to the peak demand of the entire state of New York. Running a single advanced model, such as OpenAI’s GPT-4o, may consume more water each year than 1.2 million people need for drinking. Furthermore, the supply chain for the specialized chips essential for AI development is alarmingly fragile. The United States hosts a majority of the world’s AI data centers, yet the fabrication of nearly every leading AI chip is concentrated in the hands of a single Taiwanese company, TSMC, underscoring a critical geopolitical and supply chain vulnerability.
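To put the 29.6-gigawatt figure in annual terms, the short sketch below converts power to energy. It assumes, purely for illustration, that the draw is sustained continuously over a non-leap year; the report figure may describe capacity rather than average consumption, in which case the result is an upper bound. The variable names are hypothetical.

```python
# Convert a sustained power draw (GW) into annual energy (TWh).
# Assumption (not from the report): the 29.6 GW is drawn continuously all year.
POWER_GW = 29.6          # estimate cited in the article
HOURS_PER_YEAR = 24 * 365  # non-leap year

energy_gwh = POWER_GW * HOURS_PER_YEAR  # gigawatt-hours per year
energy_twh = energy_gwh / 1000          # terawatt-hours per year

print(f"{energy_twh:.1f} TWh per year under the continuous-draw assumption")
```

Under that assumption the total comes to roughly 259 TWh per year.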

The data presented in the AI Index paints a picture of a technology evolving at a pace that outstrips our current capacity for effective management and oversight. The report’s key findings offer a granular understanding of this complex landscape.

The US and China: A Tightening Race in AI Supremacy

In a highly competitive and geopolitically charged arena, the United States and China are now nearly indistinguishable in terms of AI model performance. This assessment is based on data from Arena, a community-driven platform that allows users to compare the outputs of large language models (LLMs) when presented with identical prompts. In early 2023, OpenAI’s ChatGPT held a clear lead, but this advantage eroded throughout 2024 with the release of competing models from Google and Anthropic. By February 2025, R1, an AI model developed by the Chinese lab DeepSeek, briefly matched the performance of the leading US model. As of March 2026, Anthropic has emerged as the frontrunner, closely followed by xAI, Google, and OpenAI. Models from Chinese developers such as DeepSeek and Alibaba now trail only modestly. With the top AI models performing at such similar levels, the competitive battleground is shifting to factors such as cost, reliability, and practical, real-world applicability.

- Key Performance Trends: The Arena platform’s data from May 2023 to January 2026 shows a consistent upward trend in the performance of top AI models. While US-based models from Anthropic, xAI, Google, and OpenAI currently lead, the gap between them and models from Chinese developers such as Alibaba and DeepSeek has narrowed significantly. Meta trails slightly behind these frontrunners. This tight clustering indicates a highly competitive development environment.
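To illustrate how head-to-head comparisons on identical prompts can be turned into a ranking, here is a minimal sketch of an Elo-style rating update. This is an assumption for illustration only: the article does not describe Arena's actual scoring method, and the model names, starting rating of 1000, and K-factor of 32 are all hypothetical.

```python
# Elo-style ratings from pairwise preference votes (illustrative sketch only;
# not a description of how Arena actually computes its scores).

def expected_score(rating_a: float, rating_b: float) -> float:
    """Modeled probability that A is preferred over B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))

def update_ratings(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one comparison."""
    delta = k * ((1.0 if a_won else 0.0) - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta

# Hypothetical votes: (model A, model B, did the user prefer A?)
votes = [
    ("model_x", "model_y", True),
    ("model_y", "model_z", True),
    ("model_x", "model_z", True),
]

ratings: dict[str, float] = {}
for a, b, a_won in votes:
    ra, rb = ratings.get(a, 1000.0), ratings.get(b, 1000.0)
    ratings[a], ratings[b] = update_ratings(ra, rb, a_won)

# Print models from highest to lowest rating.
for name, rating in sorted(ratings.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {rating:.1f}")
```

Because each update moves the two ratings by equal and opposite amounts, closely matched models end up with closely clustered ratings, which is the pattern the article describes among the frontrunners.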

The AI Index report also delineates distinct advantages for each nation. The United States possesses more powerful AI models, greater access to capital, and an estimated 5,427 data centers, more than ten times the number in any other country. Conversely, China leads in AI research publications, patent filings, and advancements in robotics.

As the competition intensifies, leading AI companies such as OpenAI, Anthropic, and Google have increasingly opted to withhold details about their model training processes, including code, parameter counts, and dataset sizes. This growing lack of transparency is a significant concern for independent researchers. Yolanda Gil, a computer scientist at the University of Southern California and a co-author of the report, stated, "We don’t know a lot of things about predicting model behaviors." This opacity hinders the crucial work of studying and implementing safety measures for AI models, raising questions about accountability and future development trajectories.

AI Models: Surpassing Human Capabilities in Specific Domains

Despite earlier concerns about development reaching an impasse, AI models continue to demonstrate rapid and significant improvements. In certain benchmarks designed to assess advanced capabilities, these models now meet or exceed the performance of human experts in areas such as PhD-level science, mathematics, and language comprehension. The SWE-bench Verified benchmark, which evaluates AI models in software engineering tasks, saw top scores escalate from approximately 60% in 2024 to nearly 100% in 2025. By 2025, AI systems were capable of independently generating weather forecasts.

"I am stunned that this technology continues to improve, and it’s just not plateauing in any way," remarked Gil, echoing the report’s findings on sustained progress.

- Benchmark Performance vs. Human Baseline: A comparison of AI Index technical performance benchmarks against human performance reveals that, by 2025 at the latest, AI had surpassed the human baseline in skills such as image classification, English language understanding, multitask language understanding, visual reasoning, medium-level reading comprehension, and multimodal understanding and reasoning. Areas like autonomous software engineering, mathematical reasoning, and agent multimodal computer use are trending towards meeting the human baseline by 2026.

However, AI’s progress is not uniform across all domains. Because AI models learn by processing vast amounts of text and images rather than through direct physical interaction, they exhibit what is termed "jagged intelligence": they excel in some areas while struggling significantly in others. For instance, robotics is still in its nascent stages, with success rates in household tasks averaging only 12%. Self-driving cars represent a more advanced application, with Waymo vehicles operating in five US cities and Baidu’s Apollo Go offering ride-sharing services in China. AI is also making inroads into professional fields like law and finance, though no single model has yet achieved dominance in these sectors.

The Crisis of AI Benchmarking: Measuring a Moving Target

The remarkable progress reported in AI performance must be viewed with a degree of caution, as the very benchmarks used to track this advancement are struggling to keep pace. The Stanford report indicates that AI models are rapidly exceeding the performance ceilings of many existing benchmarks. Compounding this issue are the limitations of the benchmarks themselves. Some are poorly constructed, with a popular mathematics benchmark, for example, exhibiting a 42% error rate. Others are susceptible to manipulation; models trained on benchmark test data can achieve high scores without demonstrating genuine improvement in intelligence.
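One simple way to probe for the kind of contamination described above is to check how much of a benchmark item appears verbatim in training text. The sketch below measures word-level n-gram overlap; it is an illustrative heuristic under stated assumptions, not a method attributed to the report, and the function names, the choice of 5-word n-grams, and the sample strings are all hypothetical.

```python
# Heuristic contamination check: what fraction of a test item's word n-grams
# also appear in a body of training text? (Illustrative sketch only.)

def ngrams(text: str, n: int) -> set:
    """Set of lowercase word n-grams in the text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def overlap_fraction(test_item: str, training_text: str, n: int = 5) -> float:
    """Fraction of the test item's n-grams found in the training text."""
    item_grams = ngrams(test_item, n)
    if not item_grams:  # item shorter than n words
        return 0.0
    return len(item_grams & ngrams(training_text, n)) / len(item_grams)

# Hypothetical example data.
item = "what is the sum of two and three"
leaked = "forum post: what is the sum of two and three answer five"
clean = "a recipe for bread needs flour water salt and yeast"

print(overlap_fraction(item, leaked))  # high overlap suggests possible leakage
print(overlap_fraction(item, clean))   # no shared 5-grams
```

A high overlap does not prove a model memorized the item, and paraphrased leaks would evade this check, which is part of why contamination is hard to rule out.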

This disconnect between benchmark performance and real-world effectiveness is a critical concern. Strong scores on a benchmark do not always translate to reliable or practical application in real-world scenarios, especially given that AI is rarely deployed in the exact same manner as it is tested. For complex, interactive technologies like AI agents and robots, the development of adequate benchmarks remains a significant challenge.

Furthermore, a growing trend of reduced transparency from AI companies complicates independent assessment. The report notes that many companies are not disclosing their performance on certain benchmarks, particularly those related to responsible AI. This omission, according to Gil, "maybe says something" about the models’ capabilities or limitations.

AI’s Growing Footprint: Impact on the Job Market Emerges

Within a mere three years of becoming widely accessible, AI is now used by over half the global population, a faster rate of adoption than either the personal computer or the internet achieved. An estimated 88% of organizations currently employ AI technologies, and four out of five university students also use these tools.

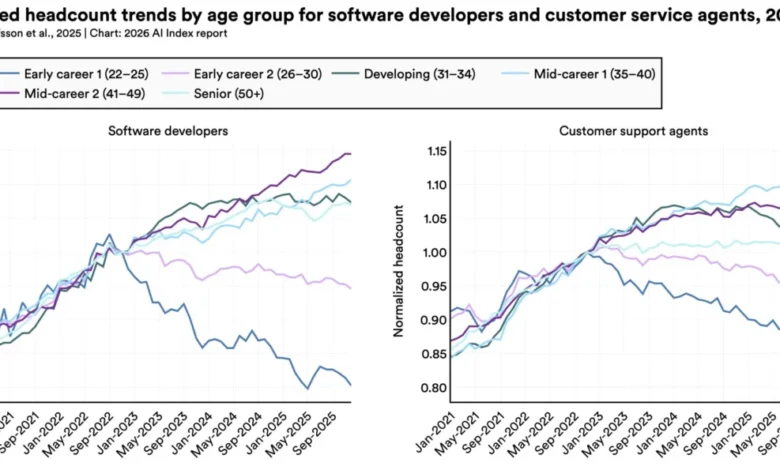

The long-term implications of AI for employment are still unfolding, but early studies suggest a discernible impact on younger workers in specific professions. A 2025 study by Stanford economists found that employment for software developers aged 22 to 25 had decreased by nearly 20% since 2022. Broader macroeconomic factors may also contribute to this trend, but AI appears to be a significant factor.

- Shifting Employment Trends: Data from 2021 through 2025 indicates a diverging trend in headcount for different age groups within the software development sector. While the early career cohort (ages 22-25) experienced a sharp decline after a peak in September 2022, other age groups continued to see rising employment, albeit at a less steep rate. A similar pattern, though less pronounced, is observed among customer support agents, where the early career group shows a decline.

Employer surveys from 2025 conducted by McKinsey & Company indicate that hiring may continue to contract. One-third of organizations anticipate that AI will lead to a reduction in their workforce in the coming year, with particular impacts expected in service and supply chain operations, as well as software engineering. While AI has been shown to boost productivity by 14% in customer service and 26% in software development, these gains are less apparent in tasks requiring complex judgment. The broader economic ramifications of AI remain a subject of ongoing research and analysis.

Public Sentiment: A Dichotomy of Optimism and Anxiety

Globally, public sentiment towards AI is characterized by a complex interplay of optimism and apprehension. An Ipsos survey cited in the index reveals that 59% of people believe AI will yield more benefits than drawbacks, while simultaneously, 52% express nervousness about its proliferation.

A notable divergence exists between the perspectives of AI experts and the general public regarding the future of the technology, as highlighted by a Pew survey. The most significant gap is observed in discussions about the future of work: 73% of experts foresee a positive impact of AI on how people perform their jobs, whereas only 23% of the American public shares this view. Experts also express greater optimism than the public concerning AI’s role in education and healthcare. However, both groups largely agree that AI poses risks to the integrity of elections and the quality of personal relationships.

- Perceptions of AI’s Societal Impact (US): A comparative analysis of US adults and AI experts reveals a stark difference in their outlook on AI’s future impact. AI experts are significantly more optimistic across various domains. For instance, 84% of experts predict a positive outcome for AI in medical care, compared to 44% of US adults. The most substantial disparity is observed in the realm of jobs, with experts at 73% optimism versus just 23% for the general public. Both groups, however, have similarly low expectations of a positive impact on elections, with experts at 11% and US adults at 9%.
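The gaps described above can be made explicit with a few lines of arithmetic. The sketch below uses only the three pairs of percentages quoted in this article; the variable names are hypothetical, and the survey covers more domains than are listed here.

```python
# Expert vs. public optimism gap, in percentage points, using only the
# figures quoted in the article: (experts %, US adults %).
positive_outlook = {
    "medical care": (84, 44),
    "jobs": (73, 23),
    "elections": (11, 9),
}

# Gap = experts minus public, per domain.
gaps = {
    domain: experts - public
    for domain, (experts, public) in positive_outlook.items()
}

largest = max(gaps, key=gaps.get)
smallest = min(gaps, key=gaps.get)

for domain, gap in gaps.items():
    print(f"{domain}: {gap} percentage points")
print(f"largest gap: {largest}; smallest gap: {smallest}")
```

Among these three domains, jobs shows the widest gap at 50 percentage points and elections the narrowest at 2, matching the pattern the survey summary describes.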

Further insights from an Ipsos survey indicate that Americans have the lowest trust among surveyed nations in their government’s ability to regulate AI effectively. A greater proportion of Americans are concerned that federal AI regulation will be insufficient rather than overly stringent.

Navigating the Regulatory Maze: Governments Grapple with AI Governance

Governments worldwide are facing significant challenges in effectively regulating the rapidly evolving field of artificial intelligence, though some incremental progress was made in the past year. The European Union’s AI Act, for example, saw its initial prohibitions take effect, banning AI applications in predictive policing and emotion recognition. Japan, South Korea, and Italy also enacted national AI legislation. In contrast, the US federal government adopted a more deregulatory stance, with President Trump issuing an executive order aimed at limiting states’ authority to regulate AI.

Despite the federal push to limit state authority, state legislatures across the US passed a record 150 AI-related bills in 2025. California introduced landmark legislation, including SB 53, which mandates safety disclosures and whistleblower protections for AI model developers. New York’s RAISE Act requires AI companies to publish safety protocols and report significant safety incidents.

- Legislative Activity in the US: Data tracking AI-related bills passed into law by all US states from 2016 to 2025 shows a dramatic acceleration in legislative activity, with a sharp increase in 2023 and a peak of 150 bills enacted in 2025. This surge reflects growing legislative attention to AI’s implications.

Despite this legislative momentum, the pace of regulation continues to lag behind technological advancement, largely due to an incomplete understanding of how AI systems function. "Governments are cautious to regulate AI because… we don’t understand many things very well," explained Gil. "We don’t have a good handle on those systems." This knowledge gap presents a fundamental obstacle to developing robust and effective regulatory frameworks.

The 2026 Stanford AI Index serves as a critical snapshot of a technology in perpetual motion. It underscores the immense potential of AI while simultaneously illuminating the urgent need for proactive governance, ethical development, and a deeper societal understanding of its far-reaching implications. The race is on, not just for AI supremacy, but for the wisdom to harness its power responsibly.