A new way to express yourself: Gemini can now create music

Google DeepMind’s cutting-edge generative music model, Lyria 3, is now accessible in a beta release within the Gemini application, marking a significant expansion of creative tools for users. This new capability allows individuals to generate custom music by simply describing their desired output or by providing an image for inspiration. The integration signifies Google’s ongoing commitment to democratizing creative expression through artificial intelligence, building upon previous advancements in image and video generation.

The core of this innovation lies in Lyria 3, the latest iteration of Google DeepMind’s generative music technology. This advanced model is engineered to transform textual prompts or visual cues into original musical compositions. Users can articulate their creative vision, for instance, by requesting "a comical R&B slow jam about a sock finding their match," and within moments, Gemini translates this idea into a high-quality, catchy musical track. The ability to draw inspiration from uploaded content further broadens the scope of creative possibilities, enabling users to generate music that aligns with specific moods, aesthetics, or even existing visual themes.

This rollout is not merely an incremental update; it represents a strategic leap in AI-powered content creation. The Gemini app, which has already established itself as a versatile tool for image and video manipulation, now enters the realm of auditory art. The initial beta phase is designed to gather user feedback and refine the model’s performance, with broader accessibility anticipated in subsequent updates. The goal is to empower a wider audience, from casual users seeking a unique way to express themselves to content creators looking for novel tools to enhance their projects.

The development of Lyria 3 builds upon the foundational research and advancements demonstrated in its predecessors. While the specific technical details of Lyria 3’s architectural improvements are not fully disclosed in the initial announcement, it is understood that the model benefits from significant enhancements in audio generation capabilities. These advancements are likely to include more sophisticated understanding of musical structure, genre nuances, harmonic progressions, and rhythmic patterns. The ability to produce "high-quality, catchy tracks" suggests a leap in sonic fidelity and musical coherence compared to earlier generative music models.

From Concept to Composition: How Gemini’s Music Generation Works

The user experience with Gemini’s music generation is designed for simplicity and immediate creative gratification. A user opens the Gemini app and navigates to the music generation feature. Here, they can input a descriptive text prompt outlining the desired genre, mood, instrumentation, lyrical theme, or any other musical characteristic. For example, a user might request "an upbeat electronic track with a driving bassline, perfect for a workout montage." Alternatively, they can upload an image, and Gemini will analyze its visual elements to generate a soundtrack that complements its aesthetic.

The output is a 30-second musical track, accompanied by custom cover art generated by Nano Banana, Google's image generation model. This packaging makes the generated music easier to share. Users can download the track or share a direct link, so it is easy to distribute among friends or within online communities. The emphasis on short tracks suggests a focus on immediate creative expression and unique sonic snippets rather than full-length compositions.

The objective, as stated by Google, is not to produce "musical masterpieces" but to offer a "fun, unique way to express yourself." This framing positions the tool as an accessible entry point into musical creativity, encouraging experimentation and personal artistic exploration without the steep learning curve often associated with traditional music production.

Lyria 3: Advancing the State of Generative Audio

Lyria 3 is said to improve on previous Lyria models in three key areas, although the specifics have not yet been fully detailed. Based on the product’s output and industry trends, these enhancements likely pertain to:

- Musical Coherence and Structure: Lyria 3 is expected to exhibit a more robust understanding of song structure, transitions, and thematic development, resulting in tracks that feel more complete and less repetitive.

- Genre Fidelity and Nuance: The model’s ability to capture the distinct characteristics of various musical genres, from the introspective melodies of lo-fi hip-hop to the energetic rhythms of dance music, is likely enhanced, offering more authentic and nuanced sonic outputs.

- Expressiveness and Emotional Range: Lyria 3 may possess a greater capacity to translate emotional cues from prompts into musical expression, allowing for the generation of tracks that evoke a wider spectrum of feelings, from joy and excitement to melancholy and introspection.

The integration of Lyria 3 into Gemini is a strategic move by Google to embed AI-powered creative tools directly into a widely accessible platform. This approach democratizes access to advanced generative capabilities, empowering a broader user base to engage with AI for creative purposes.

A Look at the Technical Underpinnings and Creative Potential

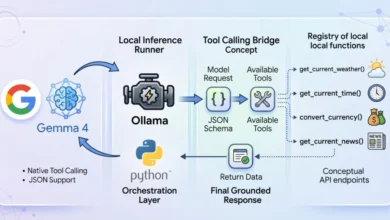

While the announcement focuses on the user-facing experience, it’s important to acknowledge the complex technological foundation upon which Lyria 3 is built. Generative music models typically involve sophisticated neural network architectures, trained on vast datasets of musical information. This training enables them to learn patterns, relationships, and stylistic elements that define different genres and musical compositions.

Conceptually, text-to-music generation can be described in several stages, although Google has not confirmed that Lyria 3 is organized this way:

- Text Understanding: The AI processes the user’s textual prompt, parsing keywords, sentiment, and stylistic requests to extract the core musical intent.

- Feature Extraction: Based on the understanding of the prompt, the model identifies key musical features such as tempo, key, instrumentation, mood, and genre.

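- Music Synthesis: Using these extracted features, the AI synthesizes audio that aligns with the desired musical characteristics. Generative audio systems have used techniques such as autoregressive models over audio tokens, diffusion models, Generative Adversarial Networks (GANs), and Variational Autoencoders (VAEs); which of these Lyria 3 relies on has not been disclosed.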

- Post-processing and Refinement: The synthesized audio may undergo further processing to enhance its quality, add effects, and ensure a coherent musical structure.
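The four stages above can be sketched as a toy pipeline. The Python below is purely illustrative and is not how Lyria 3 works: keyword tables stand in for learned text understanding, and plain sine tones stand in for neural audio synthesis. Every name in it (`TEMPO_HINTS`, `SCALE_HINTS`, `MusicFeatures`, `generate`, and so on) is hypothetical.

```python
import math
import re
from dataclasses import dataclass

SAMPLE_RATE = 8000  # Hz; a low rate keeps the toy example fast

# Hypothetical keyword tables; a real model learns such associations from data.
TEMPO_HINTS = {"slow": 70, "upbeat": 128, "driving": 140}
MAJOR = [0, 2, 4, 5, 7, 9, 11]
MINOR = [0, 2, 3, 5, 7, 8, 10]
SCALE_HINTS = {"happy": MAJOR, "sad": MINOR, "melancholy": MINOR}


@dataclass
class MusicFeatures:
    tempo_bpm: int
    scale: list  # semitone offsets from the root note


def understand_text(prompt: str) -> list:
    """Stage 1: reduce the prompt to a list of lowercase words."""
    return re.findall(r"[a-z&]+", prompt.lower())


def extract_features(words: list) -> MusicFeatures:
    """Stage 2: map words to musical features, falling back to defaults."""
    tempo = next((TEMPO_HINTS[w] for w in words if w in TEMPO_HINTS), 100)
    scale = next((SCALE_HINTS[w] for w in words if w in SCALE_HINTS), MAJOR)
    return MusicFeatures(tempo, scale)


def synthesize(features: MusicFeatures, beats: int = 8) -> list:
    """Stage 3: render one sine-wave note per beat, walking up the scale."""
    samples_per_beat = int(SAMPLE_RATE * 60 / features.tempo_bpm)
    audio = []
    for beat in range(beats):
        semitone = features.scale[beat % len(features.scale)]
        freq = 220.0 * 2 ** (semitone / 12)  # root note A3 = 220 Hz
        for n in range(samples_per_beat):
            audio.append(math.sin(2 * math.pi * freq * n / SAMPLE_RATE))
    return audio


def post_process(audio: list, peak: float = 0.8, fade: int = 200) -> list:
    """Stage 4: normalize to a target peak and apply a linear fade in/out."""
    loudest = max(abs(s) for s in audio) or 1.0
    out = [s * peak / loudest for s in audio]
    fade = min(fade, len(out) // 2)
    for i in range(fade):
        gain = i / fade
        out[i] *= gain
        out[-1 - i] *= gain
    return out


def generate(prompt: str):
    """Run all four stages and return the features plus the audio samples."""
    features = extract_features(understand_text(prompt))
    return features, post_process(synthesize(features))


features, audio = generate("an upbeat electronic track with a driving bassline")
```

Here the first matching tempo keyword wins, so "upbeat" sets the tempo before "driving" is considered. A production system would replace each stage with learned components, but the data flow from prompt to features to samples to cleaned-up audio is the same idea.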

The inclusion of Nano Banana for cover art generation adds another layer of AI-driven creativity, providing a visually appealing package for the generated music. This collaborative approach between different AI models showcases the potential for synergistic creative outputs.

Expanding Horizons: Lyria 3 on YouTube and Creator Tools

Beyond the Gemini app, Lyria 3’s capabilities are also being integrated into YouTube’s creator ecosystem through a feature called "Dream Track." This initiative aims to elevate the quality of soundtracks for YouTube Shorts, enabling creators to produce unique music for their short-form video content.

Dream Track, currently available in the U.S. and rolling out to creators in other countries, leverages Lyria 3 to enhance the sonic landscape of Shorts. Creators can utilize the model to generate lyrical verses or atmospheric backing tracks, providing them with greater control over the emotional and stylistic tone of their videos. This feature is particularly valuable for creators who may not have the resources or expertise to commission original music, thereby democratizing access to high-quality audio for their content.

The implications for content creators are substantial. By offering a seamless way to generate custom music, Google is empowering a new wave of creativity on platforms like YouTube. This can lead to more engaging and memorable video content, as creators are no longer limited by stock music libraries or the cost of hiring musicians. The ability to tailor soundtracks precisely to the visual narrative can significantly amplify the impact of short-form videos, a format that continues to dominate online engagement.

The integration into YouTube’s Shorts platform suggests a strategic focus on short-form, attention-grabbing content, where custom music can play a crucial role in setting the mood and enhancing viewer experience. This move also aligns with YouTube’s ongoing efforts to provide creators with a comprehensive suite of tools to produce and distribute compelling content.

Ensuring Authenticity and Responsibility: Audio Verification and Ethical AI

A critical aspect of introducing powerful AI content generation tools is the need for robust mechanisms to identify and manage AI-generated content. Google is addressing this through the integration of SynthID, an imperceptible watermarking technology, into all tracks generated by the Gemini app. SynthID embeds a digital signature within the audio that can be detected by specialized tools, thereby verifying its AI origin.
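To make the idea of an embedded, detectable signature concrete, here is a minimal sketch of a classic spread-spectrum watermark in Python. It is not SynthID's method, which Google has not described at this level of detail; it is a textbook technique swapped in for illustration. The functions `keyed_sequence`, `embed`, and `detect`, the key values, and the `strength` parameter are all hypothetical, and the mark here is far stronger than a truly imperceptible one would be.

```python
import math
import random

SAMPLE_RATE = 8000  # Hz


def keyed_sequence(key: int, length: int) -> list:
    """Pseudo-random +1/-1 sequence that only a holder of the key can rebuild."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def embed(audio: list, key: int, strength: float = 0.02) -> list:
    """Add a low-amplitude copy of the keyed sequence to the audio."""
    seq = keyed_sequence(key, len(audio))
    return [s + strength * c for s, c in zip(audio, seq)]


def detect(audio: list, key: int, strength: float = 0.02) -> bool:
    """Correlate the audio with the keyed sequence and compare to a threshold.

    Unmarked audio correlates near 0; marked audio correlates near `strength`.
    """
    seq = keyed_sequence(key, len(audio))
    score = sum(s * c for s, c in zip(audio, seq)) / len(audio)
    return score > strength / 2


# Toy host signal: five seconds of a 440 Hz tone.
host = [0.5 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(40000)]
marked = embed(host, key=1234)

print(detect(marked, key=1234))  # marked audio, correct key
print(detect(host, key=1234))    # unmarked audio
print(detect(marked, key=9999))  # marked audio, wrong key
```

The design choice worth noting is that detection needs only the key, not the original audio: the host signal averages out in the correlation while the embedded sequence does not. Real audio watermarks must also survive compression, resampling, and editing, which this sketch does not attempt.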

Furthermore, Gemini’s verification capabilities are being expanded to include audio, alongside image and video. Users can now upload a file and ask Gemini to determine if it was generated using Google AI. This feature leverages both SynthID detection and Gemini’s own reasoning capabilities to provide a response. This commitment to transparency and verifiable AI content is crucial for building trust and combating misinformation.

The responsible development of generative AI is a paramount concern for Google. Since the initial launch of Lyria in 2023, the company has emphasized collaboration with the music community. This consultative approach, along with insights gained from experimental platforms like the Music AI Sandbox, has informed the development of Lyria 3, with a strong focus on copyright and partner agreements during the training process.

Google’s policy on music generation explicitly states that the technology is designed for "original expression, not for mimicking existing artists." If a prompt references a specific artist, Gemini is intended to interpret this as a directive for broad creative inspiration, aiming to produce a track that shares a similar style or mood rather than a direct imitation. Filters are in place to cross-reference outputs against existing musical content, aiming to prevent unauthorized replication.

However, acknowledging the inherent challenges in AI development, Google has also implemented mechanisms for user feedback and recourse. Users can report content that may infringe upon their rights or the rights of others. Adherence to Google’s Terms of Service and specific Generative AI prohibited use policies, which strictly forbid the violation of intellectual property and privacy rights, is mandatory for all users. This layered approach to responsible AI development underscores Google’s commitment to ethical deployment of its generative technologies.

Accessibility and Future Outlook

Lyria 3 is currently available in the Gemini app for users aged 18 and above. The service supports English, German, Spanish, French, Hindi, Japanese, Korean, and Portuguese, with plans to expand language support and improve quality in more languages over time. The desktop version of the Gemini app is rolling out the music generation feature today, with mobile app integration to follow over the next several days.

Subscribers to Google AI Plus, Pro, and Ultra services will benefit from higher usage limits, indicating a tiered access model that rewards premium users. This strategy is common in the AI service landscape, providing enhanced features and capacity to paying customers.

The overarching goal, as articulated by Google, is to enrich daily life by providing users with a "fun, custom soundtrack." The accessibility of this tool through the Gemini app, and its integration into platforms like YouTube, signals a broader strategy to weave AI-powered creativity into the fabric of everyday digital interactions. Users are encouraged to explore this new frontier of musical expression at gemini.google.com/music.

The continued evolution of Lyria and its integration into various Google products suggest that AI-generated music is poised to become an increasingly prevalent and integral part of the digital creative landscape, offering novel avenues for personal expression, content creation, and artistic exploration. The coming months will likely see further refinements, expanded features, and broader adoption as users discover and experiment with this innovative new capability.