AI’s Transformative Potential for Public Sector Operations: Navigating Constraints with Small Language Models

The global surge in artificial intelligence (AI) adoption is compelling organizations across all sectors to integrate these advanced technologies. However, public sector entities face a unique set of challenges, including stringent security protocols, robust governance frameworks, and operational complexities that differ significantly from their private sector counterparts. These distinctive constraints are driving the exploration of purpose-built Small Language Models (SLMs) as a viable and promising pathway to operationalize AI within government environments.

Recent analyses highlight the public sector’s inherent caution regarding AI. A comprehensive study conducted by Capgemini revealed that a substantial 79 percent of public sector executives worldwide express reservations about the data security implications of AI. This apprehension is deeply rooted in the highly sensitive nature of government data and the stringent legal obligations that govern its handling and utilization. Han Xiao, Vice President of AI at Elastic, elaborates on this critical point: "Government agencies must be very restricted about what kind of data they send to the network. This sets a lot of boundaries on how they think about and manage their data." This fundamental need for granular control over sensitive information is a pivotal factor that complicates AI deployment, especially when contrasted with the more permissive operational assumptions prevalent in the private sector.

Unique Operational Challenges in the Public Sector

The typical expansion of AI capabilities in the private sector often relies on a set of assumed conditions: continuous cloud connectivity, dependence on centralized infrastructure, a degree of acceptance for incomplete model transparency, and relatively few restrictions on data movement. For many state institutions, however, embracing these conditions can range from being inadvisable to outright impossible.

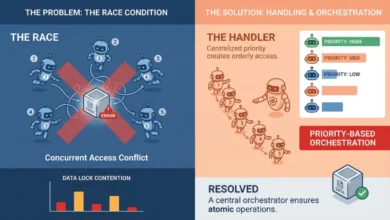

Government agencies are mandated to ensure that their data remains firmly under their control, that information can be rigorously checked and verified, and that operational disruptions are minimized to the greatest extent possible. Simultaneously, these entities frequently operate within environments characterized by limited, unreliable, or even absent internet connectivity. These compounding complexities have historically hindered many promising public sector AI pilot projects from advancing beyond the experimental phase.

"Many people undervalue the operating challenge of AI," Xiao notes. "The public sector needs AI to perform reliably on all kinds of data, and then to be able to grow without breaking. Continuity of operations is often underestimated." This sentiment is echoed by findings from an Elastic survey of public sector leaders, which indicated that a significant 65 percent struggle with the continuous, real-time, and scaled use of data.

Infrastructure limitations further exacerbate these challenges. Government organizations often face difficulties in acquiring the Graphics Processing Units (GPUs) that are essential for training and running complex AI models. Xiao explains this bottleneck: "Government doesn’t often purchase GPUs, unlike the private sector; they’re not used to managing GPU infrastructure. So accessing a GPU to run the model is a bottleneck for much of the public sector." This scarcity of specialized hardware creates a significant barrier to entry for many public sector AI initiatives.

The Rise of Smaller, More Practical AI Models

The non-negotiable requirements of the public sector often make traditional large language models (LLMs) untenable. In contrast, SLMs offer a compelling alternative. These specialized AI models can be housed locally, thereby affording enhanced security and greater control over data. Typically, SLMs have billions of parameters rather than hundreds of billions, making them considerably less computationally demanding than their larger LLM counterparts.
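To make the difference in scale concrete, the following back-of-the-envelope sketch estimates the memory needed just to hold a model's weights. The two parameter counts (7 billion and 175 billion) and the choice of 2 bytes per parameter (16-bit weights) are illustrative assumptions, not figures from the analyses cited here, and real deployments also need memory for activations and caches.

```python
def weight_memory_gb(num_parameters: float, bytes_per_parameter: int = 2) -> float:
    """Approximate memory to hold model weights, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Hypothetical model sizes chosen only to illustrate
# "billions" versus "hundreds of billions" of parameters.
small = weight_memory_gb(7e9)     # assumed small model: 7 billion parameters
large = weight_memory_gb(175e9)   # assumed large model: 175 billion parameters

print(f"small: {small:.0f} GB, large: {large:.0f} GB, ratio: {large / small:.0f}x")
```

Under these assumptions the smaller model's weights would fit on a single well-equipped workstation or server, while the larger model's weights alone would have to be spread across several accelerators.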

This local deployment capability means the public sector does not necessarily need to invest in building and maintaining massive models housed in remote, centralized locations. Empirical research has demonstrated that SLMs can perform as well as, or even better than, LLMs in specific tasks. This suggests that SLMs can enable the effective and efficient use of sensitive information without introducing the operational complexities associated with managing large-scale, distributed models. Xiao aptly illustrates this point: "It is easy to use ChatGPT to do proofreading. It’s very difficult to run your own large language models just as smoothly in an environment with no network access."

The purpose-built nature of SLMs is a key advantage. These models are designed and trained to meet the specific needs of the department or agency that will employ them. Crucially, data is stored securely outside the model and is accessed only when a query is made. Through carefully engineered prompts, only the most relevant information is retrieved, leading to more accurate and contextually appropriate responses. The integration of techniques such as smart retrieval, vector search, and verifiable source grounding allows for the construction of AI systems that are precisely tailored to public sector requirements.
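The retrieval pattern described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's implementation: the document names and contents are hypothetical, the "embedding" is a toy word-count vector standing in for a learned embedding model, and `retrieve` and `build_prompt` are illustrative helper names. The point it shows is that documents live outside the model, are searched only when a query arrives, and are passed to the model together with their source labels.

```python
import math
from collections import Counter

# Hypothetical agency documents; in practice these would sit in a local store.
DOCUMENTS = {
    "procurement-2023.txt": "procurement rules for purchasing office equipment",
    "minutes-03.txt": "meeting minutes on the road maintenance budget",
    "report-water.txt": "technical report on water quality testing",
}


def embed(text):
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, k=1):
    """Return up to k (source, text) pairs, most similar first, skipping non-matches."""
    q = embed(query)
    scored = [(cosine(q, embed(text)), source, text) for source, text in DOCUMENTS.items()]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(reverse=True)
    return [(source, text) for _, source, text in scored[:k]]


def build_prompt(query, passages):
    """Attach retrieved passages, labeled by source, so answers can be checked."""
    context = "\n".join(f"[{source}] {text}" for source, text in passages)
    return f"Answer using only the sources below and cite them.\n{context}\nQuestion: {query}"


hits = retrieve("water quality report")
print(build_prompt("water quality report", hits))
```

Because each passage carries its source label into the prompt, a reviewer can trace an answer back to the document it came from, and a query that matches nothing returns no passages rather than an invented one.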

This paradigm shift suggests that the next phase of AI adoption in the public sector will likely involve bringing AI tools to the data, rather than transmitting sensitive data to the cloud. Industry analysts at Gartner predict that by 2027, small, task-specific AI models will be utilized three times more frequently than general-purpose LLMs, underscoring the growing trend toward specialized AI solutions.

Enhancing Public Sector Search Capabilities with AI

"When people in the public sector hear AI, they probably think about ChatGPT. But we can be much more ambitious," Xiao remarks. "AI can revolutionize how the government searches and manages the large amounts of data they have." Beyond the well-known chatbot applications, one of AI’s most immediate and impactful opportunities for the public sector lies in dramatically improving search functionalities.

Like many large organizations, government entities possess vast repositories of unstructured data, including technical reports, procurement documents, meeting minutes, and financial records. Modern AI, powered by SLMs, can now deliver results sourced from a diverse range of media, encompassing readable PDFs, scanned documents, images, spreadsheets, and audio recordings, often across multiple languages. These SLM-powered systems can index this disparate information to provide tailored responses and even draft complex texts, all while ensuring that the outputs adhere to legal and regulatory compliance standards. "The public sector has a lot of data, and they don’t always know how to use this data. They don’t know what the possibilities are," Xiao observes.

Even more powerfully, AI can assist government employees in interpreting the data they access. Xiao explains, "Today’s AI can provide you with a completely new view of how to harness that data." A well-trained SLM possesses the capability to interpret complex legal norms, extract valuable insights from public consultations, support data-driven decision-making by executives, and significantly enhance public access to essential services and administrative information. These advancements hold the potential to fundamentally transform public sector operations, leading to greater efficiency, improved service delivery, and enhanced transparency.

The Promise of Small Language Models

Focusing on SLMs shifts the conversation from the sheer comprehensiveness of a model to its operational efficiency and suitability for specific tasks. LLMs, with their immense scale, incur significant performance and computational costs, often necessitating specialized hardware that many public entities find economically prohibitive. While SLMs do require some capital expenditure, they are considerably less resource-intensive than LLMs, resulting in lower costs and a reduced environmental footprint.

Furthermore, public sector agencies are frequently subject to stringent audit requirements. SLM algorithms, by their nature, can be meticulously documented and certified for transparency, meeting these critical compliance needs. In addition, jurisdictions with robust data privacy regulations, such as the European Union’s General Data Protection Regulation (GDPR), can benefit from SLMs specifically engineered to adhere to these strict privacy standards.

The use of tailored training data is instrumental in producing more targeted results, thereby mitigating the prevalence of errors, bias, and the phenomenon of "hallucinations" that can plague AI systems. Xiao articulates this advantage: "Large language models generate text based on what they were trained on, so there is a cut-off date when they were trained. If you ask about anything after that, it will hallucinate. We can solve this by forcing the model to work from verified sources." This grounding in verified information ensures greater accuracy and reliability.

The inherent risks associated with AI are further minimized by keeping data on local servers or even on specific devices. This approach is not about isolation but about fostering strategic autonomy, which in turn enables trust, resilience, and relevance in the deployment of AI technologies within government.

By prioritizing task-specific models designed for environments that process data locally, and by implementing continuous monitoring of performance and impact, public sector organizations can cultivate enduring AI capabilities that directly support real-world decision-making. Xiao offers a crucial piece of advice for organizations embarking on this journey: "Do not start with a chatbot; start with search. Much of what we think of as AI intelligence is really about finding the right information." This strategic focus on enhanced information retrieval lays a solid foundation for broader AI integration and maximizes immediate value.

This transformation is already underway. As early as 2025, government technology conferences and industry reports began highlighting the growing interest and investment in specialized AI solutions for the public sector. This indicates a maturing understanding within government agencies that off-the-shelf, large-scale AI solutions may not be the most effective or secure approach. The shift towards SLMs represents a pragmatic and strategic response to these evolving needs, promising to unlock significant improvements in operational efficiency, data management, and public service delivery. The implications are far-reaching, potentially leading to more responsive governance, better-informed policy decisions, and a more accessible and efficient public sector for citizens.

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff. It was researched, designed, and written by human writers, editors, analysts, and illustrators. This includes the writing of surveys and collection of data for surveys. AI tools that may have been used were limited to secondary production processes that passed thorough human review.