Timnit Gebru’s firing from Google, and the public statement surrounding it, ignited a firestorm of debate in the AI community. Gebru, a leading AI researcher, and her team voiced concerns about the direction of Google’s AI research, leading to a public statement and subsequent firings. This event has profound implications for the future of AI development, raising questions about ethics, transparency, and the role of researchers in shaping the technology.

This incident underscores the critical need for open dialogue and ethical considerations in the rapidly evolving field of artificial intelligence. The public statement detailed concerns about potential biases in Google’s AI systems, prompting a wider discussion about accountability and responsibility within the tech industry.

Background Information

Timnit Gebru’s departure from Google sparked considerable discussion within the AI community. Her career trajectory was marked by significant contributions to the field, culminating in a public statement and subsequent firing. This event highlights complex issues surrounding ethical considerations in AI development and their potential impact on the future of the technology. The timeline leading up to the public statement and subsequent departure reveals a sequence of events that raised concerns about the balance between research and company priorities.

This situation underscores the delicate interplay between academic freedom, corporate interests, and the development of responsible AI.

Timnit Gebru’s Career and Contributions

Timnit Gebru’s career in AI research demonstrates a commitment to pushing the boundaries of the field. She began her academic journey with a PhD in computer science, focusing on machine learning and its societal implications. This background shaped her later research and advocacy. Key roles include prominent positions at Google AI, where she contributed significantly to the development and assessment of large language models.

Her work touched on various aspects of AI, including bias detection and mitigation. Her expertise extended to the broader societal impact of AI technologies.

Timeline of Events

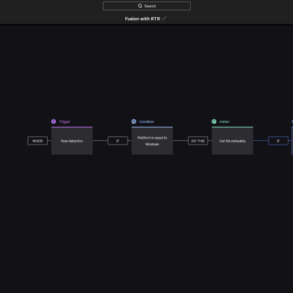

The sequence of events leading to the public statement and departure included a series of internal discussions and communications. These dialogues involved concerns about the direction and potential ethical implications of specific AI research projects. The culminating event was a public statement that outlined these concerns. The statement addressed the importance of ethical considerations in the development and deployment of AI.

This resulted in a critical examination of the relationship between researchers and the corporations they work for, and how to ensure responsible development.

Google’s AI Research and Development Efforts

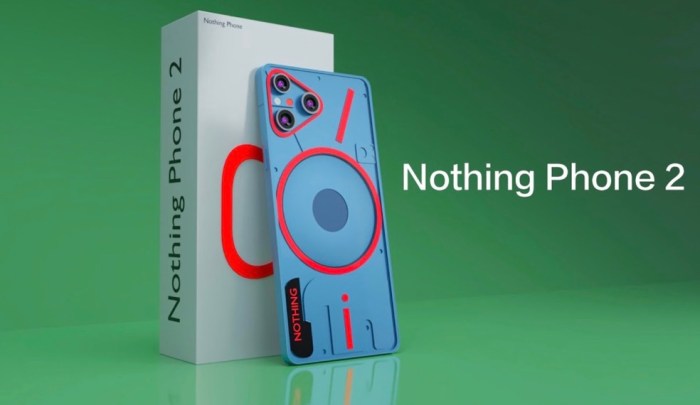

Google’s AI research encompasses a broad range of projects. These efforts span natural language processing, computer vision, and robotics. The company’s ambition is to advance AI capabilities and integrate them into various applications. A key aspect of Google’s approach involves the development of large language models, such as LaMDA, which have demonstrated remarkable capabilities in natural language understanding and generation.

Research Areas of Timnit Gebru

Timnit Gebru’s research at Google encompassed several key areas within AI. Her involvement included research on fairness, bias detection, and safety in large language models. This focused on developing methods to identify and mitigate potential biases in AI systems. Her work on these areas directly influenced the broader conversation about responsible AI development.

Potential Impact on the Broader AI Community

The event surrounding Timnit Gebru’s departure and public statement has implications for the broader AI community. It highlights the need for open discussions about ethical considerations in AI development. The event serves as a reminder that researchers and developers need to engage with the potential societal consequences of their work. It emphasizes the importance of transparency and accountability in the field of AI.

The experience underscores the importance of fostering an environment where diverse voices and concerns can be heard. This is crucial for responsible and beneficial advancements in the field of artificial intelligence.

The Public Statement

Timnit Gebru’s departure from Google, coupled with her subsequent public statement, sparked considerable discussion and debate within the tech community. This statement, a powerful articulation of concerns regarding the ethical implications of AI development, highlighted a crucial moment in the evolving relationship between technology and society. The statement detailed her experiences and the reasons behind her decision to leave Google, shedding light on the complex issues surrounding the development and deployment of artificial intelligence.

Key Points in the Statement

The statement articulated several key points, which are presented in a structured format below. These points represent the core of Gebru’s concerns and her motivations for public discourse.

| Point | Details |
|---|---|
| Bias in AI Systems | Gebru emphasized the pervasive presence of bias in current AI systems, highlighting how these biases can perpetuate and even amplify societal inequalities. She argued that existing algorithms often reflect and reinforce existing societal prejudices, potentially leading to discriminatory outcomes. |
| Lack of Ethical Oversight | The statement expressed concern over the perceived lack of adequate ethical oversight in the development and deployment of AI. Gebru argued that current practices often prioritize technological advancement over societal well-being, potentially leading to unintended consequences. |
| Overemphasis on Efficiency Over Safety | Gebru highlighted the potential for prioritizing efficiency and speed in AI development at the expense of safety and ethical considerations. She argued that a focus on rapid progress without adequate safety protocols could have detrimental consequences. |
| Concerns about Misuse of AI | The statement touched upon the potential for AI to be misused, particularly in areas like surveillance and warfare. Gebru’s concerns about the weaponization of AI and the potential for its misuse in oppressive systems are evident in her remarks. |

Concerns Expressed by Timnit Gebru

Gebru’s concerns extended beyond technical issues to broader societal implications. She expressed anxieties about the potential for AI to exacerbate existing inequalities, perpetuate harmful biases, and contribute to the erosion of human values.

- Potential for Discrimination: Gebru articulated a deep concern about the potential for AI systems to perpetuate and amplify existing societal biases, leading to discriminatory outcomes in areas such as loan applications, hiring processes, and criminal justice. This concern is particularly acute when AI systems are trained on biased data sets.

- Lack of Transparency and Accountability: Gebru highlighted the challenges of ensuring transparency and accountability in AI systems. She argued that the complexity of some algorithms makes it difficult to understand how they arrive at their conclusions, raising concerns about potential biases and unfair outcomes.

- Potential for Misinformation and Manipulation: Gebru’s statement touched upon the potential for AI to be used to create and disseminate misinformation, potentially influencing public opinion and undermining democratic processes. This concern is particularly relevant in the context of the increasing use of AI in content creation and dissemination.

Potential Motivations Behind the Statement

Gebru’s statement likely stems from a combination of factors. Her deep expertise in the field of AI, combined with her experience within the Google research environment, likely provided her with a unique perspective on the challenges and potential risks of AI development. Furthermore, her commitment to ethical considerations and societal well-being likely played a crucial role in motivating her to publicly address these concerns.

“The potential for harm from powerful AI systems requires serious consideration, and we need to prioritize safety and ethical development over speed and efficiency.”

Google’s Response

Google’s official response to Timnit Gebru’s public statement regarding her firing was somewhat predictable, though its tone and specific phrasing deserve scrutiny. The company attempted to frame the situation as a disagreement on research priorities, while acknowledging Gebru’s contributions. However, the response lacked the depth and transparency that a critical public discourse around ethical AI development requires. Google’s response, released in a public statement, essentially sought to reframe the narrative around Gebru’s departure.

It emphasized the company’s commitment to AI safety and ethical considerations, but fell short of directly addressing the core concerns raised by Gebru.

Summary of Google’s Official Response

Google’s statement emphasized their commitment to responsible AI development and research. They acknowledged Gebru’s contributions to the company but stated that her departure was a result of a difference of opinion regarding research priorities. The company highlighted its ongoing efforts to address potential risks and biases in AI systems.

Tone and Approach of Google’s Response

The tone of Google’s response was largely conciliatory and apologetic, seeking to maintain a positive image while minimizing the impact of Gebru’s departure. The approach was defensive, deflecting criticism and focusing on the company’s purported dedication to ethical AI. This tone, while attempting to mitigate the negative publicity, may have inadvertently reinforced the perception of a lack of transparency and accountability.

Contradictions and Ambiguities in Google’s Response

While Google’s response acknowledged Gebru’s contributions, it lacked specifics about the differing research priorities that allegedly led to her departure. The vagueness in this aspect of the statement leaves room for speculation and interpretation. Further, the statement’s emphasis on Google’s commitment to AI safety contrasted with the perception of Gebru’s concerns being disregarded. This creates an ambiguity that invites further scrutiny.

Comparison of Statement and Response

| Aspect | Timnit Gebru’s Statement | Google’s Response |
|---|---|---|
| Core Issue | Concerns about the potential risks and societal impacts of uncontrolled AI development, particularly in areas like bias and job displacement. | A difference of opinion on research priorities and a commitment to responsible AI development. |
| Tone | Direct, assertive, and critical of Google’s approach. | Conciliatory, apologetic, and defensive. |
| Specifics | Detailed explanations of her concerns and examples of potential problems. | Vague and general statements about research priorities. |
| Transparency | Transparent and forthright about her concerns and experiences. | Less transparent, focusing on maintaining a positive image. |

Ethical Implications

The firing of Timnit Gebru from Google, a leading AI researcher, has ignited a crucial discussion about the ethical responsibilities of tech companies in the development and deployment of artificial intelligence. This incident highlights the potential for bias in AI systems and the importance of prioritizing ethical considerations throughout the entire AI lifecycle. The controversy underscores the need for a more nuanced understanding of the ethical implications of AI, going beyond the technical aspects to include social and societal impacts. The case of Gebru’s dismissal underscores the tension between innovation and ethical considerations.

It’s a reminder that progress in AI should not come at the cost of fairness, transparency, and accountability. Furthermore, it emphasizes the importance of fostering a diverse and inclusive research environment where dissenting voices can be heard without fear of retribution.

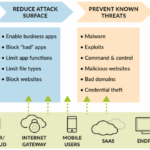

Potential Biases in AI Systems

AI systems are trained on vast datasets that can reflect existing societal biases. These biases can be amplified and perpetuated by AI, leading to discriminatory outcomes in areas like loan applications, criminal justice, and hiring. For instance, facial recognition systems trained on predominantly white datasets may have lower accuracy rates for people of color. Similarly, language models trained on biased text corpora can exhibit biases in their output, generating prejudiced or stereotypical statements.
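One simple way to surface the kind of accuracy disparity described above is to score a model separately for each demographic group instead of reporting a single overall number. The following is a minimal sketch under stated assumptions: the group names, the record layout, and the hand-made records are hypothetical and exist only for illustration; they are not real benchmark data and do not describe any particular system.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for (group, true_label, predicted_label) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, prediction in records:
        total[group] += 1
        if truth == prediction:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Hypothetical, hand-made evaluation records (assumption, not real data).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

per_group = accuracy_by_group(records)
# The gap between the best- and worst-served group is one rough disparity signal.
gap = max(per_group.values()) - min(per_group.values())

print(per_group)
print(f"accuracy gap: {gap:.2f}")
```

A large gap does not by itself explain why the disparity exists, but it flags where the training data or the model deserves a closer look.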

Responsibility of Tech Companies

Tech companies have a crucial role in mitigating the risks of bias in AI. They should prioritize the ethical development of AI systems by incorporating diverse perspectives throughout the design process. This includes actively seeking input from researchers, ethicists, and community members to identify and address potential biases. Furthermore, companies should be transparent about the data used to train their models, the algorithms employed, and the potential limitations of their systems.

They should also establish clear guidelines and protocols for addressing ethical concerns and ensure that these are consistently enforced.

AI Safety and Fairness

The Gebru case resonates with broader societal discussions on AI safety and fairness. Concerns are growing about the potential for AI systems to exacerbate existing inequalities and create new forms of discrimination. The debate necessitates a multi-faceted approach that includes rigorous research into AI safety and fairness, the development of ethical guidelines and regulations, and ongoing public discourse to address societal concerns.

Examples of AI-related harm, such as algorithmic bias in loan applications leading to financial exclusion, highlight the urgent need for ethical considerations in AI.
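To make the loan-application example concrete, a common first check is to compare approval rates across groups. The sketch below computes each group's approval rate and the ratio of the lowest rate to the highest. The function names, group labels, and decisions are all hypothetical and chosen for illustration. A ratio below roughly 0.8 is sometimes treated as a warning sign (a heuristic borrowed from employment-selection guidance), but that threshold is an assumption here, not a rule the text establishes.

```python
def approval_rates(decisions):
    """Return {group: fraction approved} from (group, approved) pairs."""
    counts = {}
    for group, approved in decisions:
        seen, granted = counts.get(group, (0, 0))
        counts[group] = (seen + 1, granted + (1 if approved else 0))
    return {group: granted / seen for group, (seen, granted) in counts.items()}


def disparate_impact_ratio(rates):
    """Lowest approval rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical loan decisions (assumption, not real data).
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates)

print(rates)
print(f"disparate impact ratio: {ratio:.2f}")
```

Equal approval rates are only one of several competing fairness definitions, so a check like this is a starting point for review, not a verdict.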


Ethical Dilemmas in AI Research and Development

| Dilemma | Description | Example |
|---|---|---|
| Bias in Training Data | AI models trained on biased datasets can perpetuate and amplify existing societal biases. | A facial recognition system trained primarily on images of white faces may have lower accuracy rates for people of color. |
| Lack of Transparency | Opacity in AI algorithms makes it difficult to understand how decisions are made, potentially leading to unfair or discriminatory outcomes. | An AI system used in hiring decisions may not reveal the factors influencing its recommendations, making it difficult to identify bias. |
| Accountability and Responsibility | Determining who is responsible for the actions of an AI system when errors or harm occur can be challenging. | If an autonomous vehicle causes an accident, who is held accountable: the manufacturer, the programmer, or the system itself? |
| Job Displacement | Automation through AI can lead to job displacement in various sectors. | AI-powered robots in manufacturing could lead to the loss of jobs for human workers. |

This table illustrates some potential ethical dilemmas that arise in AI research and development. These issues require careful consideration and proactive strategies to mitigate potential risks and ensure responsible AI development.

Impact on the AI Field

The firing of Timnit Gebru at Google, a prominent figure in AI ethics, has reverberated through the field, prompting critical discussions about the future of AI research and development. Her dismissal raised concerns about the potential for suppression of dissenting voices and the broader implications for ethical considerations in the rapidly evolving field. This event serves as a stark reminder of the need for transparency, accountability, and open dialogue within the AI community. The incident has ignited a debate about the balance between innovation and the ethical considerations that underpin it.

Researchers and practitioners are grappling with the implications for their work, questioning the environment in which they operate and the impact of such decisions on the long-term trajectory of AI development.

Potential Effects on Future AI Research and Development

The dismissal of Dr. Gebru has created a climate of apprehension within the AI research community. Researchers might be hesitant to challenge established norms or express concerns about potential ethical pitfalls, leading to a self-censorship that could stifle the free exchange of ideas. This could ultimately hinder the development of truly ethical and beneficial AI systems. The chilling effect of such actions can impact the depth and breadth of research conducted.

Potential Changes in Research Methodologies

Researchers might adopt more cautious approaches in their methodologies, focusing on quantifiable results and avoiding potentially controversial or nuanced ethical discussions. There’s a risk that research might become more focused on immediate, measurable outputs rather than addressing long-term societal implications. The emphasis might shift towards incremental improvements over radical innovation, particularly in areas where ethical considerations are complex and challenging to define.

Potential Changes in the Hiring and Retention of Researchers

The incident could lead to a shift in the types of researchers universities and tech companies recruit. There may be a preference for researchers who are perceived as less critical or disruptive, potentially compromising the diversity of viewpoints and perspectives within the field. This could lead to a lack of diverse perspectives, hindering the ability of the AI community to address the complex ethical challenges facing the field.

Retention of talent, especially those who may feel vulnerable to criticism or dismissal, may also be affected.

Potential Impact on Public Trust in AI

The Gebru incident has raised questions about the trustworthiness of AI development and the companies involved. Public trust in AI systems and the institutions developing them could be eroded if similar events continue to occur. This could lead to slower adoption of AI technologies, and perhaps more stringent regulations and oversight. The public perception of AI, already fraught with concerns, could be significantly impacted, potentially leading to a more cautious and less enthusiastic approach to AI integration in various sectors.

Comparison of Responses from Other Tech Companies or Research Institutions to Similar Issues

| Company/Institution | Response to Similar Issues |
|---|---|
| Google | Dismissed Dr. Gebru, sparking controversy and concern. |
| Other Tech Companies | Varied responses. Some may be actively addressing similar issues through internal review processes and policy changes. Others may remain largely silent or dismissive. Examples include (but are not limited to) Microsoft, OpenAI, and Meta. |
| Research Institutions | Some universities and research centers have established ethics boards and guidelines. Others might be more reactive to issues than proactive in addressing them. |

The table highlights the diverse responses to similar ethical concerns within the tech industry. The lack of a standardized response and the varying degrees of proactive engagement illustrate the need for broader industry-wide guidelines and accountability mechanisms.

Alternative Perspectives on the Timnit Gebru Firing

The recent dismissal of Timnit Gebru from Google ignited a firestorm of debate, prompting various interpretations and analyses from diverse stakeholders. Beyond the official statements and public outcry, alternative perspectives offer valuable insights into the complexities surrounding the incident. These viewpoints illuminate potential misunderstandings and provide context for the broader implications of this event.

Potential Misunderstandings and Miscommunications

The public discourse surrounding the incident has been rife with potential misunderstandings. Different parties may have interpreted the same information differently, leading to divergent conclusions. For example, the phrasing of certain statements or the omission of crucial context could easily contribute to misinterpretations, ultimately escalating the conflict.

- Communication breakdowns between Gebru and her superiors could have contributed to the situation. A lack of clarity in expectations or differing interpretations of her role within Google’s research division might have led to escalating tensions. Similar conflicts have been documented in other professional settings, highlighting the challenges of communication in complex organizational structures.

- Public statements, while intended to be transparent, could have inadvertently been misinterpreted by the public or selectively highlighted by certain parties to serve their specific agendas. This can occur when the context behind the statements isn’t fully understood or when emotions run high. The need for careful consideration of the impact of public statements in such sensitive situations is crucial.

Alternative Interpretations of Events

The incident can be viewed through several lenses, each offering a unique interpretation of the events. Different stakeholders may have different perspectives on the motivations behind the actions taken. For instance, some might see the firing as a necessary measure to maintain the company’s reputation, while others may see it as an unfortunate consequence of broader systemic issues within the tech industry.

- A pro-Google interpretation could argue that the dismissal was a necessary step to ensure the company maintained its ethical standards and upheld its commitment to responsible AI development. This interpretation might focus on the need for maintaining internal policies and procedures. Such an argument would likely cite adherence to established protocols or prior infractions as evidence.

- A pro-Gebru interpretation could argue that the dismissal was a retaliatory measure stemming from Gebru’s outspoken criticism of the company’s AI practices. This view might point to past incidents where her opinions clashed with Google’s official stances, leading to this outcome. This interpretation would likely focus on the company’s response to dissent or perceived threats to the company’s public image.

The controversy surrounding the dismissal underscores the need for open dialogue and responsible innovation in this fast-moving field.

Expert Insights

Several experts in the field of AI ethics and organizational dynamics have commented on the Gebru case. Their diverse perspectives provide a more nuanced understanding of the situation. These experts often highlight the complexities of navigating ethical dilemmas in AI development and the importance of fostering open communication within organizations.

- Some AI ethicists have argued that the case highlights the need for more transparent and accountable practices in AI research. They emphasize the importance of creating mechanisms for employees to voice concerns without fear of reprisal. These experts would likely cite cases of past similar incidents to demonstrate the broader issue.

- Organizational psychologists, on the other hand, might highlight the potential for psychological factors to play a role in such conflicts. Their insights could explore the dynamics of power imbalances, communication styles, and the potential for misinterpretations within organizations. These perspectives may analyze specific aspects of the situation to determine potential influences.

Potential Alternative Outcomes

The incident has far-reaching implications, and the potential outcomes are multifaceted. The situation could either strengthen or weaken the ethical considerations surrounding AI development.

- One possible outcome is that the incident could prompt a wider discussion on AI ethics within the tech industry. This could lead to more stringent regulations and improved practices in AI development, resulting in more responsible development and deployment of AI systems.

- Another possible outcome is that the incident could lead to a chilling effect on open discussion and dissent within the AI research community. This outcome might result in less transparency and fewer challenges to the status quo in AI research, hindering the progress of the field.

Future Considerations

The Timnit Gebru incident serves as a stark reminder of the ethical and societal implications of AI development. Her dismissal, amidst concerns about the potential harm of certain AI applications, highlights the urgent need for a more transparent and accountable approach to research and development. This requires a shift in mindset, from prioritizing profit to prioritizing ethical considerations and public well-being. The dismissal raises fundamental questions about the responsibility of tech companies in fostering a safe and inclusive environment for researchers, particularly those working on potentially controversial topics.

This incident underscores the necessity of proactive measures to mitigate future occurrences of similar issues, and to build a more collaborative and understanding relationship between researchers and the corporations they serve.

Potential Future Implications

The dismissal of Timnit Gebru has far-reaching implications, potentially affecting the future trajectory of AI research. Researchers may be hesitant to voice concerns about harmful applications, fearing retribution or jeopardizing their careers. This could lead to a chilling effect on open discourse, hindering the identification and mitigation of potential risks associated with AI development. Moreover, the incident could damage the reputation of tech companies, impacting their ability to attract and retain top talent.

Strategies to Prevent Similar Incidents

Establishing clear ethical guidelines and review processes for AI research is crucial. These guidelines should encompass not only technical aspects but also social and ethical implications. Companies should develop robust mechanisms for researchers to voice concerns without fear of reprisal. This could include confidential reporting channels, independent review boards, and mechanisms for addressing concerns about bias and potential harm. Furthermore, promoting a culture of open dialogue and constructive feedback within research teams is essential.

This can be achieved through regular ethical reviews, workshops, and training programs that encourage critical thinking and diverse perspectives.

Promoting Open Dialogue and Constructive Feedback

Open dialogue between researchers and tech companies is critical. Creating platforms for researchers to express their concerns and perspectives without fear of retribution will help build trust and foster a culture of transparency. This includes actively seeking feedback from diverse groups, including marginalized communities, and incorporating their perspectives into the research process. Establishing clear communication channels and providing avenues for feedback will help prevent future incidents. For instance, Google could establish an independent ethics review board composed of researchers, ethicists, and community representatives.

This board could review AI projects for potential risks and provide recommendations for mitigating those risks. The board’s recommendations would be publicly available, increasing transparency and accountability.

Fostering Collaboration and Understanding

Building strong collaborative relationships between researchers and tech companies requires mutual respect and shared understanding. Companies should actively seek out researchers with diverse backgrounds and perspectives to ensure a more inclusive and representative research environment. This can be achieved through mentorship programs, funding opportunities, and initiatives that support researchers from underrepresented groups. Promoting interdisciplinary collaborations between researchers and stakeholders from various fields, such as sociology, law, and philosophy, can provide crucial insights into the potential societal impact of AI.

This will foster a more holistic understanding of the technology and its implications.

Creating Inclusive Research Environments

Creating inclusive research environments is essential for fostering innovation and addressing societal challenges. This includes actively recruiting and retaining researchers from diverse backgrounds, ensuring equitable access to resources, and creating a supportive work environment where everyone feels valued and respected. Implementing diversity and inclusion training programs for researchers and company leadership is crucial. Companies should also actively solicit feedback from researchers and other stakeholders on how to improve the inclusivity of their research environment.

The creation of mentorship programs tailored to the needs of underrepresented researchers can significantly enhance their career development and overall well-being.

Illustrative Examples

The Timnit Gebru case highlights the complex interplay between AI research, ethical considerations, and the need for diverse perspectives. Understanding the current state of AI, its limitations, and the potential for bias is crucial to fostering responsible development and deployment. This section provides concrete examples to illustrate these key points. The field of AI research is rapidly expanding, encompassing a broad range of techniques and applications.

From machine learning algorithms to deep learning architectures, the goal is to create systems that can learn from data, solve complex problems, and potentially automate tasks previously performed by humans. This includes areas like computer vision, natural language processing, and robotics.

AI Research Field

The field of AI research is diverse, encompassing theoretical advancements in algorithms, practical applications in various industries, and ongoing debates about ethical implications. Researchers explore different approaches to problem-solving, ranging from symbolic reasoning to neural networks, with each method possessing unique strengths and limitations.

Challenges and Limitations of Current AI Systems

Current AI systems face significant challenges and limitations. One major hurdle is the potential for bias in algorithms, often stemming from the data they are trained on. Another critical limitation is the lack of generalizability. Systems trained on one specific task may struggle to adapt to slightly different situations or new inputs. Furthermore, explainability remains a significant issue for many AI models.

Understanding the decision-making process of complex AI systems can be extremely difficult, making it challenging to trust their outputs.

Potential Biases in AI Algorithms

AI algorithms are susceptible to various biases present in the data they are trained on. These biases can manifest in several ways. For instance, if a dataset predominantly features images of light-skinned individuals, an AI trained on this data might perform poorly on images of darker-skinned individuals. Similarly, biases in language datasets can lead to biased language models.

Gender bias is another common concern, as is bias based on socioeconomic status or geographical location. These biases can have real-world consequences, potentially perpetuating existing societal inequalities.

Importance of Diversity in AI Research Teams

A diverse research team is crucial for developing unbiased and robust AI systems. Different perspectives, backgrounds, and experiences lead to a wider range of ideas and potential solutions. Diverse teams can identify and mitigate potential biases in data and algorithms more effectively. This is critical because AI systems are not inherently objective; they reflect the values and biases present in the data and the people who create them.

Examples of Successful Diversity Initiatives in Tech Companies

Several tech companies have implemented diversity initiatives to foster inclusive environments and encourage diverse representation in their AI research teams. These initiatives often involve mentorship programs, sponsorship opportunities, and employee resource groups focused on specific demographics. Such programs aim to provide support and create a sense of belonging for underrepresented groups, fostering innovation and creativity. Google’s internal programs and external partnerships are examples of successful initiatives, promoting diversity in the workforce.

Microsoft’s efforts also demonstrate the commitment of large companies to fostering a more inclusive work environment.

Final Thoughts

The firing of Timnit Gebru and her team at Google, following a public statement, highlights the complexities of ethical AI development. This event compels a deeper examination of the role of researchers, the responsibilities of tech companies, and the broader implications for the future of AI. The incident is likely to have lasting impacts on research methodologies, hiring practices, and public trust in AI.

Further discussion and analysis of alternative perspectives are essential for navigating this complex situation and moving forward in a more ethical and responsible manner.